Innovations in Data Center Power and Cooling Solutions

Data Centre Infrastructure News & Trends

Events

Innovations in Data Center Power and Cooling Solutions

A-Gas participating as a Gold Sponsor at DCN

Data Centre Infrastructure News & Trends

Innovations in Data Center Power and Cooling Solutions

News

Products

AVK launches modular PowerPods for data centres

Data Centre Infrastructure News & Trends

Innovations in Data Center Power and Cooling Solutions

Products

Daikin expands UK HVAC rental fleet

Data Centre Build News & Insights

Data Centre Infrastructure News & Trends

Innovations in Data Center Power and Cooling Solutions

Liquid Cooling Technologies Driving Data Centre Efficiency

Sustainable Infrastructure: Building Resilient, Low-Carbon Projects

Veolia, Amazon develop data centre water reuse system

Data Centre Business News and Industry Trends

Data Centre Infrastructure News & Trends

Innovations in Data Center Power and Cooling Solutions

Insights into Data Centre Investment & Market Growth

Liquid Cooling Technologies Driving Data Centre Efficiency

Vertiv acquires Strategic Thermal Labs

Data Centre Build News & Insights

Data Centre Infrastructure News & Trends

Data Centre Projects: Infrastructure Builds, Innovations & Updates

Innovations in Data Center Power and Cooling Solutions

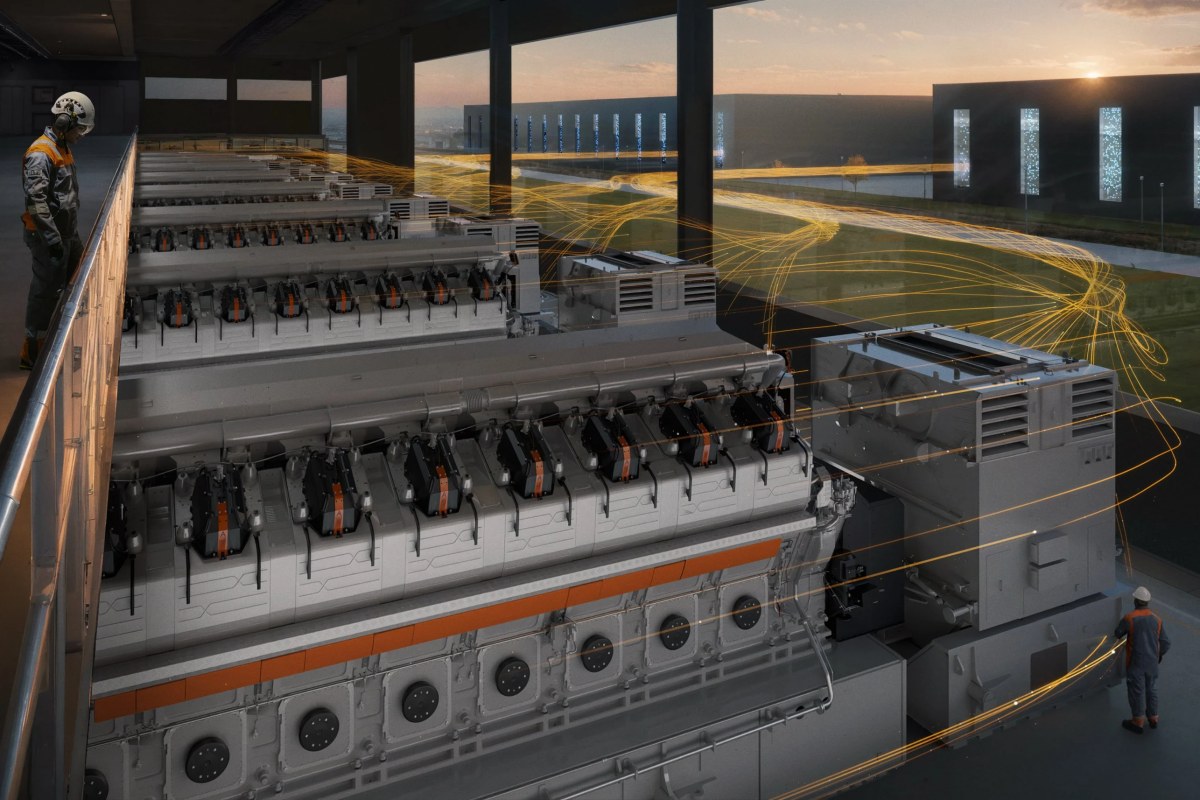

Wärtsilä secures 790MW Texas data centre deal

Data Centre Infrastructure News & Trends

Innovations in Data Center Power and Cooling Solutions

News

Carrier opens €12m Montluel HVAC testing facility

Data Centre Infrastructure News & Trends

Innovations in Data Center Power and Cooling Solutions

News

EPRI, OCP aim to advance DCs as flexible grid resources

Data Centre Business News and Industry Trends

Data Centre Infrastructure News & Trends

Innovations in Data Center Power and Cooling Solutions

Insights into Data Centre Investment & Market Growth

LS Electric wins $115m data centre contract

Data Centre Business News and Industry Trends

Data Centre Infrastructure News & Trends

Innovations in Data Center Power and Cooling Solutions

Insights into Data Centre Investment & Market Growth

Mission Critical Group invests in WattEV