Artificial Intelligence in Data Centre Operations

MPs warn grid failures could cost Britain the AI race

The All-Party Parliamentary Group (APPG) for Data Centres has published its Insights Paper, summarising findings from its inaugural 'Call for Evidence'.

The APPG is a cross-party group of UK MPs and Peers that fosters parliamentary understanding of data centre development, examines sector challenges (particularly planning, energy, resilience, and sustainability), and makes evidence-based policy recommendations to support UK digital infrastructure and economic growth.

Notably, respondents to its Call for Evidence signalled a substantial appetite to invest in UK data centre infrastructure.

Operators including Ark Data Centres, Nebius, Pure DC, and VIRTUS collectively identified £11–12 billion in specific investment plans, while Microsoft's submission committed a further £22 billion to UK AI infrastructure.

Despite this intent, respondents consistently described a set of interconnected structural barriers constraining the pace and location of development:

• Grid access and energy supply ranked as the sector's top priority, with 52% of respondents placing it first.

• Planning was placed in the top three by nearly four in five respondents (79%), while energy costs, sustainability, water use, and skills also featured prominently across submissions.

• The APPG is particularly keen to hear further evidence from community representatives, local authorities, and organisations with experience of data centre development outside London and the South East.

Parliamentary comments

Chris Curtis MP, Chair of the Data Centres APPG, notes, "This Call for Evidence shows that while significant investment is ready to support the UK's expanding AI and digital economy, it remains constrained by grid access, energy costs, and planning inconsistencies.

“The APPG will use this evidence over the coming year to work constructively with stakeholders and the Government to ensure that there is a well-informed view on how data centre infrastructure drives our national economic growth."

Alison Griffiths MP, Vice-Chair of the Data Centres APPG, adds, “The submissions to this Call for Evidence make clear that the barriers to data centre development are not insurmountable.

"They highlight gaps in the consistent application of planning policy by local authorities, as well as the need to ensure electricity cost competitiveness is felt across every part of the country.

"It is clear there are practical steps the Government can take to strengthen the UK’s leadership in digital infrastructure [and] I look forward to exploring these issues further in our upcoming evidence sessions.”

David Reed MP, Officer of the Data Centres APPG, highlights, "The submissions from academic institutions such as Exeter, Durham, and Oxford remind us that research computing infrastructure is increasingly cost-prohibitive for academia. This gap risks undermining the UK's long-term international scientific competitiveness.

“As the APPG deepens its work, I look forward to hearing from a broad range of stakeholders in this vital debate and developing practical solutions that support a thriving data centre ecosystem.”

The Rt Hon. the Lord (Philip) Hunt of King’s Heath OBE, Officer of the Data Centres APPG, concludes, "Sustainability is not a secondary consideration for this sector; it is central to its long-term viability and its licence to operate in communities across the UK.

“The evidence on waste heat recovery is particularly striking: the UK is currently capturing just 3–5% of the heat generated by data centres, against a backdrop of a national housing and energy challenge that demands innovative solutions. The APPG will be pressing hard on what policy levers can unlock this opportunity."

Joe Peck - 28 May 2026

Fluke warns AI boom exposes 'confidence crisis'

As artificial intelligence (AI) demand accelerates, new research from Fluke Corporation, a US manufacturer of electronic test and measurement tools, suggests a growing confidence crisis among data centre professionals, raising concerns about the sector’s ability to scale reliably.

A survey of more than 150 data centre professionals, conducted at Data Centre World London 2026, found that only 22% fully trust that their test and measurement data reflects real-world operating conditions. Confidence drops further under pressure, with just 19% expressing full trust in data accuracy during peak load or failure scenarios.

Several factors are driving this lack of confidence in infrastructure data. Skills and training gaps were cited as the biggest barrier (43%), followed by time pressures during commissioning (16%), inconsistent testing processes (11%), and budget constraints (10%).

The operational impact is seemingly already being felt. Half of respondents reported experiencing unplanned outages or major performance disruptions at least annually, with nearly one in five experiencing disruptions as frequently as monthly (10%) or weekly (8%).

Outdated testing equipment is compounding the issue, with nearly two thirds (65%) saying legacy tools increase the risk of downtime and compliance failures within their organisation.

Speed vs compliance trade-offs emerge

The research exposes a widening gap between intent and execution. While almost all respondents agree that regular maintenance is critical to reducing downtime, only 28% have real-time or predictive monitoring in place across critical infrastructure such as power, cooling, and networks. One fifth admit maintenance is conducted quarterly at most.

Adoption of advanced technologies also reportedly remains limited. Just 10% have fully implemented automation, AI diagnostics, or predictive monitoring, while many remain in pilot (22%) or early-stage (19%) phases.

Pressure to deliver data centre capacity faster is also creating new risks. Some 42% of respondents said time pressures create occasional compliance risks, while 17% said such pressures make it significantly harder to meet evolving connector and certification requirements.

Mike Slevin, Director of EMEA Market at Fluke Corporation, comments, “What’s striking here is that organisations already know what needs to be done. There’s broad recognition that regular maintenance and better monitoring are critical to reducing downtime, yet, in practice, adoption is lagging.

“That gap between awareness and action is where risk builds. When testing isn’t consistent and monitoring isn’t real-time, small issues can quickly escalate into outages.”

UK readiness in question as AI ambitions grow

The findings also cast doubt on the UK’s ability to support its ambitions to become a global AI leader. Only half of respondents believe the UK data centre sector is operationally ready to scale for AI, cloud, and hyperscale demand over the next five years.

Additionally, just 7% believe the UK currently has the infrastructure resilience and operational standards required to support its “AI superpower” ambitions, with 28% pointing to significant infrastructure gaps.

Mike continues, “AI is redefining the demands placed on data centre infrastructure. With higher-density architecture and increasingly complex fibre environments, multi-fibre testing has become paramount as the margin for error narrows.

“If organisations can’t confidently validate performance under real-world conditions, they risk building AI on unstable foundations. The challenge now is ensuring that capacity is resilient and ready for sustained demand.”

Joe Peck - 27 April 2026

Rebellions, SKT, Arm partner on AI infrastructure

Rebellions, a South Korean semiconductor company, has announced a collaboration with South Korean telecommunications company SK Telecom (SKT) and British semiconductor and software design company Arm to develop AI inference infrastructure for sovereign AI and telecom-focused data centres.

The partnership will focus on building an AI server combining an Arm-designed data centre CPU with Rebellions’ AI accelerators. The system will be tested within SK Telecom’s data centre environment before potential wider deployment.

The initiative is intended to address growing demand for AI inference infrastructure, particularly in sectors requiring data sovereignty and telecom-specific processing capabilities.

The planned platform will integrate Arm’s AGI CPU, based on the Neoverse CSS V3 architecture, with Rebellions’ RebelCard accelerator. The companies will also work together on the supporting software stack, including firmware, to ensure compatibility and performance.

Development and validation of the AI server platform

Testing will take place in SK Telecom’s operational data centres, where the infrastructure will be assessed for performance and stability. This includes evaluating its suitability for large-scale data processing and AI models used in telecommunications environments.

There are also plans to assess the use of SK Telecom’s A.X K1 foundation model on the platform as part of the validation process.

Following testing, the companies will consider broader deployment opportunities, with a focus on telecom operators and public sector organisations that require independent AI infrastructure.

Jinwook Oh, CTO of Rebellions, says, “We expect this 'one-team' collaboration of experts to serve as a significant precedent in the industry for building AI-specialised infrastructure.”

Jaeshin Lee, Vice President and Head of AI Business Development at SK Telecom, adds, “By providing a full package that combines inference-optimised infrastructure with our proprietary foundation model, A.X K1, we will further strengthen our competitiveness in the AI data centre market.”

Eddie Ramirez, Vice President of the Cloud AI Business Unit at Arm, notes, “As AI infrastructure expands globally, CPUs play a critical role in coordinating workloads across accelerators, memory, and networking.

"Arm AGI CPU, built on Arm Neoverse CSS V3, was designed to deliver the performance and efficiency required for large-scale AI deployments. Together with Rebellions and SK Telecom, we’re enabling scalable infrastructure for sovereign AI and telecommunications markets.”

Joe Peck - 13 April 2026

SambaNova, Intel unveil hybrid AI platform

SambaNova, a company specialising in AI hardware and software, and American multinational technology company Intel have announced a new hybrid-chip platform designed to address data centre capacity constraints linked to AI workloads.

The architecture combines GPUs for prefill processing, Intel Xeon 6 processors for system control and workload execution, and SambaNova’s reconfigurable dataflow units (RDUs) for inference decoding.

The platform is expected to be available in the second half of 2026 for enterprise, cloud, and sovereign AI deployments.

The design targets agent-based AI workloads, which require coordinated processing across multiple stages, including data input, model inference, and execution of external tools and applications.

Hybrid approach to AI infrastructure

The platform reflects a shift towards heterogeneous computing in data centres, where different processor types are used for specific tasks rather than relying solely on GPUs.

In this model, GPUs handle the initial processing of prompts, while RDUs manage high-throughput inference tasks. Xeon 6 processors act as both the host system and execution layer, coordinating workloads, running code, and managing interactions with external systems.
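The division of labour described above can be pictured as a simple routing table. The following is a minimal sketch only: the stage names, the `STAGE_TO_PROCESSOR` mapping, and the `route` function are hypothetical illustrations and do not represent any SambaNova or Intel API.

```python
from enum import Enum


class Stage(Enum):
    PREFILL = "prefill"      # initial processing of the prompt
    DECODE = "decode"        # high-throughput inference decoding
    TOOL_CALL = "tool_call"  # running code or calling external systems


# Hypothetical mapping that mirrors the roles described in the article.
STAGE_TO_PROCESSOR = {
    Stage.PREFILL: "GPU",
    Stage.DECODE: "RDU",
    Stage.TOOL_CALL: "Xeon 6",
}


def route(stages):
    """Return (stage name, processor type) pairs for one agent request."""
    return [(stage.value, STAGE_TO_PROCESSOR[stage]) for stage in stages]


# An agent request that decodes, calls a tool, then decodes again.
plan = route([Stage.PREFILL, Stage.DECODE, Stage.TOOL_CALL, Stage.DECODE])
for stage_name, processor in plan:
    print(f"{stage_name:>9} -> {processor}")
```

In a real deployment the host CPU would also coordinate the hand-offs between stages; the sketch shows only which processor type each stage is assigned to.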

Rodrigo Liang, CEO and co-founder of SambaNova Systems, explains, “Agentic AI is moving into production, and the winning pattern we’re seeing is GPUs to start the job, Intel Xeon 6 to run it, and SambaNova RDUs to finish it fast.

"Together with Intel, we’re giving customers a blueprint they can deploy in existing air-cooled data centres, with broad x86 coverage for the coding agents and tools they already use today.”

Kevork Kechichian, Executive Vice President and General Manager of the Data Center Group at Intel, adds, “The data centre software ecosystem is built on x86 and it runs on Xeon, providing a mature, proven foundation that developers, enterprises, and cloud providers rely on at scale.

"Workloads of the future will require a heterogeneous mix of computing, and this collaboration with SambaNova delivers a cost-efficient, high-performance inference architecture designed to meet customer needs at scale, powered by Xeon 6.”

The companies state that the approach is intended to support increasing demand for AI inference, particularly as agent-based systems move from testing into production environments.

Additional industry participants highlighted the growing need for scalable infrastructure to support coding agents and similar workloads, which rely on CPUs for execution alongside accelerators for inference.

The announcement marks an expansion of the existing collaboration between SambaNova and Intel, with a focus on enabling large-scale AI deployment across data centre environments.

Joe Peck - 10 April 2026

Artificial Intelligence in Data Centre Operations

Data Centre Build News & Insights

Data Centre Operations: Optimising Infrastructure for Performance and Reliability

Exploring Modern Data Centre Design

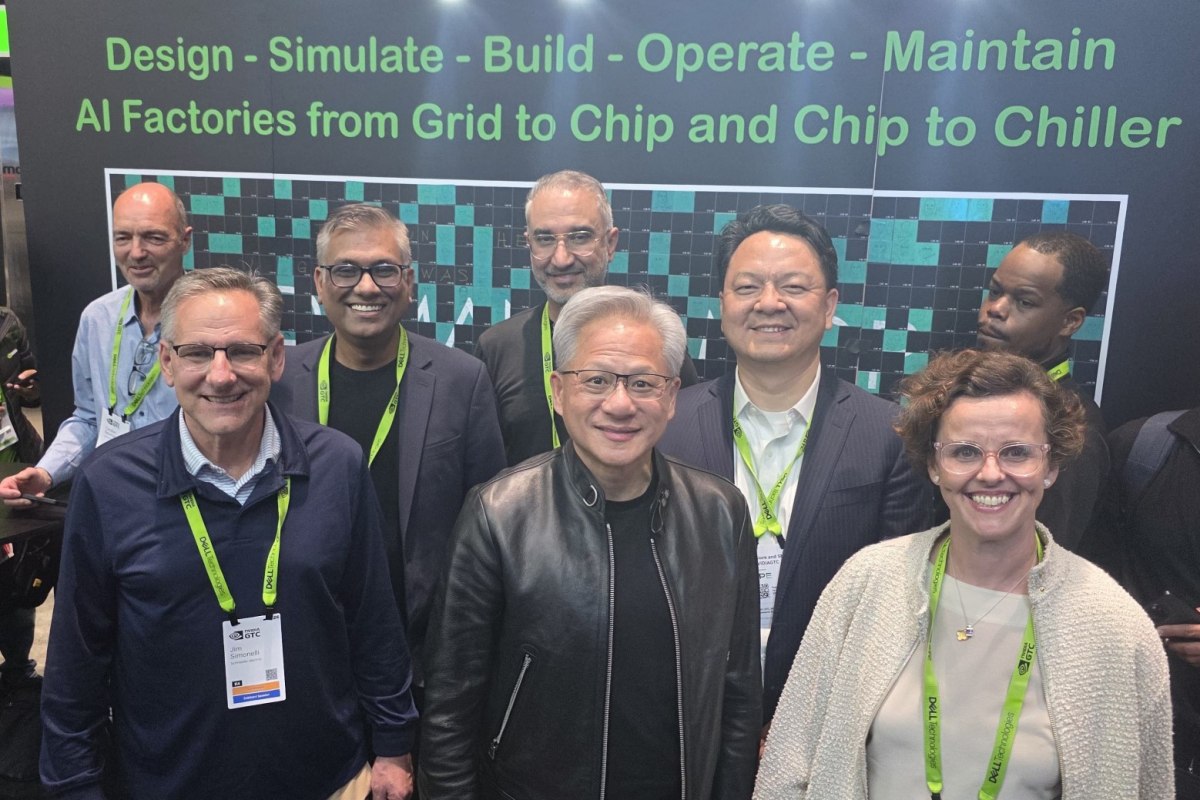

Schneider, NVIDIA to advance AI data centre design

Global energy technology company Schneider Electric has expanded its collaboration with NVIDIA to develop validated designs and digital tools for large-scale AI data centres.

Working alongside AVEVA, the companies outlined new developments in designing, simulating, building, and operating AI infrastructure during NVIDIA GTC in San Jose, USA.

These include a reference design for NVIDIA’s latest rack-scale systems, integration of digital twin capabilities, and testing of AI-driven tools for managing data centre alarms.

The announcements focus on supporting large-scale AI deployments, sometimes referred to as “AI factories”, with an emphasis on power, cooling, and operational efficiency.

Reference design and digital twin integration

A new reference design has been developed for NVIDIA’s Vera Rubin NVL72 rack architecture, covering power distribution and cooling requirements. The design supports higher supply voltage, improved thermal efficiency, and clustered rack configurations for AI workloads.

It has been validated using electrical system and airflow modelling tools to assess performance before deployment.

In parallel, AVEVA has introduced a lifecycle digital twin architecture integrated within the NVIDIA Omniverse environment. This enables simulation of power, cooling, and operational conditions, allowing operators to test and refine designs prior to construction.

According to the companies, this approach is intended to reduce design cycles, improve accuracy, and support more efficient deployment of AI infrastructure.

Manish Kumar, Executive Vice President, Secure Power & Data Centers at Schneider Electric, comments, “As AI workloads scale in both size and complexity, the margin for error in data centre design becomes incredibly small.

“Delivering AI at scale requires tightly integrated electrical, cooling, and digital architectures that can support both unprecedented performance demands while maintaining peak energy efficiency.

"By combining advanced software, digital twins, and validated reference designs, operators can simulate and optimise infrastructure before a single rack is deployed. This approach reduces risk, accelerates deployment, and ensures the efficiency and resilience needed to power the next generation of AI factories.”

Vladimir Troy, Vice President of AI Infrastructure at NVIDIA, adds, “Gigawatt-scale AI factories demand a fundamentally new class of energy-efficient and highly predictable infrastructure.

“Together, NVIDIA and Schneider Electric are providing the power, cooling, and digital twin architectures needed to accelerate time-to-token for our customers worldwide.”

AI-based alarm management testing

Schneider Electric also confirmed testing of an AI-based alarm management capability using NVIDIA Nemotron models.

The system analyses real-time data from multiple sources to identify root causes of issues and recommend corrective actions. The aim is to support data centre operators in resolving incidents more quickly and consistently, while reducing unnecessary maintenance activity.

The latest developments build on ongoing collaboration between the companies, including work on digital twin environments, power system modelling, and support for higher-voltage data centre architectures.

Joe Peck - 18 March 2026

Siemens expands data centre ecosystem for AI infrastructure

German multinational technology company Siemens has expanded its data centre partner ecosystem to support the growth of next-generation artificial intelligence infrastructure, focusing on the integration of compute, power, and operational systems.

The expansion includes a strategic investment in Emerald AI, a collaboration with PhysicsX, and the integration of energy storage technologies from Fluence.

As AI adoption accelerates, data centre operators are facing increasing constraints around power availability and grid connection timelines. Siemens says the expanded ecosystem is intended to improve flexibility across infrastructure, helping operators scale capacity while maintaining reliability in power-constrained environments.

Coordinating compute and energy systems

Emerald AI’s technology enables AI workloads to shift in time and location to align with grid conditions, allowing data centre demand to respond dynamically to available power. This approach is designed to reduce peak demand pressures and support faster grid connections.
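To illustrate the general idea of shifting workloads in time, the sketch below places deferrable compute into the hours with the most spare grid capacity. It is an assumption-laden toy example, not Emerald AI's method: the function name, the hourly figures, and the greedy strategy are all hypothetical, and shifting between locations is not modelled.

```python
def schedule_flexible_load(baseline_mw, grid_limit_mw, flexible_mwh):
    """Greedily place deferrable energy into the hours with most headroom.

    baseline_mw:   non-deferrable load in each one-hour slot (MW)
    grid_limit_mw: power available from the grid in each slot (MW)
    flexible_mwh:  total deferrable energy to place (MWh)
    Returns the flexible load assigned to each slot (MW).
    """
    headroom = [
        max(limit - base, 0.0)
        for base, limit in zip(baseline_mw, grid_limit_mw)
    ]
    assigned = [0.0] * len(headroom)
    remaining = flexible_mwh
    # Visit slots from most to least spare capacity.
    by_headroom = sorted(
        range(len(headroom)), key=lambda h: headroom[h], reverse=True
    )
    for hour in by_headroom:
        if remaining <= 0:
            break
        take = min(headroom[hour], remaining)
        assigned[hour] = take
        remaining -= take
    if remaining > 1e-9:
        raise ValueError("not enough headroom to place all flexible load")
    return assigned


# Hypothetical figures: 60 MW steady load, varying grid availability.
baseline = [60, 60, 60, 60]
limits = [100, 70, 90, 65]
flexible = schedule_flexible_load(baseline, limits, flexible_mwh=60)
total = [b + f for b, f in zip(baseline, flexible)]
print(flexible)
print(total)
```

With these illustrative numbers, the deferrable work lands in the first and third hours, where the grid has the most spare capacity, so total demand never exceeds the limit in any hour.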

Fluence’s battery energy storage systems (BESS) are intended to help operators manage large-scale AI workloads by shaping energy demand and supporting more predictable load profiles. The systems can also provide on-site power during grid constraints or outages, supporting operational continuity.

In addition, Siemens is working with PhysicsX to apply physics-based AI modelling to data centre power distribution systems. Using simulation data, the approach enables engineers to model thermal behaviour in real time, reducing design times and supporting optimisation for dynamic AI workloads.

Siemens said the combined ecosystem brings together workload orchestration, energy infrastructure, and AI-driven modelling to address the growing complexity of data centre design and operation as AI demand increases.

Joe Peck - 17 March 2026

Sponsored

'Agentic core networks shape 6G, unlocking new business'

At MWC Barcelona 2026, Dr Wen Tong, Huawei Wireless CTO, delivered a keynote speech on the 6G core network. He introduced Agentic Core Networks, which he described as a revolutionary 6G-orientated AI core network architecture driven by agentic AI, and explained that the architecture integrates application creation with network customisation to deliver intent-as-a-service, enabling operators to explore new business models and drive growth in the 6G era.

Agentic AI technology is rapidly shifting applications and services from human-centric to agent-centric. This transition is already fuelling an explosion in data traffic, with global token consumption surging by over 100 times in the past year and traffic from AI-training web crawlers increasing 21-fold. At the same time, AI agents have seen rapid adoption in enterprise scenarios, with 80% of Fortune 500 companies now integrating them into their operations.

AI will be a pivotal enabler of 6G. From AI-enabled terminals to AI-powered wireless networks and AI core networks, the industry is exploring ways to integrate AI into end-to-end 6G systems to improve spectral and energy efficiency, as well as to establish a robust foundation for the rapid growth of AI applications. In this transformation, the role of the AI core network is particularly critical. It will align with the advancing trends in AI technologies, reshaping the 6G core network by incorporating agentic AI. This transformation will unlock new service models and monetisation avenues, and expand business opportunities for operators.

The introduction of Agentic Core Networks

The Agentic Core Networks architecture brings a fundamental shift to service processes. Traditionally, all operations were carried out based on predefined procedures. However, the AI core network utilises Agentic NAS to proactively detect user needs, predict user intent ahead of OTT applications, and autonomously generate, execute, and continuously optimise personalised services through multi-agent collaboration. This transition enables fully automated operations, reduces total cost of ownership (TCO), and ensures a superior user experience, marking a shift from fixed connections to intent-driven services.

Agentic Core Networks will become integrated platforms of network functions, operator services, and third-party tools. This architecture enables service applications to be dynamically onboarded and iterated like plug-ins, cutting service rollout time from weeks down to minutes. More than a technological advancement, this marks a strategic shift in operators' business models: from providing connectivity to delivering intelligent services, from passively meeting user needs to proactively enabling service scenarios, and from relatively closed network systems to open ecosystems. Closed-loop capabilities spanning intent recognition, AI-driven generation, and ecosystem monetisation will be essential for operators seeking to capture value in the 6G era.

Agentic Core Networks capabilities will allow 6G to deliver precise services in high-value scenarios. For example, in dynamic hotspots such as stadiums or disaster recovery sites, 6G can be deployed on demand and reclaimed once the need subsides. In the short term, high-value applications, such as autonomous taxi dispatch or remote assistance by humanoid robots and AI-driven orchestration, will unlock new business opportunities. Ultimately, the architecture will help 6G strike the optimal balance between deployment costs and business value.

In his address, Wen concluded that the strategic priorities of Agentic Core Networks are becoming increasingly clear. He called for accelerating exploration in the 5G-A era to build a solid connectivity foundation for AI terminals and applications, powered by multi-dimensional network capabilities. This, he noted, represents the first step in the evolution of the entire industry ecosystem.

Looking ahead, Wen emphasised that with the advancement of 6G standards and technologies, Agentic Core Networks will enable collaboration between terminals and networks, foster scenario-specific applications, and cultivate a robust industry chain ecosystem. These efforts, he added, will infuse the entire mobile industry with new vitality and unlock new growth opportunities.

MWC Barcelona 2026 was held from 2 to 5 March in Barcelona, Spain. During the event, Huawei showcased its latest products and solutions at Stand 1H50 in Fira Gran Via Hall 1.

The era of agentic networks is now approaching fast, and the commercial adoption of 5G-A at scale is gaining speed. Huawei says it is actively working with carriers and partners around the world to unleash the full potential of 5G-A and pave the way for the evolution to 6G. It adds that it is also creating AI-Centric Network solutions to enable intelligent services, networks, and network elements (NEs), speeding up the large-scale deployment of level-4 autonomous networks (AN L4), and using AI to upgrade its core business. Together with other industry players, it says it will create leading value-driven networks and AI computing backbones for a fully intelligent future.

Joe Peck - 12 March 2026

Sponsored

Huawei: Accelerating towards the agentic internet era

At MWC Barcelona 2026, Li Peng, Huawei's Senior Vice President and President of ICT Sales & Service, delivered a keynote on how carriers can maximise the value of 5G-A and AI to accelerate towards the agentic internet era.

Li proposed that, as networks converge with AI, carriers have the opportunity to redefine the value of connectivity by upgrading to "5G-A x AI". He said this will allow them to monetise not only traffic and experience, but also AI services.

Leap in industry value: Entering a 10-trillion-dollar agentic internet era

Over the past few years, the mobile industry has steadily evolved from 4G to 5G, and some carriers have begun deploying 5G-A. As networks are stronger than ever, they are bringing intelligent applications to all kinds of devices.

Li said, "This year, we're entering the agentic internet era. Networks will not only connect people; they will also connect hundreds of billions of agents."

The rise of agent applications over the next decade will increase connectivity demands, as networks will facilitate not only human communication but also communication between agents. According to Huawei, this will drive carriers to shift from offering traffic to offering high-value services and open up a new market worth $10 trillion (£7.4 trillion).

Business model upgrade: Elevating brands and offerings to unlock new revenue streams

The evolution of network capabilities will also result in changes to carrier business models. In the seven years since the commercialisation of 5G, more than 300 carriers around the world have launched new packages to monetise traffic, and this has helped them grow both their revenue and user base.

As 5G networks continue to mature, experience monetisation will become increasingly essential to carriers' success. 5G SA and 5G-A provide more diverse network resources, which more than 30 leading carriers have used to launch experience-based packages that monetise speed, latency, and more.

By dynamically scheduling resources, carriers can go beyond "best-effort" service to deterministic experience. This helps them strengthen brand reputation and users' willingness to spend on premium services. By offering services like custom logo displays and multi-level speed boosts, carriers are able to guarantee network performance at critical moments and enhance users' perception of network quality.

Connectivity and AI service convergence: Unleashing new growth potential with AI-powered consumer, home, and enterprise services

Li also explained how carriers will be able to transform their main services and improve consumer satisfaction by applying AI models:

● AI for consumers: First, AI can be integrated into traditional calling services. There are currently 5.4 billion calling service users around the world, and AI can be used to unlock features like transcription, translation, and AI assistants. Many of these features have already entered large-scale commercial use in China and South Korea. In addition, more and more carriers are launching AI phones to act as portals for the agentic era. They are using these phones to upgrade their B2C services, the largest source of revenue for most carriers.

● AI for homes: In addition to the recent initiatives by carriers to upgrade home broadband towards ultra-gigabit, AI is also being implemented to enable smart home services. For example, acceleration assistants can guarantee deterministic speeds for key services like gaming and livestreaming. Network assistants can help people optimise their Wi-Fi and resolve network faults via voice commands. AI lifestyle assistants are also a promising avenue for carriers looking to unlock new value from traditional services. By integrating AI with video and storage services, they can, for example, automatically generate cloud-based family albums that can be shared between devices.

● AI for business: In industrial scenarios, the convergence of 5G-A and AI can be used to transform core workflows and significantly improve production efficiency. For example, in flexible manufacturing, AI-enabled factories will be able to respond to demand in seconds, schedule new production runs in minutes, and deliver new products in hours.

New vision: Helping carriers upgrade their portfolio with AI services

"Looking ahead, there are still many opportunities just waiting to be unlocked with 5G-A and AI, and carriers are in the best position to explore future applications like massive IoT and embodied AI," said Li at the event.

He also recommended three courses of action for carriers to seize these opportunities: First, carriers should evolve all services, devices, and frequency bands to 5G-A to create a thriving network ecosystem. Second, carriers should introduce AI into B.O.M. (business, operations, management) domains; this will provide a foundation for diversified O&M services. Third, carriers should bring intelligence to infrastructure to support the evolution of future network architecture.

"Huawei is ready to work closely with carriers to make the most of 5G-A and AI and help them evolve into AI service providers," concluded Li. "We can work with carriers to upgrade their main services through the multi-agent collaboration platform. We can also help them build AI-centric networks for more efficient operations. Together, we can unlock a world of new opportunities and lay a strong foundation for future networks."

MWC Barcelona 2026 was held from 2 to 5 March in Barcelona, Spain. During the event, Huawei showcased its latest products and solutions at Stand 1H50 in Fira Gran Via Hall 1.

Joe Peck - 11 March 2026

Sponsored

Huawei showcases industrial intelligence at MWC 2026

During MWC Barcelona 2026, Chinese multinational technology company Huawei, together with its customers, released 115 industrial intelligence showcases at its Industrial Digital and Intelligent Transformation Summit 2026.

The summit, titled 'Advancing Industrial All Intelligence', was held by Huawei to explore new practices in industrial intelligence with its customers, partners, and peers. The company also announced the launch of upgrades to its SHAPE 2.0 partner framework.

In addition, Huawei showcased 22 new industrial intelligence solutions with partners for the electric power, manufacturing and retail, finance, transportation, oil and gas, ISP, media, public service, and smart city sectors.

Huawei proposed the ACT Pathway: A replicable intelligence framework

AI technologies have advanced rapidly over the last year, with reasoning models and agentic workflows both maturing and physical AI beginning to take off. This has allowed AI tools to begin entering core production scenarios and helped applications move from pilots to large-scale use. AI agents can now better understand and interact with the physical world and are capable of making decisions independently.

With this in mind, Huawei has introduced the ACT Pathway, which has been developed during its collaboration with global customers over the past few years.

The ACT framework specifies three key steps for achieving comprehensive industrial intelligence:

The first step is “assessing high-value scenarios”. So far, Huawei has helped customers identify over 1,000 core production scenarios where AI can play a big role.

The second step is “calibrating AI models with high-quality vertical data”. Huawei has built a six-layer AI security framework to ensure every stage of the AI lifecycle is secure and trustworthy.

The third step is “transforming business operations with AI talent”. Talent that understands both industry and AI is needed. Huawei says it develops this talent by focusing on four areas: hands-on practice programs, CANN open-source communities, vertical industry communities on Huawei Cloud, and ICT Academies.

Huawei worked with customers to release global industrial intelligence showcases

During the summit, a number of Huawei’s customers joined the company on stage to help launch 115 global showcases for industrial intelligence. They included executives from Eskom, Shandong Port Group, Converge ICT, HM Hospitales, and PetroChina (Beijing)’s Digital Intelligent Research Institute, CNPC. The showcases are intended to provide a reference for organisations in various sectors as they embark on their own journey towards intelligence.

MWC Barcelona 2026 is being held from 2 March to 5 March in Barcelona, Spain. During the event, Huawei is showcasing its latest products and solutions at Stand 1H50 in Fira Gran Via Hall 1.

For more information, click here to visit Huawei’s website.

For more from Huawei, click here.

Joe Peck - 4 March 2026

Vertiv launches AI predictive maintenance service

Vertiv, a global provider of critical digital infrastructure, has launched a new AI-powered predictive maintenance service, Vertiv Next Predict, aimed at modern data centres and facilities supporting AI workloads, including AI factories.

The managed service is designed to move maintenance away from time-based and reactive models, using data analysis to identify potential issues before they affect operations.

Vertiv says the service supports power, cooling, and IT systems with the aim of improving visibility and supporting more consistent infrastructure performance.

The company notes that, as AI workloads increase compute intensity, data centre operators are under pressure to maintain uptime and performance across increasingly complex environments. In this respect, predictive maintenance and advanced analytics are positioned as a way to support more informed operational decisions.

Ryan Jarvis, Vice President of the Global Services Business Unit at Vertiv, says, “Data centre operators need innovative technologies to stay ahead of potential risks as compute intensity rises and infrastructures evolve.

“Vertiv Next Predict helps data centres unlock uptime, shifting maintenance from traditional calendar-based routines to a proactive, data-driven strategy. We move from assumptions to informed decisions by continuously monitoring equipment condition and enabling risk mitigation before potential impacts to operations.”

AI-based monitoring and anomaly detection

Vertiv Next Predict uses AI-based anomaly detection to analyse operating conditions and identify deviations from expected behaviour at an early stage. A predictive algorithm then assesses potential operational impact to determine risk and prioritise responses.
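
The article does not describe how Vertiv implements this, so the following is only a generic sketch of the two-stage idea (flag deviations from expected behaviour, then rank them by potential impact). It swaps in a simple rolling z-score in place of whatever model the service actually uses; the function names, sensor values, threshold, and criticality weights are all hypothetical.

```python
from statistics import mean, stdev


def detect_anomalies(readings, window=5, threshold=3.0):
    """Flag readings that deviate strongly from the trailing window.

    Returns a list of (index, value, z_score) tuples. A rolling z-score is
    an illustrative stand-in, not Vertiv's algorithm.
    """
    anomalies = []
    for i in range(window, len(readings)):
        baseline = readings[i - window:i]
        mu = mean(baseline)
        sigma = stdev(baseline)
        if sigma == 0:
            continue  # flat baseline: z-score is undefined, so skip
        z = (readings[i] - mu) / sigma
        if abs(z) > threshold:
            anomalies.append((i, readings[i], z))
    return anomalies


def prioritise(anomalies_by_asset, criticality):
    """Rank assets by risk = largest |z| times a criticality weight."""
    scored = []
    for asset, anomalies in anomalies_by_asset.items():
        if not anomalies:
            continue  # nothing flagged for this asset
        worst = max(abs(z) for _, _, z in anomalies)
        scored.append((asset, worst * criticality.get(asset, 1.0)))
    return sorted(scored, key=lambda item: item[1], reverse=True)


# Hypothetical sensor traces: a coolant loop with a sudden temperature
# jump, and a UPS output voltage that stays within normal variation.
coolant_temp = [30.1, 30.0, 30.2, 29.9, 30.1, 30.0, 34.5]
ups_voltage = [230.0, 230.4, 229.8, 230.1, 230.2, 229.9, 230.3]

flagged = {
    "coolant_loop": detect_anomalies(coolant_temp),
    "ups": detect_anomalies(ups_voltage),
}
# Assumed weights expressing how much an outage of each asset would matter.
ranked = prioritise(flagged, {"coolant_loop": 2.0, "ups": 3.0})
```

In this sketch only the coolant loop is flagged, so it is the only asset in the ranked list. A real service would presumably draw on far richer models and site context than a single threshold.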

The service also includes root cause analysis to help isolate contributing factors, supporting more targeted resolution. Based on system data and site context, prescriptive actions are defined and carried through to execution, with Vertiv Services personnel performing the corrective measures.

According to Vertiv, this approach is intended to support earlier intervention and reduce the likelihood of unplanned outages by addressing issues before they escalate.

The service currently supports a range of Vertiv power and cooling platforms, including battery energy storage systems (BESS) and liquid cooling components. Vertiv says the platform is designed to expand over time to support additional technologies as data centre infrastructure evolves.

Vertiv Next Predict is intended to integrate as part of a broader grid-to-chip service architecture, with the aim of supporting long-term scalability and alignment with future data centre technologies.

For more from Vertiv, click here.

Joe Peck - 27 January 2026
