Artificial Intelligence in Data Centre Operations

VAST Data unveils its operating system for the 'thinking machine'

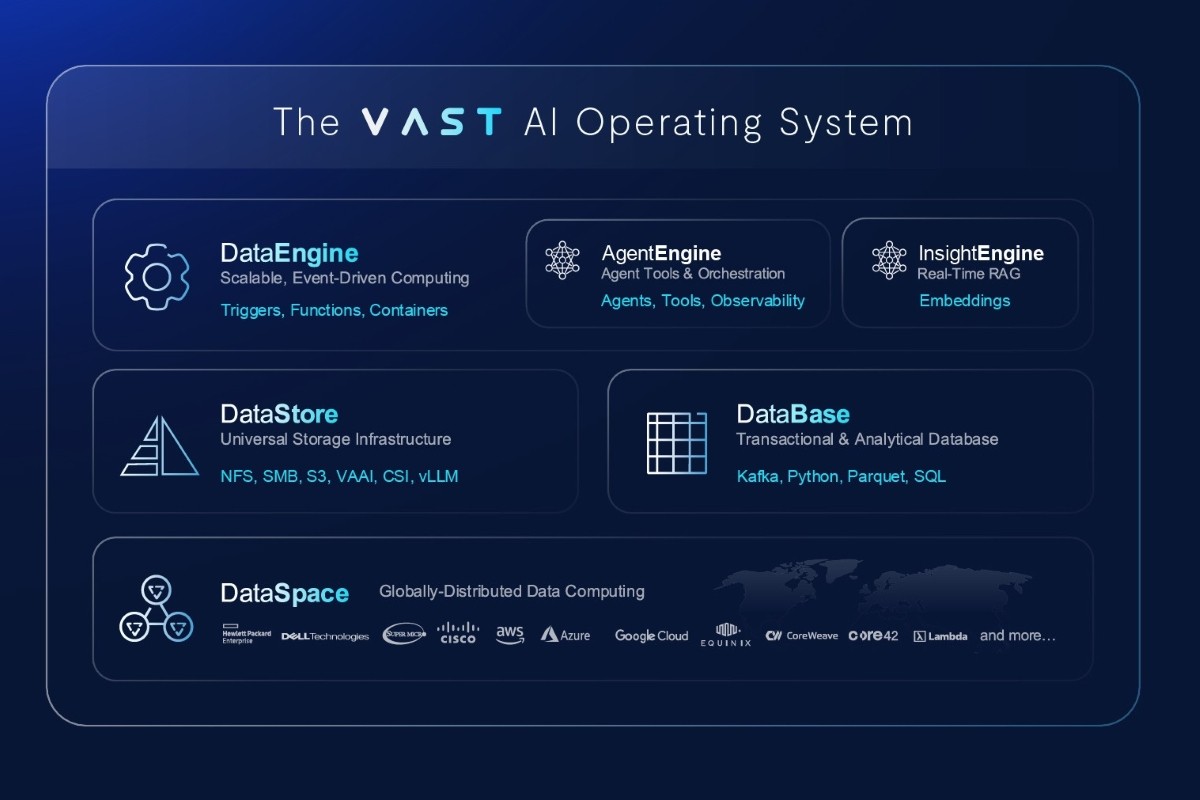

VAST Data, a technology company focused on artificial intelligence and deep learning computing infrastructure, today announced the result of nearly a decade of development with the unveiling of the VAST AI Operating System (OS), a platform purpose-built for the next wave of AI breakthroughs.

As AI redefines the fabric of business and society, the industry again finds itself at the dawn of a new computing paradigm – one where great numbers of intelligent agents will reason, communicate, and act across a global grid of millions of GPUs that are woven across edge deployments, AI factories and cloud data centres. To make this world accessible, programmable, and operational at extreme scale, a new generation of intelligent systems requires a new software foundation.

The VAST AI OS is the product of nearly ten years of engineering aimed at creating an intelligent platform architecture that can harness the new generation of AI supercomputing machinery and unlock the potential of AI at scale. The platform is built on VAST’s Disaggregated Shared-Everything (DASE) architecture, a parallel distributed system architecture that makes it possible to parallelise AI and analytics workloads, federate clusters into a unified computing and data cloud, and feed new AI workloads with large amounts of data from a single tier of storage. Today, DASE clusters support more than 1 million GPUs in many of the world’s most data-intensive computing centres.

The scope of the AI OS is broad and is intended to consolidate disparate legacy IT technologies into one modern offering.

“This isn’t a product release — it’s a milestone in the evolution of computing,” says Renen Hallak, Founder & CEO of VAST Data. “We’ve spent the past decade reimagining how data and intelligence converge. Today, we’re proud to unveil the AI Operating System for a world that is no longer built around applications — but around agents.”

The AI OS comprises every component a distributed system needs to run AI at global scale: a kernel for running platform services across private and public cloud, a runtime for deploying AI agents, eventing infrastructure for real-time event processing, messaging infrastructure, and a distributed file and database storage system for real-time data capture and analytics.

In 2024, VAST previewed the VAST InsightEngine – a service that extracts context from unstructured data using AI embedding tools. If the VAST InsightEngine prepares data for AI using AI, VAST AgentEngine is how AI now comes to life with data – an auto-scaling AI agent deployment runtime that aims to equip users with a low-code environment to build workflows, select reasoning models, define agent tools, and operationalise reasoning.

The AgentEngine features a new AI agent tool server that lets agents invoke data, metadata, functions, web search or other agents as tools compatible with the Model Context Protocol (MCP). AgentEngine allows agents to assume multiple personas, each with its own purpose and security credentials, and provides secure, real-time access to different tools. The platform’s scheduler and fault-tolerant queuing mechanisms are also intended to make agents resilient to machine or service failures.
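The announcement says agents can call data, functions and other agents as MCP-compatible tools, but it does not document AgentEngine's interface. As a rough illustration of what "MCP-compatible" means at the message level, the sketch below builds a Model Context Protocol `tools/call` request, which MCP carries as a JSON-RPC 2.0 message naming a tool and passing arguments that should match the tool's advertised `inputSchema`. The tool `query_table`, its fields and the helper `make_tool_call` are hypothetical and are not VAST APIs; the local check of required arguments is a convenience of this sketch, not something the protocol mandates.

```python
import json

# Hypothetical tool descriptor in the shape MCP servers advertise via "tools/list":
# a name, a description and a JSON Schema for the arguments.
# "query_table" and its fields are illustrative assumptions, not VAST AgentEngine APIs.
QUERY_TOOL = {
    "name": "query_table",
    "description": "Run a read-only query against a named table.",
    "inputSchema": {
        "type": "object",
        "properties": {
            "table": {"type": "string"},
            "limit": {"type": "integer"},
        },
        "required": ["table"],
    },
}


def make_tool_call(request_id: int, tool: dict, arguments: dict) -> str:
    """Build a JSON-RPC 2.0 'tools/call' request for the given tool (hypothetical helper)."""
    # Reject calls that omit arguments the tool's schema marks as required.
    required = tool["inputSchema"].get("required", [])
    missing = [key for key in required if key not in arguments]
    if missing:
        raise ValueError(f"missing required arguments: {missing}")
    message = {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {"name": tool["name"], "arguments": arguments},
    }
    return json.dumps(message)


request = make_tool_call(1, QUERY_TOOL, {"table": "sensor_readings", "limit": 10})
print(request)
```

Keeping the tool's contract in a JSON Schema is what lets an agent discover and call tools it was not written against; how AgentEngine maps personas and credentials onto such calls is not described in the source.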

Just as operating systems ship with pre-built utilities, the VAST AgentEngine will include a set of open-source agents, which VAST will release at a rate of one per month. Some will be tailored to industry use cases, while others will be designed for general-purpose use. Examples include:

● A reasoning chatbot, powered by all of an organisation’s VAST data

● A data engineering agent to curate data automatically

● A prompt engineer to help optimise AI workflow inputs

● An agent agent, to automate the deployment, evaluation, and improvement of agents

● A compliance agent, to enforce data and activity level regulatory compliance

● An editor agent, to create rich media content

● A life sciences researcher, to assist with bioinformatic discovery

In the spirit of enabling organisations to build on the VAST AI OS, VAST Data will be hosting VAST Forward, a series of global workshops, both in-person and online, throughout the year. These workshops will include training on components of the OS and sessions on how to develop on the platform.

Joe Peck - 23 May 2025

House of Lords AI summit highlights cyber threats

Technology industry leaders gathered in the House of Lords yesterday for a high-profile debate on the transformative role artificial intelligence (AI) will play in the UK jobs market.

The discussion, chaired by Steven George-Hilley of Centropy PR, brought together experts to address key industry challenges, including the digital skills shortage and AI’s potential to enhance compliance and accelerate digital transformation across key areas of the UK economy.

The debate highlighted the growing role of AI in reshaping traditional job roles and powering a new wave of relentless cyber threats which could damage British businesses.

Key speakers, including Richard Cuda of Kasha, discussed the role AI and digital technology can play in helping entrepreneurs launch their own business.

Leigh Allen, Strategic Advisor, Cellebrite, says, "In a world where police forces are under increasing strain to combat crime and national security threats, AI technology represents a key enabler in unlocking digital evidence and significantly reducing investigation times. Cellebrite delivers secure, ethical access to digital evidence, using AI to accelerate investigations while closing the digital skills gap for modern law enforcement. We don’t just respond to digital threats—we equip agencies to lead with confidence in a complex, tech-driven world."

Dr Janet Bastiman, Chief Data Scientist, Napier AI, comments, "Financial crime is one of the biggest threats facing the UK economy right now, and in AI we have the answer. AI-driven anti-money laundering solutions have the capacity to save UK financial institutions £2.2 billion each year, helping to bolster compliance processes, improve the accuracy of transaction screening, and monitor transaction behaviour to more effectively identify criminal networks."

Linda Loader, Software Development Director, Resonate, suggests, "AI has the potential to significantly enhance operations in the rail industry by enabling faster and more efficient services. But this must be underpinned by quality data to drive innovative solutions that prioritise security and robust protection for our critical national infrastructure. By exploring smaller AI use cases now, we can build a solid foundation and understanding for more extensive, secure transport applications in future."

Chris Davison, CEO, NavLive, mentions, "By using cutting-edge AI and robotics technology to create automated 2D and 3D models of buildings in real time, we can make retrofits and brownfield developments more efficient and contribute to sustainable building practices. NavLive saves architects, engineers and construction professionals time and money by providing accurate, real-time spatial data across the lifecycle of a building."

Richard Bovey, Chief for Data, AND Digital, states, "The AI winners are the businesses that have invested the most in AI experimentation, underpinned by years of strong data foundations; meanwhile, SMEs are quickly watching a widening AI gap. But all isn’t lost: investing in data and modern tooling can stop the slide, helping businesses to keep pace and preventing a significant competitive disadvantage from taking over."

Arkadiy Ukolov, Co-Founder and CEO, Ulla Technology, says, "As AI adoption continues to skyrocket, we must ensure that privacy and data security remain a critical component of development. Most of the popular AI tools send data to third-party AI providers, which may use client data to train models. This is unacceptable for sensitive meeting discussions and confidential documents, as it opens them up to data leaks. Placing safety and ethics at the centre of the discussion is the only route that we can take forward as AI evolves."

Joe Peck - 21 May 2025

AI set to supercharge cyber threats by 2027

The UK’s National Cyber Security Centre (NCSC) has released a landmark cyber threat assessment, warning that rapid advances in artificial intelligence (AI) will make cyber attacks more frequent, effective and harder to detect by 2027. The digital divide between organisations with the resources to defend against digital threats, and those without, will inevitably increase.

Published on the opening day of CYBERUK, the UK’s flagship cyber security conference, the report outlines how both state and non-state actors are already exploiting AI to increase the speed, scale and sophistication of cyber operations. Generative AI is enabling more convincing phishing attacks and faster malware development. This significantly lowers the barrier to entry for cyber crime and cyber intelligence.

Of particular concern is the rising risk to the UK’s democratic processes, Critical National Infrastructure (CNI) and commercial sectors. Advanced language models and data analysis capabilities are used to craft highly persuasive content, resulting in more frequent attacks that are difficult to detect.

Andy Ward, SVP International at Absolute Security, says, “While AI offers significant opportunities to bolster defences, our research shows 54% of CISOs feel unprepared to respond to AI-enabled threats. That gap in readiness is exactly what attackers will take advantage of.

“To counter this, businesses must go beyond adopting new tools; they need a robust cyber resilience strategy built on real-time visibility, proactive threat detection, and the ability to isolate compromised devices at speed.”

This latest warning forms part of the UK Government’s wider cyber strategy, which earlier this year saw the announcement of the new AI Cyber Security Code of Practice. The Code will form the basis of a new global standard to secure AI and ensure national security keeps pace with technological evolution, safeguarding the country against emerging digital threats.

DCNN staff - 8 May 2025

Fluidstack selects VAST Data to power AI workloads

VAST Data, an AI data platform company, today announced that Fluidstack, the AI cloud platform, has selected the VAST Data Platform to join other partners in helping to power large-scale, high-performance AI workloads for Fluidstack’s global customer base. With VAST, Fluidstack can deliver enterprise-grade stability, security, and innovation for some of the most demanding AI training environments in the world.

Fluidstack has built its business by managing end-customer workloads on third-party compute capacity, ranging from VAST-powered AI cloud service providers to dedicated GPU clusters built on behalf of clients. Pushing the boundaries of what managed services can offer, Fluidstack takes a flexible, problem-solving approach to helping end customers manage and scale their workloads with unmatched reliability and agility.

“Our mission at Fluidstack is to take the complexity out of deploying and scaling AI infrastructure for our customers,” says César Maklary, President & Co-Founder of Fluidstack. “VAST’s platform gives us the advanced enterprise capabilities we need to deliver reliable, scalable, secure, and future-proof AI infrastructure for our customers as they build cutting-edge models to further AI adoption.”

The VAST Data Platform provides Fluidstack’s end customers with:

• Reliable, secure data management: VAST’s enterprise-grade stability, multi-tenant security, and reliability were critical in supporting the demanding AI workloads that Fluidstack manages for customers, while the VAST DataStore’s multi-protocol support (S3, NFS, SMB) offered seamless interoperability for diverse application needs.

• Future-proof AI infrastructure: To further support Fluidstack in building, operating, and managing AI infrastructure and workloads for customers, the VAST DataEngine provides integrated vector search capabilities, automated triggers, and intelligent data processing functions designed for large-scale model training and inference. Combined with the real-time data awareness and scalable semantic indexing of the VAST InsightEngine, Fluidstack is well-positioned to deliver increasingly intelligent, responsive, and globally efficient AI infrastructure services.

• Fast access to distributed data at limitless scale: The VAST Data Platform’s Disaggregated Shared-Everything (DASE) architecture ensures these deployments can reach exabyte scale while remaining cost-efficient, helping Fluidstack empower organisations to use distributed datasets and enable globally synchronised model training.

• Bringing structure to unstructured data: The VAST DataBase serves as a transactional data lakehouse that supports trillions of vectors, allowing Fluidstack customers to index the entirety of their distributed data corpus for AI deployments and providing real-time data access for efficient querying, analysis, and retrieval of massive datasets.
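The bullets mention integrated vector search and a database holding trillions of vectors, but not how queries are executed. The generic sketch below shows the basic idea behind vector search: rank stored embedding vectors by cosine similarity to a query vector and return the top k. It is an exhaustive (brute-force) scan over a hypothetical in-memory index of toy three-dimensional vectors; the names `cosine_similarity`, `top_k` and `index` are illustrative, and at the scale described, systems typically rely on approximate nearest-neighbour indexes instead. Nothing here reflects VAST's actual implementation.

```python
import math
from typing import Dict, List, Tuple


def cosine_similarity(a: List[float], b: List[float]) -> float:
    """Cosine of the angle between two equal-length vectors (0.0 if either is all zeros)."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same dimension")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def top_k(query: List[float], index: Dict[str, List[float]], k: int = 2) -> List[Tuple[str, float]]:
    """Brute-force nearest-neighbour search: score every stored vector, keep the best k."""
    scored = [(doc_id, cosine_similarity(query, vec)) for doc_id, vec in index.items()]
    # Highest similarity first; ties broken by id so results are deterministic.
    scored.sort(key=lambda item: (-item[1], item[0]))
    return scored[:k]


# Hypothetical toy "embeddings"; production embeddings are usually far higher-dimensional.
index = {
    "doc-a": [1.0, 0.0, 0.0],
    "doc-b": [0.9, 0.1, 0.0],
    "doc-c": [0.0, 1.0, 0.0],
}
print(top_k([1.0, 0.05, 0.0], index, k=2))
```

Here `doc-a` and `doc-b` point almost the same way as the query and rank first and second, while the orthogonal `doc-c` scores close to zero and is left out.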

“Fluidstack’s innovative approach to AI infrastructure delivery requires a data platform that can operate globally, securely, and with the performance to match cutting-edge AI workloads,” says Renen Hallak, Founder & CEO of VAST Data. “Together with Fluidstack, we’re helping customers turn visionary projects into reality. The combination of Fluidstack’s dynamic managed services with VAST’s global data fabric and advanced enterprise features is unlocking new possibilities for AI model training and development at scale.”

Simon Rowley - 17 April 2025

Juniper and Google Cloud enhance branch deployments

Juniper Networks has announced its collaboration with Google Cloud to accelerate new enterprise campus and branch deployments and optimise user experiences. With just a few clicks in the Google Cloud Marketplace, customers can subscribe to Google’s Cloud WAN solution alongside Juniper Mist wired, wireless, NAC, firewalls and secure SD-WAN solutions.

Unveiled at Google Cloud Next 25, the solution is designed to simply, securely and reliably connect users to critical applications and AI workloads whether on the internet, across clouds or within data centres.

“At Google Cloud, we’re committed to providing our customers with the most advanced and innovative networking solutions. Our expanded collaboration with Juniper Networks and the integration of its AI-native networking capabilities with Google’s Cloud WAN represent a significant step forward,” says Muninder Singh Sambi, VP/GM, Networking, Google Cloud. “By combining the power of Google Cloud’s global infrastructure with Juniper’s expertise in AI for networking, we’re empowering enterprises to build more agile, secure and automated networks that can meet the demands of today’s dynamic business environment.”

AIOps key to GenAI application growth

As the cloud expands and GenAI applications grow, reliable connectivity, enhanced application performance and low latency are paramount. Businesses are turning to cloud-based network services to meet these demands. However, many face challenges with operational complexity, high costs, security gaps and inconsistent application performance. Assuring the best user experience through AI-native operations (AIOps) is essential to overcoming these challenges and maximising efficiency.

Powered by Juniper’s Mist AI-Native Networking platform, Google’s Cloud WAN, a new solution from Google Cloud, delivers a fully managed, reliable and secure enterprise backbone for branch transformation. Mist is purpose-built to leverage AIOps for optimised campus and branch experiences, assuring that connections are reliable, measurable and secure for every device, user, application and asset.

“Mist has become synonymous with AI and cloud-native operations that optimise user experiences while minimising operator costs,” says Sujai Hajela, EVP, Campus and Branch, Juniper Networks. “Juniper’s AI-Native Networking Platform is a perfect complement to Google’s Cloud WAN solution, enabling enterprises to overcome campus and branch management complexity and optimise application performance through low latency connectivity, self-driving automation and proactive insights.”

Google’s Cloud WAN delivers high-performance connections for campus and branch

The campus and branch services on Google’s Cloud WAN driven by Mist provide a single, secure and high-performance connection point for all branch traffic. A variety of wired, wireless, NAC and WAN services can be hosted on Google Cloud Platform, enabling businesses to eliminate on-premises hardware, dramatically simplifying branch operations and reducing operational costs. By natively integrating Juniper and other strategic partners with Google Cloud, Google’s Cloud WAN solution enhances agility, enabling rapid deployment of new branches and services, while improving security through consistent policies and cloud-delivered threat protection.

Carly Weller - 11 April 2025

Compu Dynamics launches AI and HPC Services unit

Compu Dynamics has announced the launch of its full lifecycle AI and High-Performance Computing (HPC) Services unit, showcasing the company’s end-to-end capabilities.

The expanded portfolio encompasses the entire spectrum of data centre needs, from initial design and procurement to construction, operation and ongoing maintenance, with a particular emphasis on cutting-edge liquid cooling technologies for AI and HPC environments.

Compu Dynamics’ new AI and HPC service offerings build on the company’s expertise in white space deployment, including advanced liquid cooling and post-installation services. As a vendor-neutral solutions provider, the company is uniquely positioned to support equipment from virtually every manufacturer with no geographical limitations, ensuring clients receive unbiased recommendations and optimal solutions tailored to their specific requirements.

“Our advanced AI and HPC service offerings represent a significant evolution in data centre services,” says Steve Altizer, President and CEO of Compu Dynamics. “We have created this team to respond to the accelerating demand for highly qualified technical support for high-density AI data centre infrastructure. By working with a variety of OEM partners and offering true end-to-end solutions, we are empowering our clients to focus on their core business while we handle the complexities of their modern critical infrastructure.”

The company’s holistic solutions portfolio addresses the growing need for specialised support in high-density computing environments. Compu Dynamics’ innovative liquid cooling solutions are said to offer superior efficiency and reduced energy consumption, making them essential for future-ready data centres. Key highlights of these service offerings include:

· Equipment evaluation, design consultation and procurement.

· Power distribution and liquid cooling system installation, startup, commissioning and quality assurance/quality control.

· Flexible maintenance service options designed for seamless, worry-free support including comprehensive fluid management, coolant sampling and contamination and corrosion prevention.

· Onsite staffing for day-to-day technical operations.

· Dedicated customer success manager.

· 24x7 emergency response team for technical issues and repair services.

“As AI and HPC workloads drive unprecedented demand on data centre infrastructure, our liquid cooling expertise has become increasingly crucial,” says Scott Hegquist, Director of AI/HPC Services at Compu Dynamics. “We’re committed to helping our clients navigate these challenges, providing cutting-edge solutions that optimise performance, efficiency and sustainability.”

Carly Weller - 11 April 2025

STT GDC India launches AI-ready campus in Kolkata, India

ST Telemedia Global Data Centres (India) (STT GDC India) is set to revolutionise the data centre landscape in Eastern India with the launch of its state-of-the-art AI-ready campus in New Town, Kolkata, India.

Spanning 5.59 acres, this next-generation campus is engineered to support the growing demands of AI computing with high-density rack configurations, advanced cooling systems, and a scalable, modular design. It aligns with the larger economic goals of the country to promote digitally enabled growth and broaden access to sustainable digital infrastructure.

The new-age facility has earned the prestigious TIA-942 Rated-3 Design certification, underscoring STT GDC India’s commitment to world-class infrastructure and reliability. The campus provides a significant boost to digital infrastructure in the eastern part of the country, with capacity scalable up to 25MW of overall IT load. Its power architecture uses an N+2C design for reliability and a radial N+N configuration for the main power incomers, ensuring dedicated feeder availability. The campus uses type-tested compact substations and low-voltage diesel generators (LV DGs), setting new standards in power reliability and efficiency.

Bimal Khandelwal, CEO of STT GDC India, says, “This expansion is a gateway to accelerating AI innovation in Eastern India. Our Kolkata campus is specifically designed to support the burgeoning AI ecosystem, from startups developing local language AI models to enterprises deploying large language models. The facility’s high-performance computing capabilities and low-latency connectivity will empower organisations to build and deploy AI solutions that drive digital transformation across sectors.”

The facility is built with concurrently maintainable infrastructure and no single points of failure (SPOF). Its modular design allows for liquid cooling technologies, supporting the next generation of high-performance computing workloads. The Kolkata data centre prioritises sustainability with a low-PUE (Power Usage Effectiveness) cooling design and conserves water through closed-loop cooling, rainwater harvesting and greywater reuse. The facility also uses low-GWP (global warming potential) refrigerants to reduce its carbon footprint, reinforcing STT GDC India's commitment to environmental responsibility.

Having launched in March 2025, this Kolkata facility expands STT GDC India's nationwide footprint to 30 data centres across 10 cities with a total IT load capacity of 400MW. Its strategic location in New Town’s Silicon Valley positions it as a crucial hub for AI development, serving enterprises, hyperscale cloud service providers and government organisations.

This investment aligns with India's growing focus on artificial intelligence and the increasing demand for AI-ready digital infrastructure. The facility will support diverse AI-driven initiatives, from natural language processing in regional languages to computer vision applications in manufacturing and healthcare, ensuring high reliability, energy efficiency and environmental sustainability.

Carly Weller - 7 April 2025

Raxio lands $100m to expand sub-Saharan African data centres

Raxio Group has signed an agreement for $100 million in financing from the International Finance Corporation (IFC) to accelerate Raxio’s expansion of data centres to power key technologies like AI, cloud computing and digital financial services – critical enablers of African economic growth and digital inclusion.

The debt funding from IFC will help Raxio double its deployment of high-quality colocation data centres within three years, addressing growing demand in underserved markets across the continent. The company is developing a Sub-Saharan African regional data centre platform in countries including Ethiopia, Mozambique, the Democratic Republic of Congo, Côte d’Ivoire, Tanzania and Angola.

Raxio is committed to bridging Africa’s digital divide by introducing Tier III-certified, carrier-neutral, and secure data services to markets that have been overlooked by other providers. With a focus on high-growth areas, the company is tapping into regions with significant economic potential to unlock new opportunities across the continent.

“Raxio’s business model shows how digital infrastructure can empower businesses, governments and communities to thrive in the digital economy,” says Sarvesh Suri, IFC Regional Industry Director, Infrastructure and Natural Resources in Africa. “This partnership between Raxio and IFC is set to strengthen Africa’s digital ecosystem and catalyse further investments and regional integration, building a more inclusive and sustainable future.”

“This funding from IFC is a powerful endorsement of Raxio’s vision and operational excellence,” says Robert Skjødt, CEO of Raxio Group. “It will allow us to bring critical infrastructure to the regions that need it most and attract further investment as we continue to grow. Together with our other partners, we’re building the foundation for Africa’s digital future and setting new benchmarks for sustainability.”

Raxio’s facilities are designed for 24/7 reliability, ensuring uninterrupted service even during maintenance or unforeseen disruptions. The company integrates renewable energy solutions to minimise its environmental footprint and uses innovative energy-efficient equipment to reduce electricity and water consumption for cooling in several of its countries of operation.

In the Democratic Republic of Congo, Raxio’s Kinshasa facility is poised to meet growing demand for data services in one of Africa’s largest and fastest-growing urban centres. In Côte d’Ivoire, Raxio is establishing a digital hub to serve Francophone West Africa, connecting regional markets and facilitating cross-border trade. These efforts are empowering local businesses and integrating them into the global digital economy.

Carly Weller - 7 April 2025

AirTrunk expands with second AI-ready data centre in Johor

Asia Pacific & Japan (APJ) hyperscale data centre specialist, AirTrunk, has announced plans to develop its second cloud and AI-ready data centre in Johor, Malaysia.

AirTrunk JHB2 will be located in Iskandar Puteri, Johor region. Scalable to over 270MW, JHB2 will support demand from global public cloud and technology companies in the region.

The JHB2 announcement follows the opening of AirTrunk’s first data centre in Johor, the 150+MW AirTrunk JHB1, in July 2024. Across the two sites, AirTrunk is investing more than RM 9.7 billion (A$3.5 billion) in Malaysia, providing more than 420MW of IT load.

JHB2, strategically located in a major availability zone, offers customers end-to-end cross-border connectivity and the ability to scale their operations to match demand. The additional capacity will support Malaysia’s fast-growing digital economy and follows the establishment of the landmark Johor-Singapore Special Economic Zone (JS-SEZ).

Like JHB1, the new data centre will feature AirTrunk’s state-of-the-art liquid cooling technology for managing the high-density demands of AI and will ensure significant energy savings. JHB2 is designed to meet the highest standards of efficiency and security, with a low design PUE (Power Usage Effectiveness) of 1.25 and multiple renewable energy options available to customers.
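PUE is the ratio of total facility energy to the energy delivered to IT equipment, so the design value of 1.25 quoted for JHB2 can be read directly as an overhead figure. A worked example per megawatt-hour of IT energy, assuming the design PUE holds over the period measured:

```latex
\mathrm{PUE} = \frac{E_{\mathrm{facility}}}{E_{\mathrm{IT}}}, \qquad
\mathrm{PUE} = 1.25,\; E_{\mathrm{IT}} = 1\,\mathrm{MWh}
\;\Rightarrow\;
E_{\mathrm{facility}} = 1.25\,\mathrm{MWh},\quad
E_{\mathrm{overhead}} = E_{\mathrm{facility}} - E_{\mathrm{IT}} = 0.25\,\mathrm{MWh}
```

That is roughly 0.25 MWh of cooling, power conversion and other non-IT load for every MWh reaching the servers, or 20% of the facility's total consumption (0.25 / 1.25). A design PUE is a target; operating PUE varies with load and climate.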

To support Johor State Government’s aim to diversify water sources, AirTrunk is scoping treated greywater as a recycled sustainable water supply for its campuses’ operations.

Aligned with the Malaysian Government’s focus on National Technical and Vocational Education and Training (TVET) and on increasing opportunities for highly skilled workers, AirTrunk is creating jobs for Malaysians, offering above-market remuneration, a workforce that is 90% local, and career development opportunities. AirTrunk is also contributing to digital literacy programmes and funding STEM education scholarships at the Universiti Teknologi Malaysia (UTM) to support the local community over the long term.

Advancing towards its net zero 2030 target, AirTrunk recently announced one of the largest onsite solar deployments for a data centre in Southeast Asia at JHB1, as well as the first renewable energy Virtual Power Purchase Agreement for a data centre under Malaysia’s Corporate Green Power Programme, covering 30MW of renewable energy.

AirTrunk is working with leading Malaysian utility company Tenaga Nasional Berhad (TNB) to connect JHB2 through TNB’s Green Lane Pathway for Data Centres initiative, which shortens the timeframe for delivering high-voltage electricity supply to 12 months. AirTrunk is also providing land for TNB to build a new substation, adding resilience to the area’s electricity distribution system. This continuing collaboration, which began with an MoU signed in 2023, opens the door for AirTrunk to explore green solutions with TNB to advance the energy transition in the region.

AirTrunk Founder & Chief Executive Officer, Robin Khuda, says, “As Malaysia establishes itself as a digital powerhouse, it is a privilege for AirTrunk to contribute to this growth over the long term and deliver shared benefit for the people of Malaysia. AirTrunk’s data centres serve as essential infrastructure that will help boost productivity and enable new products and services that can drive economic growth.

“We are committed to helping realise the potential of cloud and AI in Malaysia and prioritising circularity for the benefit of society and the environment. AirTrunk is supporting local digital literacy and STEM initiatives, driving the energy transition and working to embed a sustainable water supply to make a positive impact.”

Carly Weller - 12 February 2025

Progress Data Cloud platform launched

Progress, a provider of AI-powered digital experiences and infrastructure software, has announced the launch of Progress Data Cloud, a managed Data Platform as a Service designed to simplify enterprise data and artificial intelligence (AI) operations in the cloud. With Progress Data Cloud, customers can accelerate their digital transformation and AI initiatives while reducing operational complexity and IT overhead.

As global businesses scale their data operations and embrace AI, a robust cloud data strategy has become the cornerstone of success, enabling organisations to harness the full potential of their data for innovation and growth. Progress Data Cloud meets this critical need by providing a unified, secure and scalable platform to build, manage and deploy data architectures and AI projects without the burden of managing IT infrastructure.

“Organisations increasingly recognise that cloud and AI are pivotal to unlocking business value at scale,” says John Ainsworth, GM and EVP, Application and Data Platform, Progress. “Progress Data Cloud empowers companies to achieve this by offering a seamless, end-to-end experience for data and AI operations, removing the barriers of infrastructure complexity while delivering exceptional performance, security and predictability.”

Key features and benefits

Progress Data Cloud is a Data Platform as a Service that enables managed hosting of feature-complete instances of Progress Semaphore and Progress MarkLogic, with plans to support additional Progress products in the future. Core benefits include:

• Simplified operations: Eliminates infrastructure complexity with always-on infrastructure management, monitoring service, continuous security scanning and automated product upgrades.

• Cost efficiency: Reduces IT costs and bottlenecks with predictable pricing, resource usage transparency and no egress fees.

• Enhanced security: Helps harden security posture with an enterprise-grade security model that is SOC 2 Type 1 compliant.

• Scalability and performance: Offers superior availability and reliability, supporting mission-critical business operations, GenAI demands and large-scale analytics.

• Streamlined user management: Self-service access controls and tenancy management provide better visibility and customisation.

Progress Data Cloud accelerates time to production by offering managed hosting for the Progress MarkLogic Server database and the Progress MarkLogic Data Hub solution with full-feature parity. Customers can benefit from enhanced scalability, security and seamless deployment options.

Replacing Semaphore Cloud, Progress Data Cloud provides a next-generation cloud platform with all existing Semaphore functionality plus new features for improved performance, security, reliability, user management and SharePoint Online integration.

“As enterprises continue to invest in digital transformation and AI strategies, the need for robust, scalable and secure data platforms becomes increasingly evident,” says Stewart Bond, Vice President, Data Intelligence and Integration Software, IDC. “Progress Data Cloud addresses a critical market need by simplifying data operations and accelerating the development of AI-powered solutions. Its capabilities, from seamless infrastructure management to enterprise-grade security, position it as a compelling choice for organisations looking to unlock the full potential of their data to drive innovation and business value.”

Progress Data Cloud provides cloud-based hosting for the foundational products that make up the Progress Data Platform portfolio.

Progress Data Cloud is now available for existing and new customers of the MarkLogic and Semaphore platforms.

Simon Rowley - 24 January 2025

Head office & Accounts:

Suite 14, 6-8 Revenge Road, Lordswood

Kent ME5 8UD

T: +44 (0)1634 673163

F: +44 (0)1634 673173