Data Centre Infrastructure News & Trends

Innovations in Data Center Power and Cooling Solutions

News

EUDCA backs EU data centre energy integration plan

The European Data Centre Association (EUDCA), the representative body of the European data centre community, has co-signed a Declaration of Intent aimed at improving the integration of data centres within the European Union's energy system.

The agreement supports the objectives of the European Commission's Strategic Roadmap for Digitalisation and AI in the Energy Sector and seeks to strengthen cooperation between data centre operators, energy providers, grid operators, and public authorities.

As investment in AI, cloud computing, and digital infrastructure continues to increase across Europe, the declaration is intended to help establish common frameworks for planning and coordinating future infrastructure development.

According to the signatories, the initiative will contribute to the development of shared principles, procedures, and best practices that can be adopted by EU Member States to support sustainable growth in data centre capacity.

The declaration aligns with several European policy initiatives, including the Data Centre Energy Efficiency Package, the European Grids Package, and the proposed Cloud and AI Development Act.

Industry groups target closer energy sector collaboration

The declaration has been signed by organisations representing a broad range of sectors, including electricity networks, energy storage, renewable energy, district heating, and digital infrastructure.

Among the signatories are the EUDCA, Eurelectric, ENTSO-E, WindEurope, SolarPower Europe, Energy Storage Europe, and the EU DSO Entity.

Lex Coors, President of the EUDCA, says, "The energy system can no longer be viewed as a single connection to a single data centre. Europe is moving into a more complex, four-dimensional environment where capacity, flexibility, sustainability, and digital resilience must be planned together.

"Data centres are becoming part of the wider energy system, and this Declaration of Intent is an important step towards building that cooperation in a responsible and future-proof way."

The declaration establishes a series of working groups focused on areas including grid planning, connection agreements, flexibility services, energy generation, and energy storage.

Working groups to address future capacity requirements

Europe is expected to expand its data centre capacity significantly over the next five to seven years as AI infrastructure investment accelerates. The declaration is intended to support this growth while helping Member States meet wider energy and sustainability objectives.

Michael Winterson, Secretary General of the EUDCA, explains, "Europe’s AI, cloud, and digital ambitions will require significant new infrastructure capacity over the coming years. Delivering that growth responsibly will depend on much closer coordination between the digital infrastructure and energy sectors.

"This Declaration of Intent shows our commitment to partner with energy providers, local authorities, and wider EU institutions to deliver on advanced technologies, energy, and sustainability ambitions."

The EUDCA says it will contribute technical and policy expertise to the working groups as discussions progress, supporting the development of future frameworks for cooperation between Europe's digital infrastructure and energy sectors.

Joe Peck - 4 June 2026

Data Centre Business News and Industry Trends

Data Centre Infrastructure News & Trends

Innovations in Data Center Power and Cooling Solutions

Insights into Data Centre Investment & Market Growth

Bergen Engines signs 750MW data centre deal

Bergen Engines, a Norwegian manufacturer of medium-speed gas and dual-fuel engines, has signed an agreement with Crusoe to provide up to 750MW of power generation capacity for AI data centre developments in the United States.

The agreement comprises a 438MW contract and a further 310MW letter of intent, supporting Crusoe's expanding portfolio of AI infrastructure projects.

Crusoe develops large-scale AI data centre campuses using a combination of grid power, natural gas generation, renewable energy, and battery storage. The company deploys both grid-connected and behind-the-meter power infrastructure to support the high energy demands of AI workloads.

John Adams, Senior Vice President of Power at Crusoe, says, "The pace of AI infrastructure development demands builders who treat power as a first-class AI infrastructure layer.

"Bergen’s gensets give us the reliable baseload power we need to energise large-scale campuses, deployable on our timeline. We’re building AI factories at record speed, and this agreement helps us maintain that pace."

Under the initial contract, Bergen Engines will supply 27 gas-powered generating sets rated at 12.5MWe and 20 units rated at 5MWe. Additional units are included within the letter of intent, with deliveries planned across multiple US locations through 2027.
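As a quick check on the figures quoted above, the unit counts and ratings for the initial contract can be summed and compared with the headline numbers. This is an illustrative sketch only: the variable names are hypothetical, and it assumes the 438MW and 750MW figures are rounded sums of nameplate ratings.

```python
# Unit counts and ratings as reported for the initial contract.
contract_units = [
    (27, 12.5),  # 27 generating sets rated at 12.5MWe each
    (20, 5.0),   # 20 generating sets rated at 5MWe each
]

# Sum of nameplate ratings for the initial contract.
contract_mw = sum(count * rating for count, rating in contract_units)

# Capacity covered by the letter of intent, as reported.
letter_of_intent_mw = 310.0

# Combined figure (assumes MWe and MW are directly comparable here).
total_mw = contract_mw + letter_of_intent_mw

print(f"Initial contract: {contract_mw:.1f}MWe")
print(f"Contract plus letter of intent: {total_mw:.1f}MW")
```

On these assumptions, the initial contract sums to 437.5MWe, in line with the 438MW quoted, and the combined total sits just under the 750MW headline figure.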

On-site generation supports growing AI power demand

The generators are intended to provide continuous baseload power for AI data centres operating around the clock.

The systems will incorporate alternators from Marelli Motori and dynamic power stabilisation technology from Piller Power Systems. According to the companies, the technology is designed to manage rapid fluctuations in electricity demand associated with computing-intensive workloads.

Dean Richards, CEO of Piller Power Systems, says, "AI workloads have a distinct power profile that demands purpose-built generation and stabilisation technology.

"SHIELD-X is designed to manage those dynamics, protecting the generation assets and maintaining stable plant operation while ensuring consistent power quality for the data centre."

As AI infrastructure capacity expands, developers are increasingly turning to on-site and behind-the-meter power generation where grid connections are unavailable or unable to support required capacity within project timescales.

Theo Lorentzos, Vice President of Sales for Bergen Engines Americas, notes, "The pace of AI infrastructure development is unlike anything the power generation industry has seen before.

"In this market, access to power determines how fast you can scale. Crusoe’s model is built around speed and stable power, and our solution is designed to deliver both."

The agreement forms part of a wider trend towards dedicated power infrastructure for AI data centres, enabling developers to accelerate deployments while reducing reliance on traditional utility connection timelines.

Joe Peck - 3 June 2026

Data Centre Infrastructure News & Trends

Enterprise Network Infrastructure: Design, Performance & Security

News

LINX offers 15 months free at NoVA

The London Internet Exchange (LINX), an internet exchange point (IXP) operator, has launched a new initiative offering 15 months of no-charge port access and peering services at its LINX NoVA internet exchange in Ashburn, Virginia, USA.

Available from 1 June 2026, the offer applies to both existing members upgrading services and organisations joining LINX for the first time. Participants signing up to an 18-month service term will receive the first 15 months without charge.

LINX says the initiative has been introduced to help network operators manage increasing traffic demands and cost pressures while expanding their interconnection capabilities.

Established in 2014, LINX NoVA operates within Ashburn's 'Data Center Alley', one of the world's largest internet interconnection markets. The exchange spans five data centre campuses operated by Equinix, Digital Realty, Iron Mountain, CoreSite, and QTS.

More than 50 networks are connected to the platform, including content delivery networks, internet service providers, and cloud operators.

Jennifer Holmes, CEO of LINX, says, "We recognise that network operators are managing a complex environment right now, from capacity planning to cost control. As a member-owned organisation, our role is to listen carefully to the feedback from our membership and monitor trends in the industry, acting where we can.

"This initiative is about supporting our community in a practical way - creating space for networks to plan, grow, and adapt without immediate pressure."

Ashburn exchange continues to expand network ecosystem

LINX NoVA operates as a carrier-neutral internet exchange and allows participants to establish peering relationships through a single connection across its multi-site infrastructure.

The organisation says the new programme is intended to encourage greater traffic localisation, increased peering activity, and further interconnection growth within the region.

Jennifer continues, "We want to remind our members why LINX has remained a global leader in interconnection for over 30 years.

"The difference is in the engineering discipline, the resilience of the platform, and the depth of operational support we provide. Not all IXPs are built the same - and when networks rely on interconnection for critical traffic paths, there’s very little margin for error.

"Packet loss, instability, or downtime can have a direct and immediate impact on revenue and customer experience. At LINX, we’ve built our reputation on removing that risk, delivering a level of reliability and support that our members can depend on without question."

The exchange is supported by a fully redundant architecture, a 24/7 Network Operations Centre, and a distributed platform spanning multiple data centre locations.

LINX operates as a member-owned organisation and says revenue is reinvested into the development of its infrastructure and services.

Joe Peck - 2 June 2026

Data Centre Infrastructure News & Trends

Innovations in Data Center Power and Cooling Solutions

Liquid Cooling Technologies Driving Data Centre Efficiency

Products

Schneider Electric unveils Uniflair XCA chillers

Global energy technology company Schneider Electric has introduced the Uniflair XCA range of air-cooled and free-cooling chillers, designed for high-density, liquid-cooled data centres supporting AI workloads.

The new portfolio comprises the Uniflair XCAC air-cooled series and the Uniflair XCAF free-cooling series. Both incorporate oil-free centrifugal compressors with magnetic bearing technology and variable-speed drives to support operation across varying thermal loads and environmental conditions.

The chillers are available in six sizes, ranging from 1,200kW to 2,500kW, and utilise low global warming potential (GWP) refrigerants. Schneider Electric says the systems are designed to support elevated water temperatures commonly associated with liquid cooling deployments in AI data centres.

Andrew Bradner, Senior Vice President, Cooling Business at Schneider Electric, notes, "Energy efficiency, adaptability, and reliability are essential components of liquid cooling systems for AI-optimised data centres, and we’ve designed the Uniflair XCA line with these most important design features at the forefront.

"With adaptable water operating temperatures and versatile deployment options, the XCA line features a system-level approach that gives operators scalability, enhanced performance, and long-term peace of mind as data centre complexity continues to rise."

Cooling infrastructure adapts to rising AI power densities

As AI applications, GPU clusters, and liquid cooling deployments increase data centre power densities, cooling infrastructure is becoming an increasingly important factor in facility efficiency and reliability.

The Uniflair XCA platform incorporates oil-free magnetic bearing centrifugal compressors, which remove the need for lubrication systems and are intended to reduce maintenance requirements and mechanical losses.

The chillers also feature a spray evaporator combined with V-shaped microchannel coils, designed to improve heat exchange performance while reducing refrigerant volume and material usage.

For free-cooling deployments, the XCAF models support water outlet temperatures of up to 33°C and are designed to operate in ambient temperatures ranging from -20°C to 52°C. Schneider Electric states that, in suitable climates, the free-cooling configuration can reduce energy consumption compared with mechanical cooling systems by extending free-cooling operating periods.

The range can also be configured with a variety of electrical, hydraulic, acoustic, and performance options to suit different deployment requirements.

Additionally, a quick restart capability is included, which reportedly enables systems to return to full operating capacity within three minutes of a power outage.

New control features target operational efficiency

The XCA range also introduces new firmware and control functions designed to optimise cooling performance.

These include variable-speed pump algorithms supporting constant flow, constant temperature differential, and constant head pressure operation, alongside advanced fan control modes that can be adjusted according to temperature, load conditions, or scheduled operating periods.
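To illustrate what a constant temperature differential mode implies for pump speed, the standard heat balance Q = ṁ · c_p · ΔT can be rearranged to give the water flow needed for a given cooling load. The sketch below is not Schneider Electric's algorithm: the function name, the 10K differential, and the use of plain-water properties are all assumptions made for illustration.

```python
# Heat balance for a chilled-water loop: Q = m_dot * c_p * delta_T.
WATER_CP_KJ_PER_KG_K = 4.186  # specific heat of plain water (assumed; glycol mixes differ)


def required_flow_kg_s(load_kw: float, delta_t_k: float) -> float:
    """Mass flow needed to carry load_kw at a fixed temperature differential."""
    if delta_t_k <= 0:
        raise ValueError("delta_t_k must be positive")
    return load_kw / (WATER_CP_KJ_PER_KG_K * delta_t_k)


ASSUMED_DELTA_T_K = 10.0  # illustrative setpoint, not a manufacturer figure

# Smallest and largest chiller sizes quoted for the range.
for load_kw in (1200.0, 2500.0):
    flow = required_flow_kg_s(load_kw, ASSUMED_DELTA_T_K)
    print(f"{load_kw:.0f}kW at {ASSUMED_DELTA_T_K:.0f}K differential: {flow:.1f}kg/s")
```

With the differential held constant, the required flow scales linearly with load, which is why a variable-speed pump can slow down as the thermal load falls.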

Additional monitoring capabilities include energy metering and real-time water flow measurement to provide greater visibility into system performance.

According to Schneider Electric, these features are designed to reduce compressor cycling and improve long-term operational stability.

The first Uniflair XCA chiller units are scheduled to begin shipping globally in June 2026.

Joe Peck - 2 June 2026

Data Centre Infrastructure News & Trends

Innovations in Data Center Power and Cooling Solutions

Products

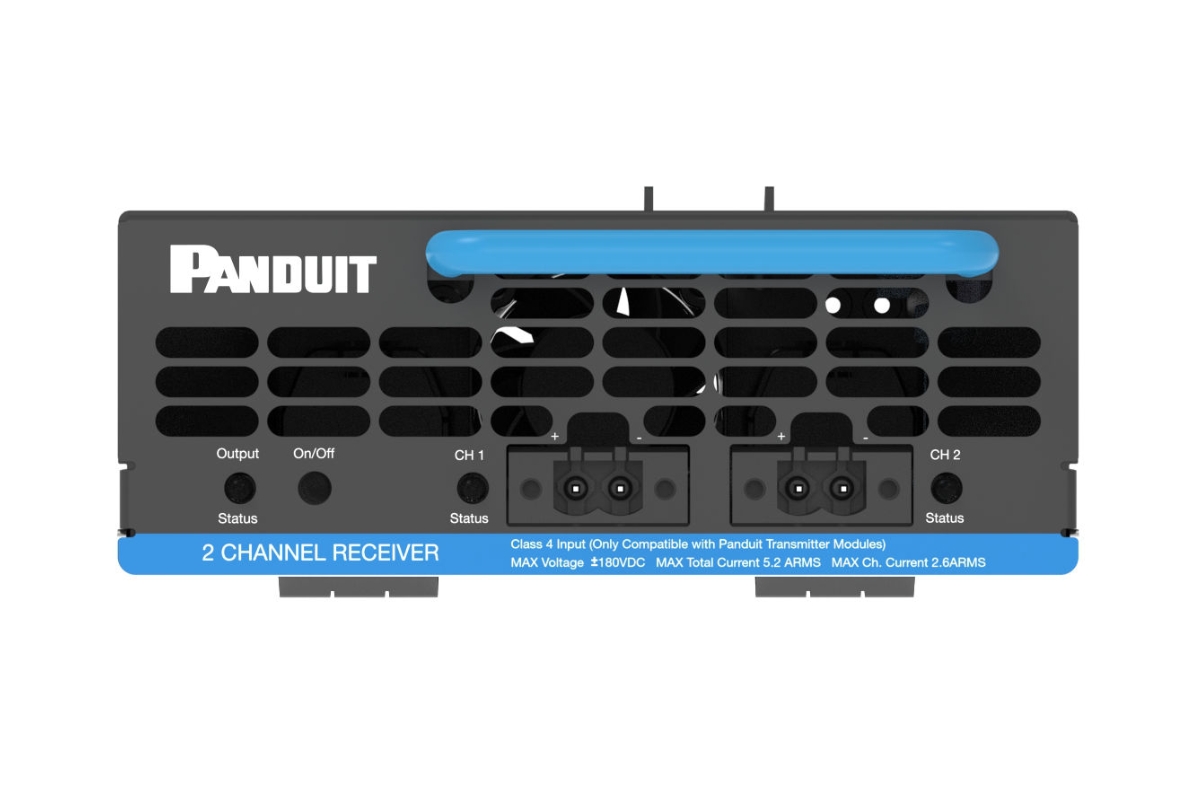

Panduit expands fault managed power portfolio

Panduit, a manufacturer of electrical and network infrastructure hardware, has launched the second generation of its Fault Managed Power System (FMPS), introducing higher power density and additional deployment options for enterprise environments.

Designed for centralised power distribution, the FMPS Gen 2 platform is intended for use across campuses, warehouses, and large distributed facilities. The system uses Class 4 fault managed power technology, which allows power to be delivered over longer distances using low-voltage installation methods.

According to Panduit, the platform is UL 1400 listed and SIL 3 rated, enabling organisations to distribute power while reducing electrical hazards and simplifying installation requirements.

The company also states that the system uses less copper than traditional power distribution methods and remains backward compatible with existing FMPS deployments, allowing infrastructure upgrades without replacing existing installations.

A key feature of the platform is the consolidation of backup power systems. By centralising UPS infrastructure rather than deploying units within individual intermediate distribution frames (IDFs), organisations can reduce equipment requirements, maintenance demands, and space utilisation.

New hardware targets enterprise and edge deployments

The FMPS Gen 2 portfolio includes a new 2kW system comprising a 1kW transmitter, a 2kW power supply, and a 2kW receiver. The range also includes a 600W single-channel receiver designed for applications such as lighting and security systems.

Additional updates include higher-density power delivery within the same footprint, expanded receiver options, and support for both PoE++ and DC-powered devices.

The platform is designed to support a range of applications including enterprise networking equipment, security and surveillance systems, wireless and in-building cellular infrastructure, lighting, and smart building technologies.

Mahmoud Ibrahim, Senior Business Development Manager at Panduit Ventures, says, "FMPS Gen 2 reinforces our commitment to making enterprise power safer, simpler, and more efficient.

"By increasing power density and enabling true UPS consolidation, customers can place power where it’s needed, remove complexity from IDFs, and confidently support the growing demands of modern networks - all without introducing new risk."

The platform also incorporates monitoring and management capabilities intended to provide centralised visibility of connected infrastructure and support future expansion.

Tom Kelly, Chief Technology Officer at Panduit, explains, "FMPS is engineered and designed by Panduit as a complete power platform, integrating power, cabling, and physical infrastructure into a single, coordinated solution.

"Drawing on the expertise that developed the first generation of UL-listed Class 4 power distribution products, Panduit has engineered a second-generation system that aligns with where the market is going while also meeting requests from customers and partners in the space.

"We’re excited to see the market transformation taking shape, as Class 4 power distribution adoption grows."

Joe Peck - 2 June 2026

Data Centre Build News & Insights

Data Centre Infrastructure News & Trends

Innovations in Data Center Power and Cooling Solutions

Renewables and Energy: Infrastructure Builds Driving Sustainable Power

VIRTUS installs super-grid transformers at Berlin campus

VIRTUS Data Centres, a UK data centre owner-operator and part of ST Telemedia Global Data Centres (STT GDC), has completed the installation of two 185MVA super-grid transformers at its Wustermark campus in Berlin/Brandenburg, Germany.

According to the company, the transformers are among the largest deployed at a European data centre and represent a key milestone in the development of the site.

The Wustermark campus is expected to become the first data centre campus in the Berlin/Brandenburg region to connect directly to a 380kV transmission network. VIRTUS says this will give customers the option of operating without diesel generators while maintaining access to conventional backup generation where required.

The transformers form part of the campus's initial 300MW capacity, with power supplied through a dedicated 500MW substation and dual direct connections to the 50Hertz 380kV network.

VIRTUS says the integration with the 50Hertz Wustermark substation and the high-voltage transmission connections are designed to provide a resilient and stable power architecture for large-scale data centre operations.

High-voltage design targets efficiency and resilience

The company says the site has been designed to support both traditional generator-backed operations and a generator-free operating model.

As with other VIRTUS facilities, the campus will operate using 100% certified renewable electricity. The site is also located close to regional renewable energy resources, including onshore wind generation.

According to VIRTUS, the higher-voltage transformer design provides several operational benefits, including improved electrical efficiency, reduced transmission losses, increased system stability, and enhanced resilience for high-density computing environments.

The company adds that the approach may also help reduce system usage charges and long-term energy costs.

Mike Golding, SVP of Construction at VIRTUS Data Centres, says, “Delivering the Wustermark Campus has been one of the most ambitious engineering programmes VIRTUS has undertaken to date.

“From the 380kV connections to the deployment of these super-grid transformers, every element has been designed to deliver levels of resilience and scalability that have not previously been available in this region.

“This campus represents a new generation of infrastructure - one that supports AI-scale growth, reduces reliance on generators, and aligns with the future of renewable energy.”

Joe Peck - 1 June 2026

Data Centre Infrastructure News & Trends

Enterprise Network Infrastructure: Design, Performance & Security

Sponsored

Digital infrastructure boosts rural development in Guizhou

The province of Guizhou now ranks among China's leading provinces for base station numbers and 5G coverage. Digital infrastructure such as base stations forms a strong economic foundation and stimulates rural development. Under the guidance of national strategies such as Digital China and Broadband China, Guizhou continues to expand its communications networks.

Robust 4G, 5G, and 5G-A networks have enabled mountainous villages and ancient towns in Guizhou to overcome geographical and communications barriers. These upgrades have made life more convenient for local residents, stimulated rural economic growth, and enabled local intangible cultural heritage to reach a wider audience, ensuring shared benefits from digitalisation for all.

Guizhou is one of China's first national big data pilot regions. To date, China Mobile has built nearly 200,000 base stations in the province, including more than 70,000 5G base stations. Now all of Guizhou's administrative villages and high-speed rail lines are covered by 5G. Special coverage assurance is provided for key urban and rural areas, with gigabit broadband available in all townships.

Connecting canyons with 5G-A to stimulate growth

Economic and telecom development along the Huajiang Grand Canyon, which looks like a crack in the Earth, has long been constrained by the region's mountainous terrain. Now, the Huajiang Grand Canyon Bridge has eliminated physical barriers, and the integrated communications networks built by Chinese multinational technology company Huawei and China Mobile provide a digital bridge for these villages.

Working on the steep cliffs of the Huajiang Grand Canyon, Huawei and China Mobile used drones to deploy four 4G sites, four 5G sites, and 39 cells on the bridge and in its surrounding areas, providing 4G, 5G, and 5G-A connectivity for around 11,000 users. Field tests in hotspot areas such as the Yundu Service Area have shown average 5G-A download speeds of up to 1,500Mbps.

Pan Cong, Network Engineer at China Mobile Guizhou, explains, "For Guizhou's complex mountainous terrain, we used drone-assisted lifting and installation to solve the challenge of building networks over cliffs and canyons where traditional construction methods cannot be applied."

Improved transport and network infrastructure is stimulating and transforming the development of villages in the region. Residents of Xiaohuajiang Village now use high-speed networks for e-commerce and homestay businesses. In April 2026, the village had a total of 19 homestays, and its homestay revenue and tourist numbers had increased three- to fourfold year-on-year.

Homestay owner Lin Guoquan says, "Now, more tourists can find out about our village through short videos and livestreaming. And many young people who used to work in other places have returned home to seek careers in the village."

Digital technology helps conserve and promote the cultural heritage of ancient towns

Digital enablement is happening in Guizhou's ancient towns as well. In Tianlong Tunpu Ancient Town, which has a history of more than 600 years, network construction was very difficult in the past due to the town's narrow streets and densely packed stone buildings. Today, Huawei and China Mobile have used innovative solutions to build nine 4G base stations, eight 5G base stations, and one 5G-A base station to provide seamless network coverage in the town's core areas.

With improved connectivity, residents in the town have started selling local products like chili peppers and batik items through livestreaming. This has resulted in a 15% increase in agricultural product sales and a 9% increase in resident income. Dixi Opera, a form of intangible cultural heritage, can now reach a wider audience through livestreaming. Relevant livestreams have already garnered more than 100,000 views. These developments have boosted local cultural tourism. From January to April 2026, the number of visitors to Tianlong Tunpu Ancient Town increased by two to three times year-on-year.

Dixi Opera performer Zheng Ruhong comments, "Now, many people across the country know about Dixi Opera from livestreaming. Many young people who used to work in other places have returned home to learn this art. This ensures that this intangible cultural heritage can be preserved and passed down."

Working together to promote digital inclusion and bridge the development divide

On 29 May, China Mobile and Huawei jointly hosted the TECH Cares Digital and Intelligent Guizhou Roundtable Forum, which brought together representatives from carriers, enterprises, and international organisations. The attendees discussed how digital infrastructure enables rural development, intangible cultural heritage preservation, and sustainable development in the region, and explored new paths for inclusive digital development.

Yang Mengmeng from China Mobile Guizhou stated that China Mobile Guizhou set up special teams to overcome the challenges of building networks in mountainous areas to serve local residents in Guizhou. The company has led the construction of a 'gigabit Guizhou', providing 5G coverage to all administrative villages and dual gigabit connections to all townships.

Aleksei Savrasov from the United Nations Industrial Development Organization (UNIDO) says, "For a remote enterprise, a signal bar is the difference between a local stall and a global market. Where the signal reaches, the economy follows."

Huawei's Zhou Jianguo adds, "While physical bridges shorten distances, digital connections bridge digital gaps. Huawei will continue advancing technological innovation and open collaboration to provide remote areas with equal access to the digital world, so that they can share in the dividends of the digital era."

By 2025, Huawei had worked with partners to provide digital connectivity for 170 million people in rural and remote areas in more than 80 countries and regions. Moving forwards, Huawei and China Mobile say they will continue to innovate in rural network technologies and provide digital skills training to help more regions bridge geographical and digital divides. This will allow more people to benefit from the digital and intelligent world.

Joe Peck - 29 May 2026

Data Centre Build News & Insights

Data Centre Infrastructure News & Trends

Enterprise Network Infrastructure: Design, Performance & Security

Quantum Computing: Infrastructure Builds, Deployment & Innovation

euNetworks launches quantum-safe connectivity service

euNetworks, a European bandwidth infrastructure company, has launched a new quantum-safe private connectivity service developed in collaboration with Adtran, a US manufacturer of networking and communications equipment.

Called Quantum Shield, the service is designed to provide encrypted data centre connectivity for organisations with high security and compliance requirements across Europe.

According to euNetworks, the platform combines dedicated optical infrastructure, real-time fibre monitoring, and quantum-resistant encryption technologies to protect sensitive data in transit.

The company says the launch comes as businesses prepare for evolving cybersecurity regulations and post-quantum security requirements, including the EU’s post-quantum cryptography roadmap, DORA, and NIS2.

Quantum Shield will be offered as an additional security layer for euNetworks’ Private Connect MOFN service, which provides managed private network infrastructure for enterprise customers.

The new platform uses FSP 3000 technology from Adtran, alongside post-quantum cryptography aligned with standards from NIST.

According to the companies, all traffic is encrypted automatically at Layer 1 across dedicated fibre infrastructure.

Optical monitoring and encryption combined for data protection

The deployment also incorporates Adtran’s ALM fibre monitoring technology, which is designed to detect and locate fibre-tapping events in real time.

euNetworks says the combined system is intended to provide low-latency, high-throughput connectivity while giving customers greater visibility into how data is secured across the optical layer.

Marisa Trisolino, CEO of euNetworks, says, “We’re committed to providing customers with connectivity that meets increasingly stringent security requirements and chose to partner with Adtran because they bring deep expertise in optical networking and a practical understanding of how private infrastructure is built and operated at scale.”

Christoph Glingener, CTO of Adtran, adds, “By combining quantum-resilient encryption with real-time fibre monitoring, we’re helping euNetworks safeguard critical traffic without compromising performance or scalability.”

The companies say the deployment reflects increasing demand for secure optical networking infrastructure as enterprises prepare for future cybersecurity challenges linked to quantum computing.

Joe Peck - 29 May 2026

Data Centre Infrastructure News & Trends

Innovations in Data Center Power and Cooling Solutions

Liquid Cooling Technologies Driving Data Centre Efficiency

ChemTreat joins Dow coolant network for data centres

ChemTreat, a US provider of industrial water treatment chemicals and cooling system services, has joined materials science company Dow’s Coolant Care Network as a strategic US service provider for AI and liquid-cooled data centre environments.

Under the agreement, ChemTreat becomes Dow’s only preferred service provider in Virginia, USA, and will provide national support for the company’s coolant management programme.

According to Dow, the Coolant Care Network combines coolant supply, fluid testing, data analysis, and field support within a single framework for data centre operators.

ChemTreat will provide on-site services including fluid sampling, mitigation, and coolant optimisation, working alongside Dow-qualified laboratories and technical specialists.

The companies say the collaboration is intended to support data centres deploying liquid cooling systems for AI and high-density compute workloads.

Ashour Khamis, President of ChemTreat, notes, “The data centre industry is under enormous pressure to scale liquid cooling environments to meet AI-driven workload demands.

“Pairing ChemTreat’s proven service-focused approach with Dow’s decades of thermal fluid innovation and reliable global supply chain allows us to help customers quickly deploy mission-critical systems and maintain reliable cooling lifecycle performance.”

Liquid cooling demand grows alongside AI workloads

ChemTreat says its data centre offering includes water treatment technologies, monitoring systems, specialist chemistries, and support for direct-to-chip cooling loops and facility cooling infrastructure.

Through the partnership, the company will also provide access to Dow’s DOWFROST LC and DOWFROST HD heat transfer fluids, alongside certified coolant testing services and technical support.

Chuck Carn, Data Center Growth Platform Director at Dow, says, “This collaboration reflects Dow’s clear understanding of the operational complexity data centre operators face as cooling systems become more critical to performance and uptime.

“Collaborating with experienced service providers like ChemTreat, who uphold rigorous technical and service standards, is key to helping customers run their operations smoothly and with confidence.”

The companies say the partnership is designed to address increasing cooling requirements as AI infrastructure deployment continues to expand globally.

Joe Peck - 29 May 2026

Artificial Intelligence in Data Centre Operations

Data Centre Infrastructure News & Trends

Data Centre Operations: Optimising Infrastructure for Performance and Reliability

Innovations in Data Center Power and Cooling Solutions

MPs warn grid failures could cost Britain the AI race

The All-Party Parliamentary Group (APPG) for Data Centres has published its Insights Paper, summarising findings from its inaugural 'Call for Evidence'.

The APPG is a cross-party group of UK MPs and Peers that fosters parliamentary understanding of data centre development; examines sector challenges, particularly planning, energy, resilience, and sustainability; and makes evidence-based policy recommendations to support UK digital infrastructure and economic growth.

Notably, respondents to its Call for Evidence signalled a substantial appetite to invest in UK data centre infrastructure.

Operators including Ark Data Centres, Nebius, Pure DC, and VIRTUS collectively identified £11–12 billion in specific investment plans, while Microsoft's submission committed a further £22 billion to UK AI infrastructure.

Despite this intent, respondents consistently described a set of interconnected structural barriers constraining the pace and location of development:

• Grid access and energy supply ranked as the sector's top priority, with 52% of respondents placing it first.

• Planning was placed in the top three by nearly four in five respondents (79%), while energy costs, sustainability, water use, and skills also featured prominently across submissions.

The APPG is particularly keen to hear further evidence from community representatives, local authorities, and organisations with experience of data centre development outside London and the South East.

Parliamentary comments

Chris Curtis MP, Chair of the Data Centres APPG, notes, "This Call for Evidence shows that while significant investment is ready to support the UK's expanding AI and digital economy, it remains constrained by grid access, energy costs, and planning inconsistencies.

"The APPG will use this evidence over the coming year to work constructively with stakeholders and the Government to ensure that there is a well-informed view on how data centre infrastructure drives our national economic growth."

Alison Griffiths MP, Vice-Chair of the Data Centres APPG, adds, "The submissions to this Call for Evidence make clear that the barriers to data centre development are not insurmountable.

"They highlight gaps in the consistent application of planning policy by local authorities, as well as the need to ensure electricity cost competitiveness is felt across every part of the country.

"It is clear there are practical steps the Government can take to strengthen the UK's leadership in digital infrastructure [and] I look forward to exploring these issues further in our upcoming evidence sessions."

David Reed MP, Officer of the Data Centres APPG, highlights, "The submissions from academic institutions such as Exeter, Durham, and Oxford remind us that research computing infrastructure is increasingly cost-prohibitive for academia. This gap risks undermining the UK's long-term international scientific competitiveness.

"As the APPG deepens its work, I look forward to hearing from a broad range of stakeholders in this vital debate and developing practical solutions that support a thriving data centre ecosystem."

The Rt Hon. the Lord (Philip) Hunt of King’s Heath OBE, Officer of the Data Centres APPG, concludes, "Sustainability is not a secondary consideration for this sector; it is central to its long-term viability and its licence to operate in communities across the UK.

"The evidence on waste heat recovery is particularly striking: the UK is currently capturing just 3–5% of the heat generated by data centres, against a backdrop of a national housing and energy challenge that demands innovative solutions. The APPG will be pressing hard on what policy levers can unlock this opportunity."

Joe Peck - 28 May 2026

Head office & Accounts:

Suite 14, 6-8 Revenge Road, Lordswood

Kent ME5 8UD

T: +44 (0)1634 673163

F: +44 (0)1634 673173