Cooling

Data Centres

News

Iceotope Technologies appoints Simon Jesenko as CFO

Iceotope Technologies Ltd has announced the appointment of Simon Jesenko as Chief Financial Officer. Recognised for his track record in successfully scaling fast-growing international SMEs, Simon joins the company from predictive maintenance specialist Senseye, where he oversaw the company’s acquisition and successful integration into Siemens.

While at Senseye, Simon fundamentally transformed the organisation’s finance function, focusing on SaaS-specific financial reporting and forecasting, changing pricing strategies to align with customer needs and market maturity, as well as setting up the structure required for rapid international expansion and leading various funding activities on the way towards an eventual exit.

David Craig, CEO, Iceotope Technologies, says, “Simon is an accomplished CFO with an impressive track record of preparing the ground for corporate growth. His appointment is most welcome. He joins us at a time when the market is turning to liquid cooling to solve a wide range of challenges. These challenges include increasing processor output and efficiency, delivering greater data centre space optimisation and reducing energy inefficiencies associated with air-cooling to achieve greater data centre sustainability. Simon is a dynamic and well-respected CFO, with a clear understanding of how to optimise corporate structures and empower improved financial performance company-wide through the democratisation of fiscal data.”

Simon says, “We find ourselves at a pivotal moment in the market, where the pull towards liquid cooling solutions is accelerating as a result of two key factors: one, sustainability initiatives and regulation imposed by governments and two, increase in computing power to accommodate processing-intensive applications, such as AI and advanced analytics. Iceotope’s precision liquid cooling technology is at the forefront of existing liquid cooling technologies and therefore places the company in a unique position to seize this huge opportunity.

“My focus is going to be on delivering growth and financial performance that will increase shareholder value in the years to come as well as building a robust business structure to support this exponential growth along the way.”

Isha Jain - 13 June 2023

Cooling

Data Centres

News

Airedale by Modine expands its service offerings in the US

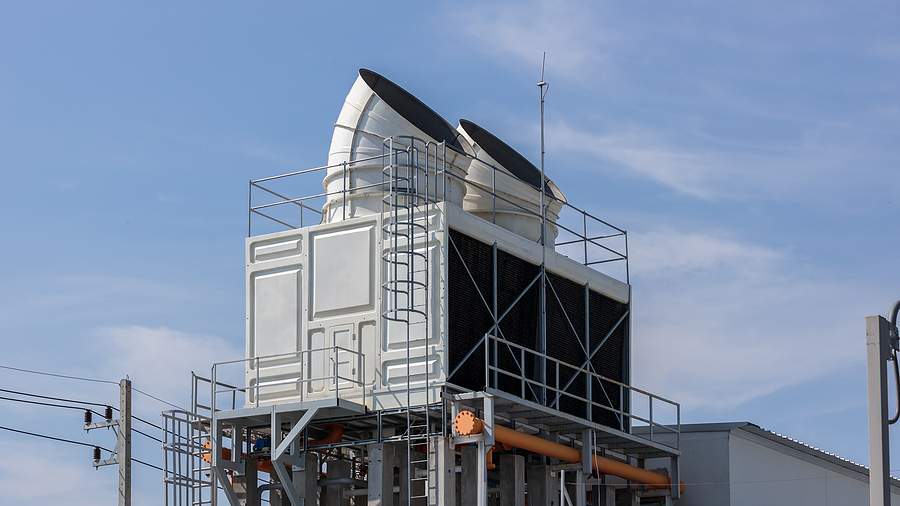

A five-megawatt testing laboratory has recently been commissioned at the Modine Rockbridge facility in Virginia, further expanding the services that Airedale by Modine can offer its data centre customers and helping to meet increasing demand from the data centre industry for validated and sustainable cooling solutions.

Airedale is a trusted brand of Modine and provides complete cooling solutions to industries where removing heat is mission critical. The Rockbridge facility opened in 2022 to manufacture chillers to meet the growing demand from US data centre customers.

The new lab can test a complete range of air conditioning equipment, accommodating air-cooled chillers up to 2.1MW and water-cooled chillers up to 5MW. Crucially for data centre applications, the ambient temperature inside the chamber can be reduced to prove chiller free-cooling performance.

Free cooling is the process of using external ambient temperature to reject heat, rather than using the refrigeration process. If used within an optimised system, it can help a data centre significantly reduce its energy consumption and carbon footprint. The lab can also facilitate quality witness tests for customers to validate chiller performance in person.
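As a rough illustration of the free cooling principle described above, the Python sketch below picks an operating mode from the outdoor temperature. The function name, the supply setpoint and the 5°C heat-exchanger approach are all hypothetical assumptions made for the example, not Airedale figures or control logic.

```python
def cooling_mode(ambient_c: float, supply_setpoint_c: float, approach_c: float = 5.0) -> str:
    """Pick a chiller operating mode from the outdoor (ambient) temperature.

    All thresholds are illustrative assumptions, not manufacturer data.
    """
    # Free cooling: ambient air, allowing for the heat-exchanger approach,
    # is cold enough to reach the supply setpoint without compressors.
    if ambient_c + approach_c <= supply_setpoint_c:
        return "free"
    # Otherwise the refrigeration process has to do the work.
    return "mechanical"


print(cooling_mode(ambient_c=5.0, supply_setpoint_c=20.0))
print(cooling_mode(ambient_c=30.0, supply_setpoint_c=20.0))
```

Real chillers typically also blend the two modes over a band of ambient temperatures; that intermediate case is left out here to keep the sketch short.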

In addition, the first US-based service team has been launched to provide ongoing support to data centre customers in the field. The team offers coverage for spare parts, planned maintenance and emergency response.

The facility is also working with colleges in Northern Virginia to recruit and train service engineers, either as new graduates who will receive fast-tracked training or through apprenticeships. Apprentices will have a mix of college classes and on-site training, after which they will graduate with an associate’s degree in engineering.

Rob Bedard, General Manager of Modine’s North America data centre business, says, “Our ongoing investment in our people in the US and the launch of the service team and apprenticeship program, along with the opening of our 5MW chiller test centre allows us to better serve our customers and cement our continuing commitment to the US data centre industry.”

Isha Jain - 12 June 2023

Cooling

GRC introduces HashRaQ MAX to enhance crypto mining

GRC (Green Revolution Cooling) has announced its newest offering for blockchain applications - HashRaQ MAX. The HashRaQ MAX is a next-gen, productivity-driven, immersion cooling solution that tackles the extreme heat loads generated by crypto mining.

The precisely engineered system features a high-performance coolant distribution unit (CDU) that supports high-density configuration and ensures maximum mining capability with minimal infrastructure costs, allowing for installation in nearly any location with access to power and water. The unit’s moulded design provides even coolant distribution, so each miner operates at peak capability.

HashRaQ MAX was developed utilising the experience and customer feedback GRC has accumulated over its 14 years of designing, building, and deploying immersion cooling systems specifically for the mining industry. The unit is capable of cooling 288kW with warm water when outfitted with 48 Bitmain S19 miners. Its space-saving and all-inclusive design consists of racks, frame, power distribution units (PDUs), coolant distribution unit (CDU), and monitoring, ensuring users can capitalise on the benefits of a comprehensive, validated, and cost-effective cooling solution.
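The quoted capacity implies a per-miner heat load that is easy to check. The short Python sketch below does the arithmetic, assuming the 288kW is spread evenly across all 48 miners; the function name is illustrative only.

```python
TOTAL_COOLING_KW = 288  # cooling capacity quoted in the article
MINER_COUNT = 48        # Bitmain S19 miners quoted in the article


def heat_load_per_miner_kw(total_kw: float, miners: int) -> float:
    """Average heat load per miner, assuming an even split (an assumption)."""
    if miners <= 0:
        raise ValueError("miner count must be positive")
    return total_kw / miners


per_miner_kw = heat_load_per_miner_kw(TOTAL_COOLING_KW, MINER_COUNT)
print(f"{per_miner_kw:.1f} kW per miner")  # 6.0 kW per miner
```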

It’s a well-established fact that cryptocurrency mining utilises a significant amount of energy, with Bitcoin alone consuming a reported 127TWh a year. In the United States, mining operations are estimated to emit up to 50 million tons of CO2 annually. HashRaQ MAX is designed to reduce the carbon footprint of mining operations by minimising energy use while also enabling miners to optimise profitability. Additionally, the system is manufactured utilising post-industrial, recycled materials and is flat-pack shipped to further reduce costs and carbon emissions. The unit is also fully recyclable at the end of its life.

“We are proud to present digital asset mining operators with a complete and reliable cooling solution that eliminates the time and complexity of piecing together an in-house system - and doesn’t break the bank,” says Peter Poulin, CEO of GRC. “We’ve been developing systems specifically for the blockchain industry since our inception in 2009, and our Hash family of products has been proven in installations around the world. It’s exciting to release this next generation HashRaQ MAX immersion cooling system in continuing support of cryptocurrency miners during this next era in digital asset mining.”

Beatrice - 2 June 2023

Cooling

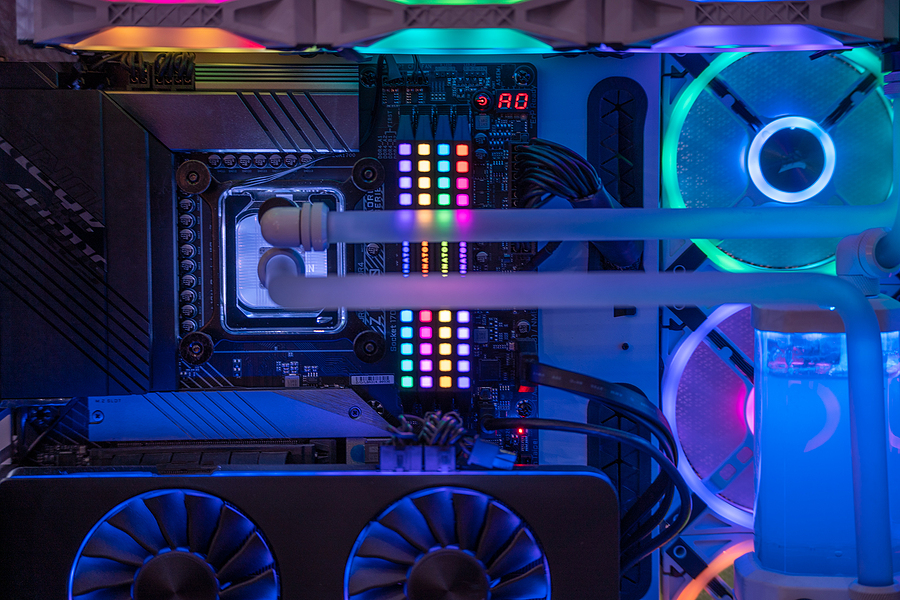

Supermicro launches NVIDIA HGX H100 servers with liquid cooling

Supermicro continues to expand its data centre offering with liquid-cooled NVIDIA HGX H100 rack scale solutions. Advanced liquid cooling technologies reduce the lead times for a complete installation, increase performance, and result in lower operating expenses while significantly reducing the PUE of data centres. Power savings for a data centre using Supermicro liquid cooling solutions are estimated at 40% compared to an air-cooled data centre. In addition, a reduction of up to 86% in direct cooling costs compared to existing data centres may be realised.

“Supermicro continues to lead the industry supporting the demanding needs of AI workloads and modern data centres worldwide,” says Charles Liang, President and CEO of Supermicro. “Our innovative GPU servers that use our liquid cooling technology significantly lower the power requirements of data centres. With the amount of power required to enable today's rapidly evolving large scale AI models, optimising TCO and the Total Cost to Environment (TCE) is crucial to data centre operators. We have proven expertise in designing and building entire racks of high-performance servers. These GPU systems are designed from the ground up for rack scale integration with liquid cooling to provide superior performance, efficiency, and ease of deployments, allowing us to meet our customers' requirements with a short lead time.”

AI-optimised racks with the latest Supermicro product families, including the Intel and AMD server product lines, can be quickly delivered from standard engineering templates or easily customised based on the user's unique requirements. Supermicro continues to offer the industry's broadest product line with the highest-performing servers and storage systems to tackle complex compute-intensive projects. Rack scale integrated solutions give customers the confidence and ability to plug the racks in, connect to the network and become more productive sooner than managing the technology themselves.

The top-of-the-line liquid cooled GPU server contains dual Intel or AMD CPUs and four or eight interconnected NVIDIA HGX H100 Tensor Core GPUs. Using liquid cooling reduces the power consumption of data centres by up to 40%, resulting in lower operating costs. In addition, both systems significantly surpass the previous generation of NVIDIA HGX GPU equipped systems, providing up to 30 times the performance and efficiency on today's large transformer models, with faster GPU-GPU interconnect speed and PCIe 5.0 based networking and storage.

Supermicro's liquid cooling rack level solution includes a Coolant Distribution Unit (CDU) that provides up to 80kW of direct-to-chip (D2C) cooling for today's highest TDP CPUs and GPUs for a wide range of Supermicro servers. The redundant and hot-swappable power supply and liquid cooling pumps ensure that the servers will be continuously cooled, even with a power supply or pump failure. The leak-proof connectors give customers the added confidence of uninterrupted liquid cooling for all systems.

Rack scale design and integration has become a critical service for systems suppliers. As AI and HPC have become increasingly critical technologies within organisations, configurations from the server level to the entire data centre must be optimised and configured for maximum performance. The Supermicro system and rack scale experts work closely with customers to explore the requirements and have the knowledge and manufacturing abilities to deliver significant numbers of racks to customers worldwide.

Beatrice - 24 May 2023

Cooling

Data Centres

Carrier advances data centre sustainability with lifecycle solutions

Carrier is providing digital lifecycle solutions to support the unprecedented growth and criticality of data centres. More than 300 data centre owners and operators with over one million racks, spanning enterprise, colocation and edge markets, benefit from Carrier’s optimisation solutions across their portfolios.

“Data centre operators have made great strides in power usage effectiveness over the past 15 years,” says Michel Grabon, Data Centre Solutions Director, Carrier. “Continual technology advances with higher powered server processors present power consumption and cooling challenges requiring the specialised solutions that Carrier provides.”

Carrier’s range of smart and connected solutions delivers upstream data from the data centre ecosystem to cool, monitor, maintain, analyse and protect the facility, helping it to meet green building standards and sustainability goals and to comply with local greenhouse gas emission regulations. Carrier’s Nlyte DCIM tools share detailed information between the HVAC equipment, power systems and servers/workloads that run within data centres, providing unprecedented transparency and control of the infrastructure for improved uptime.

Carrier’s purpose-built solutions are integrated across its solutions portfolio with HVAC equipment, data centre infrastructure management (DCIM) tools and building management systems to help data centre operators use less power and improve operating costs and profitability for many years. Marquee projects around the world include:

• OneAsia’s data centre in Nantong Industrial Park. Carrier collaborated with the company to build its first data centre in China, equipped with a water-cooled chiller system. By optimising the energy efficiency of the entire cooling system, the high-efficiency chiller plant can reduce the annual electricity bill by approximately $180,000.

• China’s Zhejiang Cloud Computing Centre is an example of how Carrier’s AquaEdge centrifugal chillers and integrated controls provide the required stability, reliability and efficiency for 200,000 servers. The integrated controls help reduce operating expenses and allow facility managers to monitor performance remotely and manage preventative maintenance to keep the chillers running according to operational needs.

• Iron Mountain’s growing underground data centre, in a former Pennsylvania limestone mine, earned the industry’s top rating with the use of Carrier’s retrofit solution to control environmental heat and humidity. AquaEdge chillers with variable speed drive respond with efficient cooling, enabling the HVAC units to work under part- or full-load conditions.

Carrier’s Nlyte Asset Lifecycle Management and Capacity Planning software provides automation and efficiency to asset lifecycle management, capacity planning, audit and compliance tracking. It simplifies space and energy planning, easily connecting to an IT service management system and all types of business intelligence applications, including Carrier’s Abound cloud-based digital platform and BluEdge service platform to track and predict HVAC equipment health, enabling continuous operations.

Beatrice - 12 May 2023

Cooling

The liquid future of data centre cooling

By Markus Gerber, Senior Business Development Manager, nVent Schroff

Demand for data services is growing, and yet there has never been greater pressure to deliver those services as efficiently and cleanly as possible.

As every area of operation comes under greater scrutiny to meet these demands, one area in particular - cooling - has come into sharp focus. It is an area not only ripe for innovation, but where progress has been made that shows a way forward for a greener future.

The number of internet users worldwide has more than doubled since 2010. Furthermore, as technologies emerge that are predicted to be the foundation of future digital economies, demand for digital services will rise not only in volume, but also sophistication and distribution.

This level of development brings challenges for energy consumption, efficiency, and architecture. The IEA estimates that data centres are responsible for nearly 1% of energy-related greenhouse gas (GHG) emissions. While it acknowledges that since 2010, emissions have grown modestly despite rapidly growing demand, through energy efficiency improvements, renewable energy purchases by ICT companies and broader decarbonisation of electricity grids, it also warns that to align with the net zero by 2050 scenario, emissions must halve by 2030.

This is a significant technical challenge. Firstly, in the last several decades of ICT advancement, Moore’s Law has been an ever-present effect. It states that compute power would more or less double, with costs halving, every two years or so. As transistor densities become more difficult to increase as they get into the single nanometre scale, the CEO of NVIDIA has asserted that Moore’s Law is effectively dead. This means that in the short term, to meet demand, more equipment and infrastructure will have to be deployed, in greater density.

All changes will impact upon cooling infrastructure and cost

In this scenario of increasing demand, higher densities, larger deployments, and greater individual energy demand, cooling capacity must be ramped up too.

Air as a cooling medium was already reaching its limits, being, as it is, difficult to manage, imprecise, and somewhat chaotic. As rack systems become more demanding, often mixing both CPU and GPU-based equipment, individual rack demands are approaching or exceeding 30kW each. Air-based systems also tend to demand a high level of water consumption, for which the industry has also received criticism in the current environment.

Liquid cooling technologies have developed as a means to meet the demands of both volume and density needed for tomorrow’s data services. Liquid cooling takes many forms, but the three primary techniques currently are direct-to-chip, rear door heat exchangers, and immersion cooling.

Direct-to-chip (DtC) cooling is where a metal plate sits on the chip or component, and allows liquid to circulate within enclosed chambers carrying heat away. It is often used with specialist applications, such as high-performance computing (HPC) environments.

Rear door heat exchangers are close-coupled indirect systems that circulate liquid through embedded coils to remove server heat before exhausting into the room. They have the advantage of keeping the entire room at the inlet air temperature, making hot and cold aisle cabinet configurations and air containment designs redundant, as the exhaust air cools to inlet temperature and can recirculate back to the servers.

Immersion technology employs a dielectric fluid that submerges equipment and carries away heat from direct contact. This enables operators to immerse standard servers with certain minor modifications such as fan removal, as well as sealed spinning disk drives. Solid-state equipment generally does not require modification.

An advantage of the precision liquid cooling approach is that full immersion provides liquid thermal density, absorbing heat for several minutes after a power failure without the need for back-up pumps.

Cundall’s liquid cooling findings

According to a study by engineering consultant Cundall, liquid cooling technology outperforms conventional air cooling.

This is principally due to the higher operating temperature of the facility water system (FWS), compared to the cooling mediums used for air cooled solutions. In all air cooled cases, considerable energy and water are consumed to arrive at a supply air condition that falls within the required thermal envelope. The need for this is avoided with liquid cooling.

There were consistent benefits found, in terms of energy efficiency and consumption, water usage and space reduction, in multiple liquid cooling scenarios, from hybrid to full immersion, as well as OpEx and CapEx benefits.

In hyperscale, colocation and edge computing scenarios, Cundall found that the total cost of cooling IT equipment (ITE) per kW consumed was 13-21% lower with liquid cooling than in the base case of current air cooling technology.

In terms of emissions, Cundall states that PUE and TUE are lower for the liquid cooling options in all tested scenarios. Expressed in kg CO2 per kW of ITE power per year, the reduction ranged from more than 6% for colocation to almost 40% for edge computing scenarios.
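For context, PUE (power usage effectiveness) is the ratio of total facility energy to the energy delivered to the IT equipment, so a value closer to 1.0 means less overhead for cooling and power distribution. The Python sketch below computes it; the energy figures are made-up illustrations chosen for round numbers, not results from the Cundall study.

```python
def pue(total_facility_kwh: float, it_equipment_kwh: float) -> float:
    """Power usage effectiveness: total facility energy / IT equipment energy."""
    if it_equipment_kwh <= 0:
        raise ValueError("IT equipment energy must be positive")
    return total_facility_kwh / it_equipment_kwh


# Hypothetical figures for the same 1,000 kWh IT load (assumptions only).
air_cooled = pue(total_facility_kwh=1500, it_equipment_kwh=1000)
liquid_cooled = pue(total_facility_kwh=1200, it_equipment_kwh=1000)

print(f"Air-cooled PUE: {air_cooled:.2f}")
print(f"Liquid-cooled PUE: {liquid_cooled:.2f}")
```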

What does the future hold in terms of liquid cooling?

Combinations of liquid and air cooling techniques will be vital in providing a transition, especially for legacy instances, to the kind of efficiency and emission-conscious cooling needs of current and future facilities. Though immersion techniques offer the greatest effect, hybrid cooling offers an improvement over air alone, with OpEx, performance and management advantages.

Even as the data infrastructure industry institutes initiatives to better understand, manage and report sustainability efforts, more can be done to make every aspect of implementation and operation sustainable.

Developments in liquid cooling technologies are a step forward that will enable operators and service providers to meet demand, while ensuring that sustainability obligations can be met. Initially, hybrid solutions will help legacy operators make the transition to more efficient and effective systems, while more advanced technologies will ensure new facilities are more efficient, even as capacity is built out to meet rising demand.

By working collaboratively with the broad spectrum of vendors and service providers, cooling technology providers can ensure that requirements are met, enabling the digital economy to develop to the benefit of all, while contributing towards a liveable future.

Beatrice - 17 April 2023

Cooling

Data Centres

Product

Vertiv introduces new chilled water thermal wall

Vertiv has introduced the Vertiv Liebert CWA, a new generation of thermal management system for slab floor data centres. For decades, hyperscale and colocation providers have used raised floor environments to cool their IT equipment. Simplifying data centre design with slab floors enables the construction of new white space more efficiently and cost-effectively, but also introduces new cooling challenges. The Liebert CWA was designed to provide uniform air distribution to the larger surface area which comes with a slab floor application, while also allowing more space for rack installation and compute density. Developed in the United States, the Liebert CWA chilled water thermal wall cooling unit is available in 250kW, 350kW and 500kW capacities across EMEA, as well as the Americas.

Liebert CWA technology utilises integrated state-of-the-art controls to facilitate improved airflow management and provide an efficient solution for infrastructures facing the challenges of modern IT applications. The Liebert CWA can also be integrated with the data centre’s chilled water system to improve the operating conditions of the entire cooling network. Furthermore, the Liebert CWA is installed outside the IT space to allow more floor space in the data centre, increase accessibility for maintenance personnel, and also increase the security of the IT space itself.

“The launch of the Liebert CWA reinforces our mission to provide innovative, state-of-the-art technologies for our customers that allow them to optimise the design and operation of their data centres,” says Roberto Felisi, Senior Global Director, thermal core offering and EMEA business leader at Vertiv. “As the Liebert CWA can be quickly integrated with existing cooling systems, customers can leverage all the benefits of a slab floor layout, such as lower installation and maintenance costs, and a greater availability of white space.”

Air handling units have been used in the past to cool raised-floor data centres but there is now an opportunity in the market to drive more innovative thermal management solutions for slab floor data centres. The Vertiv Liebert CWA provides Vertiv’s customers with a standardised thermal wall built specifically for data centre applications, therefore minimising installation costs of custom-made solutions on site. The product's layout is engineered to maximise the cooling density and to meet the requirements for cooling continuity set by the most trusted and established certification authorities for data centre design and operation.

Vertiv has developed the Liebert CWA in close consultation with experienced data centre operators. With data centres having a myriad of layouts and equipment configurations, Vertiv has defined a strategic roadmap to enhance standardised thermal management solutions for slab floor applications. Vertiv also provides consulting and design expertise to create the right solution for its customers’ specific data centre white space requirements.

Beatrice - 9 March 2023

Cooling

News

Product

Castrol’s work with Submer on immersion cooling gains momentum

Castrol is working with Submer, a frontrunner in immersion cooling systems. Castrol’s cooling fluids are designed to maximise data centre cooling efficiency, offer enhanced equipment protection and can help safeguard against facility downtime through comprehensive material testing.

Immersion cooling involves submerging electronic components in a non-conductive liquid coolant. Compared to conventional cooling methods, immersion cooling can help reduce the consumption of energy and water needed to cool servers and storage devices, and enables the reuse of generated waste heat.

Submer has now conducted a comprehensive compatibility study through its Immersion Centre of Excellence on Castrol ON Immersion Cooling Fluid DC 20. The product exceeded Submer’s technical requirements and has now been fully approved and warranty-backed for use across Submer equipment.

“This is a significant milestone in Castrol’s collaboration with Submer,” comments Nicola Buck, CMO, Castrol. “We are now well-positioned to work together in developing a joint offer to data centre customers and magnify each other’s market reach and impact. We aspire to make immersion cooling technology mainstream in the data centre industry and will develop integrated customer offers to further improve the technology and address hurdles for adoption.”

Nicola adds, “We strongly believe in the future of immersion cooling technology as it can help achieve significant operational benefits for the data centre industry and address some of its future challenges. Through this collaboration with Submer, we want to help optimise the efficiency and energy usage across some of the world’s most powerful data centres. This is also aligned to Guiding Principle 4 of our PATH360 sustainability programme and the work we are doing with our customers to help them save energy, waste and water.”

“Submer’s journey to fluid standardisation began in 2020. We're now proud to be seeing tangible results from our rigorous array of testing, including thermal performance, oxidation, and a sustainability assessment, all of which ensure all fluids meet industry standards. With Castrol on board, the widescale adoption of immersion cooling is one step closer”, says Peter Cooper, VP of Fluids and Chemistry at Submer.

Beatrice - 21 February 2023

Cooling

Data Centres

Improved data centre resilience and efficiency is a cool outcome from Schneider Electric upgrade at UCD

The Future Campus project at University College Dublin called for space utilised by facility plant and equipment to be given up for development to support the student population. Total Power Solutions, an Elite Partner to Schneider Electric, worked with UCD’s IT Services organisation to upgrade its primary data centre cooling system, to provide greater resilience for its HPC operations whilst releasing valuable real estate.

Introduction: data centres at Ireland’s largest university

University College Dublin (UCD) is the largest university in Ireland, with a total student population of about 33,000. It is one of Europe’s leading research-intensive universities with faculties of medicine, engineering, and all major sciences, as well as a broad range of humanities and other professional departments.

The university’s IT infrastructure is essential to its successful operation, for academic, administration and research purposes. The main campus at Belfield, Dublin is served by two on-premises data centres that support all the IT needs of students, faculty and staff, including high-performance computing (HPC) clusters for computationally intensive research. The main data centre in the Daedalus building hosts all the centralised IT including storage, virtual servers, Identity and Access Management, business systems, networking, and network connectivity, in conjunction with a smaller on-premises data centre.

“Security is a major priority, so we don’t want researchers having servers under their own desks. We like to keep all applications inside the data centre, both to safeguard against unauthorised access - as universities are desirable targets for hackers - and for ease of management and efficiency.”

Challenges: ageing cooling infrastructure presents downtime threat and reputational damage

Resilience is a key priority for UCD’s IT Services. Also, with its campus located close to Dublin’s city centre, real estate is at a premium. There are continuing demands for more student facilities and consequently the need to make more efficient use of space by support services such as IT. Finally, there is a pervasive need to maintain services as cost-effectively as possible and to minimise environmental impact in keeping with a general commitment to sustainability.

As part of a major strategic development of the university’s facilities called Future Campus, the main Daedalus data centre was required to free up some outdoor space taken up by a mechanical plant and make it available for use by another department. The IT Services organisation took this opportunity to revise the data centre cooling architecture to make it more energy and space efficient, as well as more resilient and scalable.

“When the data centre was originally built, we had a large number of HPC clusters and consequently a high rack power density,” says Tom Cannon, Enterprise Architecture Manager at UCD. “At the time we deployed a chilled-water cooling system as it was the best solution for such a load. However, as the technology of the IT equipment has advanced to provide higher processing capacity per server, the cooling requirement has reduced considerably even though the HPC clusters have greatly increased in computational power.”

One challenge with the chilled water system was that it relied upon a single set of pipes to supply the necessary coolant, which therefore represented a single point of failure. Any issues encountered with the pipework, such as leaks, could therefore threaten the entire data centre with downtime. This could create problems at any time in the calendar; however, were it to occur at critical moments, such as during exams or registration, it would have a big impact on the university community. Reputational damage, both internally and externally, would also be significant.

Solution: migration to Schneider Electric Uniflair InRow DX Cooling Solution resolves reliability, scalability and space constraints

UCD IT Services took the opportunity presented by the Future Campus project to replace the existing chilled water-based cooling system with a new solution based on Schneider Electric’s Uniflair InRow Direct Expansion (DX) technology, which uses a refrigerant vapour expansion and compression cycle. The condensing elements have been located on the roof of the data centre, conveniently freeing up significant ground space on the site formerly used for a cooling plant.

Following on from an open tender, UCD selected Total Power Solutions, a Schneider Electric Elite Partner, to deliver the cooling update project. Total Power Solutions had previously carried out several power and cooling infrastructure installations and upgrades on the campus and is considered a trusted supplier to the university. Working together with Schneider Electric, Total Power Solutions was responsible for the precise design of an optimum solution to meet the data centre’s needs and its integration into the existing infrastructure.

A major consideration was to minimise disruption to the data centre layout, keeping in place the Schneider Electric EcoStruxure Row Data Centre System (formerly called a Hot Aisle Containment Solution, or HACS). The containment solution is a valued component of the physical infrastructure, ensuring efficient thermal management of the IT equipment and maximising the efficiency of the cooling effort by minimising the mixing of the cooled supply air and the hot return (or exhaust) airstream.

The new cooling system provides a highly efficient, close-coupled approach which is particularly suited to high density loads. Each InRow DX unit draws air directly from the hot aisle, taking advantage of higher heat transfer efficiency, and discharges room-temperature air directly in front of the cooling load. Placing the unit in the row yields 100% sensible capacity and significantly reduces the need for humidification.

Cooling efficiency is a critical requirement for operating a low PUE data centre, but the most obvious benefit of the upgraded cooling system is the built-in resilience afforded by the 10 independent DX cooling units. No longer is there a single point of failure; there is currently sufficient redundancy in the system that if one of the units fails, the others can take up the slack and continue delivering cooling with no impairment of the computing equipment in the data centre.

“We calculated that we might just have managed with eight separate cooling units,” says Tom, “but we wanted the additional resilience and fault tolerance that using 10 units gave us.” Additional benefits of the new solution include its efficiency – the system is now sized according to the IT load and avoids the overcooling of the data centre both to reduce energy use and improve its PUE.

In addition, the new cooling system is scalable according to the potential requirement to add further HPC clusters or accommodate innovations in IT, such as the introduction of increasingly powerful but power-hungry CPUs and GPUs. “We designed the system to allow for the addition of four more cooling units if we need them in the future,” says Tom. “All of the power and piping needed is already in place, so it will be a simple matter to scale up when that becomes necessary.”
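The redundancy and headroom figures quoted above can be sketched as simple arithmetic. This is an illustrative calculation only: it assumes all cooling units have equal capacity and takes Tom's estimate of eight units as the number required for the current load. The function name `spare_units` is hypothetical.

```python
def spare_units(installed: int, required: int) -> int:
    """Return how many units could fail while the rest still cover the load.

    Assumes every unit has the same capacity (an assumption, not stated
    in the article).
    """
    if installed < 0 or required <= 0:
        raise ValueError("installed must be >= 0 and required must be > 0")
    return installed - required


REQUIRED_UNITS = 8    # "we might just have managed with eight"
INSTALLED_UNITS = 10  # units installed today
EXPANSION_UNITS = 4   # extra units the power and piping already allow for

# Spare units with today's installation.
current_spare = spare_units(INSTALLED_UNITS, REQUIRED_UNITS)

# Spare units after a full expansion, if the load stayed the same.
expanded_spare = spare_units(INSTALLED_UNITS + EXPANSION_UNITS, REQUIRED_UNITS)

print(f"Spare units today: {current_spare}")
print(f"Spare units after full expansion at today's load: {expanded_spare}")
```

Under these assumptions the present installation has two units more than the estimated minimum, which is consistent with the claim that a single unit failure does not impair cooling.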

Implementation: upgrading a live environment at UCD

It was essential while installing the new system that the data centre kept running as normal and that there was no downtime. The IT department and Total Power Solutions adopted what Tom Cannon calls a 'Lego block' approach: first consolidating some of the existing servers into fewer racks, then moving the new cooling elements into the freed-up space. The existing chilled-water system continued to function while the new DX-based system was installed, commissioned and tested. Finally, the obsolete cooling equipment was decommissioned and removed.

Despite the fact that the project was implemented at the height of the COVID-19 pandemic with all the restrictions on movement and the negative implications for global supply chains, the project ran to schedule and the new equipment was successfully installed and implemented without any disruption to IT services at UCD.

Results: a cooling boost for assured IT services and space freed for increased student facilities

The new cooling equipment has resulted in an inherently more resilient data centre with ample redundancy to ensure reliable ongoing delivery of all hosted IT services in the event that one of the cooling units fails. It has also freed up valuable real estate that the university can deploy for other purposes.

As an example, the building housing the data centre is also home to an Applied Languages department. “They can be in the same building because the noise levels of the new DX system are so much lower than the chilled-water solution,” says Tom. “That is clearly an important issue for that department, but the DX condensers on the roof are so quiet you can’t tell that they are there. It’s a much more efficient use of space.”

With greater virtualisation of servers, the overall power demand for the data centre has been dropping steadily over the years. “We have gone down from a power rating of 300kW to less than 100kW over the past decade,” says Tom. The Daedalus data centre now comprises 300 physical servers but there are a total of 350 virtual servers split over both data centres on campus.

To maximise efficiency, the university also uses EcoStruxure IT management software from Schneider Electric, backed up with a remote monitoring service that keeps an eye on all aspects of the data centre’s key infrastructure and alerts IT Services if any issues are detected.

The increasing virtualisation has seen the Power Usage Effectiveness (PUE) ratio of the data centre drop steadily over the years. PUE is the ratio of total power consumption to the power used by the IT equipment only and is a well understood metric for electrical efficiency. The closer to 1.0 the PUE rating, the better. “Our initial indications are that we have managed to improve PUE from an average of 1.42 to 1.37,” says Tom.

“However, we’re probably overcooling the data centre load currently, as the new cooling infrastructure settles. Once that’s happened, we’re confident that we can raise temperature set points in the space and optimise the environment in order to make the system more energy efficient, lower the PUE and get the benefit of lower cost of operations.”
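To make the PUE figures above concrete, here is a minimal sketch of the ratio and of what a drop from 1.42 to 1.37 would mean in kilowatts. The 100 kW IT load is a hypothetical round number chosen for illustration; the article gives the PUE values but does not state the IT load at which they were measured. The function names are also illustrative.

```python
def pue(total_kw: float, it_kw: float) -> float:
    """Power Usage Effectiveness: total facility power divided by IT power."""
    if it_kw <= 0:
        raise ValueError("IT power must be positive")
    return total_kw / it_kw


def total_power_kw(it_kw: float, pue_value: float) -> float:
    """Total facility power implied by an IT load and a PUE value."""
    return it_kw * pue_value


PUE_BEFORE = 1.42   # average quoted for the old cooling system
PUE_AFTER = 1.37    # initial indication for the new system
IT_LOAD_KW = 100.0  # hypothetical IT load, for illustration only

before_kw = total_power_kw(IT_LOAD_KW, PUE_BEFORE)
after_kw = total_power_kw(IT_LOAD_KW, PUE_AFTER)

# Overhead is everything that is not IT load (cooling, power losses, etc.).
overhead_before_kw = before_kw - IT_LOAD_KW
overhead_after_kw = after_kw - IT_LOAD_KW
overhead_cut = 1 - overhead_after_kw / overhead_before_kw

print(f"Total power before: {before_kw:.1f} kW, after: {after_kw:.1f} kW")
print(f"Non-IT overhead reduced by {overhead_cut:.1%}")
```

Because PUE only measures overhead relative to IT load, a change of 0.05 looks small but corresponds to a larger proportional cut in the non-IT power itself.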

The overall effects of installing the new cooling system are therefore: greater resilience and peace of mind; more efficient use of space for the benefit of the university’s main function of teaching; greater efficiency of IT infrastructure; and consequently a more sustainable operation into the future.

Carly Weller - 23 November 2022

Cooling

Data Centres

Green IT

Infrastructure Management

Swindon data centre goes carbon neutral in sustainability push

A data centre in Swindon, Carbon-Z, has become one of the first in the UK to be fully carbon neutral, following an overhaul of its site and work practices. This includes the submersion of all hardware components in cooling liquid and sourcing electricity from green energy providers. Plans are also in place for installing solar panels on the site’s roof.

The site was previously known as SilverEdge and is rebranding itself to reflect the change of direction in how it operates and the services it provides to clients. It now hopes to inspire a wider shift towards sustainability within the data centre industry, which accounts for more greenhouse gas emissions annually than commercial flights.

Jon Clark, Commercial and Operations Director at Carbon-Z, comments, “As the UK and the world move towards achieving net zero emissions by 2050, our industry is responsible for making data centres greener and more efficient. At Carbon-Z, we continually look for new ways to improve our sustainability, with the goal being to get our data centres to carbon neutral, then carbon zero and then carbon negative. We believe this is possible and hope to see a wider movement among our peers in the same direction over the coming years.”

Playing it cool

The growing intensity of computing power, as well as high performance demands, has resulted in rapidly rising temperatures within data centres and a negative cycle of energy usage. More computing means more power, more power means more heat, more heat demands more cooling, and traditional air-cooling systems consume massive amounts of power, which in turn contributes to the heating up of sites.

To get around this, Carbon-Z operates using liquid immersion cooling, a technology which involves the submersion of hardware components in a dielectric liquid, which does not conduct electricity and conveys heat away from the heat source. This greatly reduces the need for cooling infrastructure and costs less than traditional air cooling. The smaller amount of energy needed to power the Swindon site can now be sourced through Carbon-Z’s Green Energy Sourcing.

While it's clear that immersion cooling is quickly catching on (the market is predicted to grow from $243 million this year to $700 million by 2026), the great majority of the UK’s more than 600 data centres are not making use of it, and continue to operate in a way which is highly energy intensive and carbon emitting.
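The market forecast quoted above implies a compound annual growth rate that can be worked out directly. This is a rough sketch: it assumes "this year" means 2022, the year of the article's byline, giving a four-year span to 2026. The function name `cagr` is illustrative.

```python
def cagr(start_value: float, end_value: float, years: float) -> float:
    """Compound annual growth rate between two values over a number of years."""
    if start_value <= 0 or years <= 0:
        raise ValueError("start_value and years must be positive")
    return (end_value / start_value) ** (1 / years) - 1


START_MUSD = 243.0  # forecast starting point, in millions of dollars
END_MUSD = 700.0    # forecast for 2026, in millions of dollars
YEARS = 4           # assumes the starting year is 2022

rate = cagr(START_MUSD, END_MUSD, YEARS)
print(f"Implied annual growth: {rate:.1%}")
```

On those assumptions the forecast corresponds to growth of roughly 30% a year.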

Riding the wave

As part of its rebrand, Carbon-Z has also updated the kinds of services it offers to customers to make sure that they are financially, as well as environmentally, sustainable. Its new service, Ocean Cloud, has been designed with this in mind, providing customers dedicated servers and a flat-fee approach to financing.

Having a dedicated server within a data centre means that spikes in demand from other tenants have no effect at all on yours, avoiding the ‘noisy neighbour’ problem associated with the multi-tenant model favoured by many large operators. This makes the performance of the server more reliable and energy efficient.

Ocean Cloud also solves one of the other major problems with other cloud services - overspend - through its flat-fee approach. Customers are charged a fixed fee that covers the dedicated server and associated storage, as well as hosting and remote support of the hardware infrastructure to reduce maintenance overheads.

Jon comments, “We are very proud of Ocean Cloud, as it allows us to offer clients a service that is not only better for the ocean, the planet and for our local communities than other hosted services, but also brings clear operational and cost-related benefits. Striking this balance is crucial to ensure customers are on board with the transition to more sustainable data centre operations, especially at times like these when many companies are feeling the financial pinch off the back of rising inflation.”

Beatrice - 28 October 2022
