News

Data Centre Build News & Insights

Data Centre Projects: Infrastructure Builds, Innovations & Updates

News

Renewables and Energy: Infrastructure Builds Driving Sustainable Power

Green Horizon secures approval for Norway data centre

Green Horizon, a Norwegian developer of hydropower-backed, AI-ready data centres, has received planning approval for Norway 1, a 36MW data centre development near Stavanger that is scheduled to enter service in the second half of 2027.

The approval follows the earlier granting of zoning permission for the site and allows the Norwegian developer to progress to final design and construction. The company is currently working with consultants and contractors ahead of a planned construction start later this year.

Located on Norway's southwest coast, Norway 1 is being developed as a carrier-neutral and cloud-neutral facility designed to provide connectivity to the UK, mainland Europe, and onward routes to North America.

The facility will be built to Tier III standards and is designed to support high-density AI, GPU, and high-performance computing (HPC) workloads. Green Horizon says the site will include two 'Meet-Me Rooms', diverse connectivity options, and access to multiple network providers.

Norway 1 forms the first phase of the company's wider data centre platform in the Stavanger region, where 96MW of power capacity has been secured across three planned developments.

The company says the facility will be powered by renewable hydropower and is targeting a power usage effectiveness (PUE) rating of 1.1 at full load.
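As a rough illustration of what that target implies: PUE is the ratio of total facility power to IT power, so a PUE of 1.1 means about 0.1W of cooling and other overhead for every watt delivered to IT equipment. The sketch below assumes, purely for illustration, that the 36MW figure refers to IT load; the announcement does not specify this, and the function names are hypothetical.

```python
def facility_power_mw(it_load_mw: float, pue: float) -> float:
    """Total facility power implied by an IT load and a PUE value."""
    if it_load_mw < 0:
        raise ValueError("IT load cannot be negative")
    if pue < 1.0:
        raise ValueError("PUE cannot be below 1.0")
    return it_load_mw * pue


def overhead_power_mw(it_load_mw: float, pue: float) -> float:
    """Non-IT power (cooling, distribution losses and similar overhead)."""
    return facility_power_mw(it_load_mw, pue) - it_load_mw


if __name__ == "__main__":
    # Assumption: 36MW is treated as IT load; the source does not say so.
    it_load = 36.0
    target_pue = 1.1
    total = facility_power_mw(it_load, target_pue)
    overhead = overhead_power_mw(it_load, target_pue)
    print(f"Total facility power: {total:.1f} MW")
    print(f"Overhead power: {overhead:.1f} MW")
```

Under that assumption, the overhead works out to roughly 3.6MW at full load.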

Heat reuse strategy integrated into design

A key element of the project is its heat reuse strategy. Green Horizon plans to supply excess heat generated by the data centre to both a new greenhouse that will be integrated into the facility's design and an existing commercial greenhouse located adjacent to the site.

According to the company, the new greenhouse will be constructed directly above the data centre, enabling waste heat to be reused as part of a wider symbiosis partnership with Norway's largest greenhouse operator.

The concept has been technically validated and approved by the local municipality.

Operations at the site will be supported by CBRE, which will provide operational services and monitoring.

Richard Rettedal, CEO of Green Horizon, comments, "Securing planning approval for Norway 1 marks a major milestone for Green Horizon and for our ambition to build Norway’s AI data centre platform.

"Customers deploying AI and high-performance compute need dependable capacity, resilience, and a clear route to scale. Norway 1 is designed to deliver high-density infrastructure powered by renewable hydropower, with heat reuse enabled by design, supporting both lower-cost operation and a lower operational footprint.

"We’re proud that this project will contribute to the local community and bring new, renewable powered capacity to the market."

The €300 million (£259 million) development is expected to create around 400 construction jobs during the build phase and contribute additional renewable-powered data centre capacity to Norway's digital infrastructure.

Construction is expected to begin later in 2026, with the facility targeted to become operational in the second half of 2027.

Joe Peck - 9 June 2026

Data Centre Infrastructure News & Trends

Innovations in Data Center Power and Cooling Solutions

Products

ABB launches grid stability package for data centres

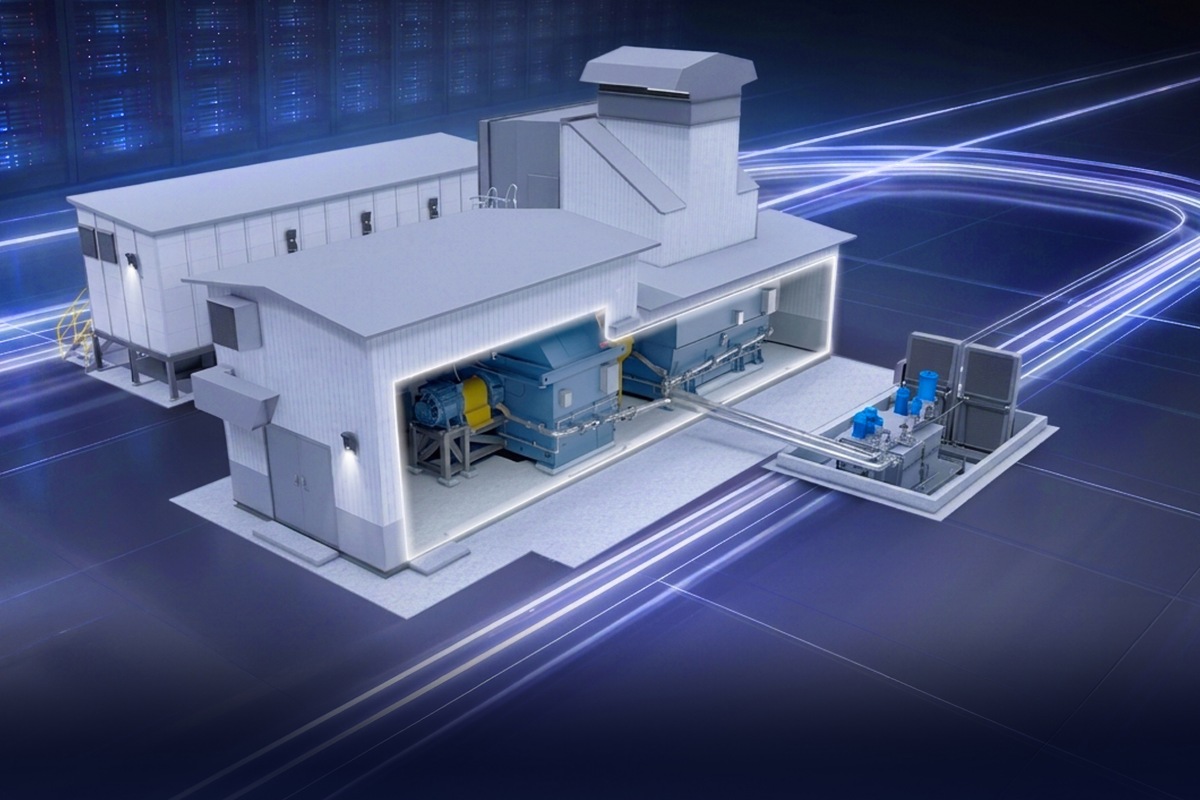

ABB, a multinational corporation specialising in industrial automation and electrification products, has introduced a pre-engineered synchronous condenser package designed to help data centre operators address grid stability challenges associated with growing AI workloads and increasing power demand.

The company says the modular system is intended to support power network stability at grid connection points, helping operators connect new capacity while maintaining reliable power system performance.

As AI adoption increases, data centres are placing greater demands on electricity networks. Large and rapidly changing power loads can affect voltage and frequency stability, creating challenges for both grid operators and data centre developers seeking new connections.

ABB's synchronous condenser package is designed to provide instantaneous inertia and dynamic reactive power, helping to stabilise voltage and frequency during sudden changes in demand.

According to ABB, the pre-engineered design is intended to simplify deployment by reducing engineering requirements, installation complexity, and project delivery times.

The package combines a synchronous condenser, flywheel, starting system, lubrication system, cooling infrastructure, auxiliary equipment, e-house, and optional noise enclosure within a standardised design.

The flywheel includes an integrated safety enclosure and is designed specifically to support electrical network stabilisation.

Supporting AI-driven power demands

ABB says the solution can help operators address grid stability requirements earlier in the development process, potentially simplifying approvals and supporting future capacity expansion without significant changes to core power infrastructure.

The company also states that providing mechanical, electrical, and control systems through a single supplier can reduce on-site integration requirements and streamline project delivery.

David Bjerharg, Business Line Manager, High Speed Synchronous at ABB, notes, "As data centres become increasingly widespread and AI-driven demand increases, grid stability is becoming a fundamental requirement for ongoing expansion.

"This solution enables operators to connect faster, operate reliably from day one, and scale with confidence."

The launch reflects growing industry focus on power infrastructure capable of supporting AI-driven facilities, where high-density computing workloads can create significant fluctuations in electricity demand.

ABB says the synchronous condenser package is intended to support long-term infrastructure performance while helping operators deploy new data centre capacity more efficiently.

Joe Peck - 9 June 2026

Data Centre Business News and Industry Trends

Insights into Data Centre Investment & Market Growth

News

AMD commits £2bn to UK AI infrastructure

AMD, an American multinational semiconductor company, has announced plans to invest up to £2 billion in the UK over the next five years to support computing infrastructure, AI research, and technology development.

The investment, announced during London Tech Week, includes collaborations with government, academia, and industry aimed at expanding access to high-performance computing (HPC) resources and supporting the UK's AI ambitions.

AMD says the initiative aligns with the UK Government's AI Opportunities Action Plan and AI Hardware Strategy, which seek to strengthen the country's AI infrastructure, skills base, and adoption of AI technologies.

Lisa Su, Chair and CEO of AMD, explains, "The United Kingdom has the talent, research excellence, and ambition to help lead the next era of AI.

"AMD is proud to deepen our commitment to the UK and work with partners across government, academia, and industry to expand access to the compute infrastructure needed to advance sovereign AI, accelerate discovery, and drive long-term economic growth."

The announcement was welcomed by government ministers, who highlighted the potential impact on AI research, skills development, and economic growth.

Rachel Reeves, Chancellor of the Exchequer, says, "This investment is a major vote of confidence in Britain’s place as a global AI superpower. We’ve got the talent, the world-class universities, and the ambition to lead, and partnerships like this help turn that potential into real progress.

"It will drive more cutting-edge research here in the UK, open up opportunities for people to build the skills they need for the jobs of the future, and speed up breakthroughs that can improve people’s lives and grow our economy."

New partnerships to support AI research

As part of the investment programme, AMD has announced a collaboration with Imperial College London focused on computational science and research areas that require large-scale computing resources, including healthcare and climate modelling.

The organisations also plan to explore ways to optimise AI models, scientific workflows, and data-intensive applications using AMD hardware and its ROCm software platform.

AMD is also working with Oriole Networks as part of the UK Advanced Research and Invention Agency's (ARIA) Scaling Inference Lab initiative. The project will combine Oriole's photonic networking technology with AMD GPUs and processors to investigate new approaches for scaling AI inference workloads while improving performance and energy efficiency.

According to AMD, the initiative could contribute to the development of what is expected to be the world's first large-scale AI system built on a pure photonic network.

Expanding UK AI supercomputing capacity

AMD and Dell Technologies are also supporting the University of Cambridge's growing AI infrastructure programme, including the Zenith AI supercomputer and the Sunrise fusion AI system being developed with the UK Atomic Energy Authority (UKAEA).

Zenith is funded by the Department for Science, Innovation and Technology and UK Research and Innovation, while Sunrise is funded by the Department for Energy Security and Net Zero and will support fusion energy research.

The systems will be used across a range of 'AI-for-science' applications, including healthcare research, climate modelling, materials science, engineering simulation, fusion research, and AI model development.

Liz Kendall, Technology Secretary, says, "This investment reflects the strength of Britain’s talent, research, and ambition in AI, but also the infrastructure we are putting in place to match it.

"With world-class chip designers, leading universities, and partners such as AMD choosing to invest here, we are building the compute capability needed to power innovation, drive growth, create jobs, and ensure the most advanced AI technologies are developed in the UK."

AMD says it will continue working with partners across government, academia, and industry to expand computing capacity and support future scientific and technological research in the UK.

Joe Peck - 9 June 2026

Data Centre Infrastructure News & Trends

Data Centre Security: Protecting Infrastructure from Physical and Cyber Threats

Innovations in Data Center Power and Cooling Solutions

Products

Security Risk Management for Data Centre Infrastructure

Siemens, Infineon partner on data centre circuit protection

German technology company Siemens and German semiconductor manufacturer Infineon Technologies have partnered to develop electrical protection technology for data centres, industrial facilities, and battery energy storage systems (BESS).

Under the agreement, Infineon will supply silicon carbide (SiC) power modules for use in Siemens's SENTRON 3QD2 semiconductor circuit breakers, designed to improve efficiency, power density, and reliability in power distribution systems.

According to the companies, growing electrification and the increasing complexity of AI data centres and industrial operations are driving demand for faster and more reliable electrical protection.

A semiconductor circuit breaker, also known as a solid-state circuit breaker, is designed to protect electrical circuits from excessive current caused by faults such as short circuits and overloads.

Unlike conventional electromechanical breakers, which use mechanical components to interrupt current flow, semiconductor-based devices use electronic components and control algorithms to react significantly faster.

Siemens says the SENTRON 3QD2 can interrupt current in the microsecond range, making it suitable for direct current (DC) power systems where rapid fault isolation is required to minimise downtime and equipment damage.

Andreas Weisl, Executive Vice President and Chief Sales Officer of Industrial and Infrastructure at Infineon, notes, "AI data centres and factories are becoming increasingly electrified and complex.

"This increases vulnerability to electrical failures and drives the demand for more sustainable, efficient, and reliable power distribution systems.

"By combining our advanced silicon carbide technology with Siemens's expertise in power distribution, we are addressing this demand to ensure fast, safe, and reliable operations in power-critical environments."

Growing interest in DC power systems

The collaboration centres on Infineon's CoolSiC MOSFET power module, which has been integrated into Siemens's semiconductor circuit breaker platform.

The companies say the technology supports the wider adoption of DC power distribution systems, which are gaining attention in industrial environments and data centres because of their potential efficiency benefits and ability to integrate more effectively with battery storage systems.

Markus Grabmeier, CEO Electrical Products at Siemens Smart Infrastructure, comments, "Our new direct current portfolio offers innovative solutions that not only improve energy efficiency but also enable the development of resilient, future-proof infrastructure.

"Direct current applications can decrease energy consumption and substantially cut material usage. By integrating batteries, peak power can also be significantly reduced.

"With this approach, we are making a decisive contribution to the decarbonisation of our industries, while reinforcing our commitment to developing technologies that deliver tangible value to our customers and society."

The companies state that the partnership is intended to support the growing requirements of power-critical environments where electrical protection systems must operate quickly and reliably to maintain availability and reduce the risk of service disruption.

The SENTRON 3QD2 semiconductor circuit breaker will be demonstrated at PCIM Europe 2026 in Nuremberg, Germany, from 9–11 June.

Joe Peck - 8 June 2026

Data Centre Infrastructure News & Trends

Events

Innovations in Data Center Power and Cooling Solutions

A-Gas to attend DCN Toronto as sponsor

A-Gas, a company specialising in lifecycle refrigerant management (LRM), will attend Data Center Nation (DCN) Toronto in Canada on 9 June as an official sponsor, following its participation as a Gold Sponsor at DCN Milan earlier this year.

The company is increasing its engagement with the data centre sector as demand for digital infrastructure continues to grow and cooling efficiency remains a key consideration for operators.

Its services cover the recovery, reclamation, reuse, and disposal of refrigerants. While the company has traditionally operated in sectors including HVAC, automotive, and cold chain logistics, it is expanding its focus on data centres and their cooling requirements.

Operating in 15 countries, A-Gas provides refrigerant supply services alongside refrigerant recovery and management programmes for facilities undergoing equipment replacement or decommissioning.

Refrigerant management remains key cooling consideration

As data centre operators deploy higher-density infrastructure and adopt new cooling technologies, refrigerant management is becoming an increasingly important aspect of sustainability and operational planning.

A-Gas says its offering includes on-site refrigerant recovery services, reclaimed refrigerant supply, and the destruction of refrigerants that cannot be processed for future reuse.

The company says it will use the event to meet industry stakeholders and discuss approaches to cooling infrastructure management within data centre environments.

Joe Peck - 5 June 2026

Colocation Strategies for Scalable Data Centre Operations

Data Centre Infrastructure News & Trends

Data Centre Operations: Optimising Infrastructure for Performance and Reliability

Edge Computing in Modern Data Centre Operations

Enclosures, Cabinets & Racks for Data Centre Efficiency

Products

nLighten launches rapid colocation deployment service

European data centre operator nLighten has launched ReadyCabinet, a standardised colocation offering designed to reduce deployment times for organisations requiring edge infrastructure across Europe.

The service provides customers with a pre-built, fixed-price colocation cabinet and is designed to enable deployments within three working days of an order being placed.

According to nLighten, ReadyCabinet is intended to simplify the process of procuring colocation capacity by replacing bespoke design and engineering processes with a standardised offering.

Customers can choose a full or partial cabinet configuration, including up to 5kW of power and access to nConnect, the company's connectivity platform.

Joachim van Collenburg, Vice President of Enabling Services at nLighten, says, "We are moving colocation away from bespoke engineering and turning it into a scalable product.

"ReadyCabinet reflects that reality. It's a deliberately simple product, built to be the entry point to a much longer journey with our customers."

The deployment process consists of a quotation with real-time availability, a service order agreement, and cabinet handover within three working days.

Standardised approach targets edge infrastructure growth

nLighten says the service has been developed in response to increasing demand for rapid, repeatable infrastructure deployments across multiple locations.

The company cites the growth of AI inference, low-latency applications, and edge computing as drivers behind the need for faster provisioning and more standardised colocation services.

ReadyCabinet forms part of nLighten's wider colocation platform, which allows customers to expand from a single cabinet deployment to higher-density and liquid-cooled environments across its European data centre portfolio.

All ReadyCabinet deployments operate across nLighten's European edge platform and include metered power billing.

The service is currently available at selected nLighten facilities, with further expansion planned throughout 2026.

Joe Peck - 5 June 2026

Data Centre Infrastructure News & Trends

Innovations in Data Center Power and Cooling Solutions

News

EUDCA backs EU data centre energy integration plan

The European Data Centre Association (EUDCA), the representative body of the European data centre community, has co-signed a Declaration of Intent aimed at improving the integration of data centres within the European Union's energy system.

The agreement supports the objectives of the European Commission's Strategic Roadmap for Digitalisation and AI in the Energy Sector and seeks to strengthen cooperation between data centre operators, energy providers, grid operators, and public authorities.

As investment in AI, cloud computing, and digital infrastructure continues to increase across Europe, the declaration is intended to help establish common frameworks for planning and coordinating future infrastructure development.

According to the signatories, the initiative will contribute to the development of shared principles, procedures, and best practices that can be adopted by EU Member States to support sustainable growth in data centre capacity.

The declaration aligns with several European policy initiatives, including the Data Centre Energy Efficiency Package, the European Grids Package, and the proposed Cloud and AI Development Act.

Industry groups target closer energy sector collaboration

The declaration has been signed by organisations representing a broad range of sectors, including electricity networks, energy storage, renewable energy, district heating, and digital infrastructure.

Among the signatories are the EUDCA, Eurelectric, ENTSO-E, WindEurope, SolarPower Europe, Energy Storage Europe, and the EU DSO Entity.

Lex Coors, President of the EUDCA, says, "The energy system can no longer be viewed as a single connection to a single data centre. Europe is moving into a more complex, four-dimensional environment where capacity, flexibility, sustainability, and digital resilience must be planned together.

"Data centres are becoming part of the wider energy system, and this Declaration of Intent is an important step towards building that cooperation in a responsible and future-proof way."

The declaration establishes a series of working groups focused on areas including grid planning, connection agreements, flexibility services, energy generation, and energy storage.

Working groups to address future capacity requirements

Europe is expected to expand its data centre capacity significantly over the next five to seven years as AI infrastructure investment accelerates. The declaration is intended to support this growth while helping Member States meet wider energy and sustainability objectives.

Michael Winterson, Secretary General of the EUDCA, explains, "Europe’s AI, cloud, and digital ambitions will require significant new infrastructure capacity over the coming years. Delivering that growth responsibly will depend on much closer coordination between the digital infrastructure and energy sectors.

"This Declaration of Intent shows our commitment to partner with energy providers, local authorities, and wider EU institutions to deliver on advanced technologies, energy, and sustainability ambitions."

The EUDCA says it will contribute technical and policy expertise to the working groups as discussions progress, supporting the development of future frameworks for cooperation between Europe's digital infrastructure and energy sectors.

Joe Peck - 4 June 2026

Data Centre Build News & Insights

Data Centre Projects: Infrastructure Builds, Innovations & Updates

News

Corscale begins work on 140MW Iver data centre

Hyperscale data centre developer Corscale has appointed UK contractors McLaren Construction and Phoenix ME under a pre-construction services agreement to begin predevelopment works for a 140MW data centre campus in Iver, Buckinghamshire, UK.

Located on a 14-acre (approximately 56,700m²) site at Court Lane near the M25, the development forms part of the West London data centre market and represents a significant addition to UK digital infrastructure capacity.

The project will comprise two data centre buildings and a dedicated 140MVA substation. Designed by Gensler, the scheme includes architectural features intended to complement a nearby Grade II listed farmhouse, alongside measures aimed at supporting biodiversity.

McLaren Construction will serve as main contractor, while Phoenix ME will act as MEP delivery partner. Gensler is supported by Cundall on MEP design and L&P Group on engineering services.

Julian Michalski, Head of Development for Corscale Europe, says, "This is, by design, an exceptional collaboration of a tier-one team.

"It brings a combination of expertise and experience - each with a strong track record in complex, mission-critical environments - to deliver superior quality, programme certainty, and technical assurance at every stage, ensuring we meet programme deadlines and our practical completion date in late 2029."

Site clearance and remediation works begin in July

Predevelopment activities are scheduled to begin on 1 July 2026 and will include site clearance, enabling works, utility diversions, and environmental remediation.

The site is currently occupied by a mix of industrial uses, including vehicle storage, waste transfer operations, recycling facilities, concrete and aggregate storage, and tyre distribution businesses.

Among the first phases of work will be the relocation of two 36in (91cm) water mains by Affinity Water and the implementation of a site-wide remediation strategy.

David McDonnell, Managing Director for Data Centres at McLaren Construction, notes, "As data centres become larger, more powerful, and more complex, we become all the more reliant on the latest construction technology to achieve the project management and precision that this design requires.

"We are proud to be partnering with Corscale and this outstanding project team on what promises to be a landmark scheme, and we look forward to progressing works on site."

The project is targeting practical completion during the fourth quarter of 2029.

Joe Peck - 3 June 2026

Data Centre Infrastructure News & Trends

Enterprise Network Infrastructure: Design, Performance & Security

News

LINX offers 15 months free at NoVA

The London Internet Exchange (LINX), an internet exchange point (IXP) operator, has launched a new initiative offering 15 months of no-charge port access and peering services at its LINX NoVA internet exchange in Ashburn, Virginia, USA.

Available from 1 June 2026, the offer applies to both existing members upgrading services and organisations joining LINX for the first time. Participants signing up to an 18-month service term will receive the first 15 months without charge.
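The arithmetic behind the offer can be sketched as follows. The monthly port fee used here is a hypothetical placeholder, not a LINX price; only the 15-month and 18-month figures come from the announcement.

```python
def effective_discount(free_months: int, term_months: int) -> float:
    """Fraction of the term's list cost waived by the free period."""
    if term_months <= 0:
        raise ValueError("Term must be at least one month")
    if not 0 <= free_months <= term_months:
        raise ValueError("Free months must be between 0 and the term length")
    return free_months / term_months


def average_monthly_cost(list_fee: float, free_months: int, term_months: int) -> float:
    """Average cost per month once the free period is spread over the term."""
    paid_months = term_months - free_months
    # Validation of the month values is delegated to effective_discount.
    effective_discount(free_months, term_months)
    return list_fee * paid_months / term_months


if __name__ == "__main__":
    hypothetical_fee = 1000.0  # placeholder monthly fee, not a real price
    discount = effective_discount(15, 18)
    average = average_monthly_cost(hypothetical_fee, 15, 18)
    print(f"Effective discount over the term: {discount:.1%}")
    print(f"Average monthly cost: {average:.2f}")
```

Fifteen free months in an 18-month term is equivalent to a discount of about 83% across the full term, with only the final three months billed.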

LINX says the initiative has been introduced to help network operators manage increasing traffic demands and cost pressures while expanding their interconnection capabilities.

Established in 2014, LINX NoVA operates within Ashburn's 'Data Center Alley', one of the world's largest internet interconnection markets. The exchange spans five data centre campuses operated by Equinix, Digital Realty, Iron Mountain, CoreSite, and QTS.

More than 50 networks are connected to the platform, including content delivery networks, internet service providers, and cloud operators.

Jennifer Holmes, CEO of LINX, says, "We recognise that network operators are managing a complex environment right now, from capacity planning to cost control. As a member-owned organisation, our role is to listen carefully to the feedback from our membership and monitor trends in the industry, acting where we can.

"This initiative is about supporting our community in a practical way - creating space for networks to plan, grow, and adapt without immediate pressure."

Ashburn exchange continues to expand network ecosystem

LINX NoVA operates as a carrier-neutral internet exchange and allows participants to establish peering relationships through a single connection across its multi-site infrastructure.

The organisation says the new programme is intended to encourage greater traffic localisation, increased peering activity, and further interconnection growth within the region.

Jennifer continues, "We want to remind our members why LINX has remained a global leader in interconnection for over 30 years.

"The difference is in the engineering discipline, the resilience of the platform, and the depth of operational support we provide. Not all IXPs are built the same - and when networks rely on interconnection for critical traffic paths, there’s very little margin for error.

"Packet loss, instability, or downtime can have a direct and immediate impact on revenue and customer experience. At LINX, we’ve built our reputation on removing that risk, delivering a level of reliability and support that our members can depend on without question."

The exchange is supported by a fully redundant architecture, a 24/7 Network Operations Centre, and a distributed platform spanning multiple data centre locations.

LINX operates as a member-owned organisation and says revenue is reinvested into the development of its infrastructure and services.

Joe Peck - 2 June 2026

Data Centre Infrastructure News & Trends

Innovations in Data Center Power and Cooling Solutions

Liquid Cooling Technologies Driving Data Centre Efficiency

Products

Schneider Electric unveils Uniflair XCA chillers

Global energy technology company Schneider Electric has introduced the Uniflair XCA range of air-cooled and free-cooling chillers, designed for high-density, liquid-cooled data centres supporting AI workloads.

The new portfolio comprises the Uniflair XCAC air-cooled series and the Uniflair XCAF free-cooling series. Both incorporate oil-free centrifugal compressors with magnetic bearing technology and variable-speed drives to support operation across varying thermal loads and environmental conditions.

The chillers are available in six sizes, ranging from 1,200kW to 2,500kW, and utilise low global warming potential (GWP) refrigerants. Schneider Electric says the systems are designed to support elevated water temperatures commonly associated with liquid cooling deployments in AI data centres.

Andrew Bradner, Senior Vice President, Cooling Business at Schneider Electric, notes, "Energy efficiency, adaptability, and reliability are essential components of liquid cooling systems for AI-optimised data centres, and we’ve designed the Uniflair XCA line with these most important design features at the forefront.

"With adaptable water operating temperatures and versatile deployment options, the XCA line features a system-level approach that gives operators scalability, enhanced performance, and long-term peace of mind as data centre complexity continues to rise."

Cooling infrastructure adapts to rising AI power densities

As AI applications, GPU clusters, and liquid cooling deployments increase data centre power densities, cooling infrastructure is becoming an increasingly important factor in facility efficiency and reliability.

The Uniflair XCA platform incorporates oil-free magnetic bearing centrifugal compressors, which remove the need for lubrication systems and are intended to reduce maintenance requirements and mechanical losses.

The chillers also feature a spray evaporator combined with V-shaped microchannel coils, designed to improve heat exchange performance while reducing refrigerant volume and material usage.

For free-cooling deployments, the XCAF models support water outlet temperatures of up to 33°C and are designed to operate in ambient temperatures ranging from -20°C to 52°C. Schneider Electric states that, in suitable climates, the free-cooling configuration can reduce energy consumption compared with mechanical cooling systems by extending free-cooling operating periods.

The range can also be configured with a variety of electrical, hydraulic, acoustic, and performance options to suit different deployment requirements.

A quick restart capability is also included, reportedly enabling systems to return to full operating capacity within three minutes of a power outage.

New control features target operational efficiency

The XCA range also introduces new firmware and control functions designed to optimise cooling performance.

These include variable-speed pump algorithms supporting constant flow, constant temperature differential, and constant head pressure operation, alongside advanced fan control modes that can be adjusted according to temperature, load conditions, or scheduled operating periods.
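To illustrate the constant temperature differential mode in general terms: for a given heat load, the water flow needed to hold a fixed temperature difference follows from the heat balance Q = m × cp × ΔT. The sketch below applies that relation to the smallest and largest capacities in the range. The 6K temperature difference and the use of pure water are illustrative assumptions, the function name is hypothetical, and this is not Schneider Electric's control algorithm.

```python
# Approximate specific heat of water in kJ/(kg*K); glycol mixes would differ.
WATER_CP_KJ_PER_KG_K = 4.186


def required_flow_kg_s(
    heat_load_kw: float,
    delta_t_k: float,
    cp_kj_per_kg_k: float = WATER_CP_KJ_PER_KG_K,
) -> float:
    """Mass flow needed to carry a heat load at a fixed temperature difference.

    Rearranges Q = m * cp * dT; kW / (kJ/(kg*K) * K) gives kg/s.
    """
    if heat_load_kw < 0:
        raise ValueError("Heat load cannot be negative")
    if delta_t_k <= 0:
        raise ValueError("Temperature difference must be positive")
    return heat_load_kw / (cp_kj_per_kg_k * delta_t_k)


if __name__ == "__main__":
    assumed_delta_t = 6.0  # illustrative value, not from the source
    for capacity_kw in (1200.0, 2500.0):
        flow = required_flow_kg_s(capacity_kw, assumed_delta_t)
        print(f"{capacity_kw:.0f} kW at dT={assumed_delta_t} K -> {flow:.1f} kg/s")
```

Because flow scales linearly with load at a fixed temperature difference, a variable-speed pump in this mode slows down as the heat load falls.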

Additional monitoring capabilities include energy metering and real-time water flow measurement to provide greater visibility into system performance.

According to Schneider Electric, these features are designed to reduce compressor cycling and improve long-term operational stability.

The first Uniflair XCA chiller units are scheduled to begin shipping globally in June 2026.

Joe Peck - 2 June 2026

Head office & Accounts:

Suite 14, 6-8 Revenge Road, Lordswood

Kent ME5 8UD

T: +44 (0)1634 673163

F: +44 (0)1634 673173