Exclusive

Data Centre Infrastructure News & Trends

Innovations in Data Center Power and Cooling Solutions

Liquid Cooling Technologies Driving Data Centre Efficiency

Data centre cooling in the AI era

During a busy Data Centre World London 2026, Joe from DCNN caught up with Alistair Barnes, Global Head of Mechanical Engineering at Colt DCS, to ask how the mechanical engineering discipline is evolving in response to the rapid rise of AI workloads.

The two discussed a variety of topics, from the shift towards liquid cooling solutions to the challenge of keeping pace with ever-increasing rack-level power densities.

Here, you can read the full Q&A, in which Alistair shares his perspective on where liquid cooling stands today, how Colt DCS's Global Reference Design philosophy shapes its approach to data centre infrastructure, and what he believes remains the industry's toughest unsolved engineering challenge:

Liquid cooling, rack densities, and the future of mechanical engineering

Joe: Hi, Alistair! So, how is mechanical engineering keeping pace with the shift to higher-density AI workloads?

Alistair: Mechanical engineers are keeping pace with higher‑density AI workloads by moving beyond traditional air‑only cooling and rethinking the entire thermal design stack. Instead of simply supplying cold air, they now operate more like system integrators, collaborating closely with IT and facilities teams to cool heat‑intensive components such as GPUs. This includes integrating direct‑to‑chip cold plates, liquid distribution loops, and hybrid cooling systems capable of managing the extreme heat generated by modern AI hardware.

Joe: In your opinion, is liquid cooling now a mainstream solution or still a specialist one?

Alistair: Liquid cooling is becoming increasingly mainstream, but the industry isn’t yet at a point where it can rely on liquid alone, as air still plays an important role in most deployments. Operators adopting Global Reference Designs (GRDs) now include liquid‑cooling options to support high‑density AI workloads that air alone can’t efficiently manage. As a result, many still use hybrid setups that combine air cooling with liquid where needed. Closed‑loop systems, such as liquid‑to‑chip, circulate coolant in a sealed loop, ensuring near‑zero wastewater and making them practical and sustainable.

Joe: Where does mechanical engineering sit in Colt DCS's broader data centre design philosophy?

Alistair: Mechanical engineering sits at the core of our design philosophy, supporting our commitment to delivering scalable, efficient, and sustainable data centre solutions. We adopt a GRD, a standardised and repeatable blueprint that accelerates deployment, optimises cost, and maintains consistent quality while remaining flexible enough to meet local requirements. Mechanical engineers play a key role in shaping the GRD, ensuring mission-critical cooling infrastructure and integrating new technologies across sites to support future growth and reliable operations.

Joe: What's the hardest engineering problem the industry hasn't solved yet?

Alistair: The hardest engineering problem the industry hasn’t solved is keeping pace with the accelerating rise in rack‑level power densities. Liquid cooling is advancing quickly and can manage far more heat than ever before, but single‑rack densities approaching 2MW and beyond are increasing faster than these solutions can be deployed at scale. The real challenge is delivering this capacity sustainably - balancing cooling performance, energy efficiency, and power availability - all while accelerating build timelines to keep up with customer demand.

Joe Peck - 30 March 2026

Data Centre Build News & Insights

Exclusive

Exploring Modern Data Centre Design

Why DC-powered lighting matters for modern data centres

In this exclusive article for DCNN, Ton van de Wiel, Global Segment Manager, Intelligent Buildings at Signify, outlines why DC-powered LED lighting is emerging as a key consideration in making data centre infrastructure more efficient and resilient:

Building resilience from the ground up

The digital services that underpin modern economies – from media streaming to cloud computing – depend on a rapidly expanding global network of data centres. These facilities are not only critical to digital connectivity; they represent significant sources of employment, infrastructure investment, and tax revenue through construction and long-term operation.

Today, data centre operators face a convergence of challenges. Capacity requirements are accelerating due to AI-driven workloads, energy prices are rising, and expectations around sustainability and carbon reduction are becoming more stringent. In response, the industry is re-examining its electrical infrastructure. Direct current (DC) power architectures, once limited to niche applications, are gaining traction as a foundation for higher efficiency and greater operational resilience.

Within this shift, lighting – often treated as a peripheral system – can play a strategic role. DC-powered LED lighting combines high energy efficiency with relatively low implementation risk, making it an effective starting point for broader DC adoption. Beyond energy savings, lighting can also function as an intelligent layer within next-generation data centre infrastructure.

How power architectures are changing

Operating a data centre requires tight coordination between IT equipment, networking, cooling, security, and electrical distribution. Historically, alternating current (AC) has been the default for power distribution. However, as facilities scale and power densities increase, electrical efficiency has become a primary design concern.

Early facilities relied on 48V DC for backup systems – safe but capacity-constrained. This gave way to 230/277V AC distribution, followed by 380V DC for internal systems. Today, the extreme power demands of AI servers are driving another transition towards 650V DC and even 800V DC architectures.

According to the Open Direct Current Alliance (ODCA), 650V DC represents the optimal level for building-wide distribution, balancing efficiency with safety, while organisations such as NVIDIA and the Open Compute Project are investigating 800V DC. Although these higher voltages show promise for high-power IT loads, they do not yet deliver the same system-wide efficiency benefits as a facility-level 650V DC approach.

Outside the data centre sector, industrial sites are already deploying 650V DC systems to improve energy efficiency and resilience. One key advantage is the ability to capture regenerative energy from motor drives and robotics – energy that would otherwise be dissipated as heat. Because lighting is a continuous base load, it can readily absorb this recovered energy, reducing grid dependency and operating costs.

Integrating lighting, motors, renewables, and storage on a shared DC grid reduces conversion losses, cuts copper usage through fewer conductors, and lowers transmission losses compared with 400V AC systems. When paired with solar PV and batteries, DC grids also improve self-consumption, backup capability, and flexible energy management.

What’s driving the move?

The momentum behind DC power in data centres is rooted in both engineering logic and economics:

• Lower conversion losses — Conventional AC systems require multiple conversion steps, resulting in energy losses of up to 18%.

• Alignment with IT equipment — Servers and GPUs operate natively on DC power.

• Simpler renewable integration — Solar panels and battery systems produce DC, enabling more efficient connections.

• Reduced system complexity — Fewer transformers and rectifiers mean simpler installation and improved reliability.

• Preparedness for AI growth — Rising AI workloads are accelerating the shift towards DC-based power systems.

DC power is therefore not just an alternative distribution method, but a pathway to smarter, more resilient infrastructure.
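
The conversion-loss argument comes down to simple arithmetic: the efficiencies of stages connected in series multiply, so several individually efficient stages still compound into a noticeable loss. The sketch below illustrates this. The stage names and efficiency values are hypothetical placeholders chosen for illustration, not figures from the article or from any specific product.

```python
from math import prod

def chain_efficiency(stage_efficiencies):
    """Overall efficiency of conversion stages connected in series."""
    return prod(stage_efficiencies)

# Hypothetical stage efficiencies (assumptions for illustration only).
ac_chain = [0.97, 0.96, 0.96, 0.94]  # e.g. transformer, UPS, rectifier, server PSU
dc_chain = [0.975, 0.97]             # e.g. central rectifier, DC/DC stage at the load

ac_loss = 1 - chain_efficiency(ac_chain)
dc_loss = 1 - chain_efficiency(dc_chain)

print(f"AC chain loss: {ac_loss:.1%}")
print(f"DC chain loss: {dc_loss:.1%}")
```

With these assumed values, the four-stage AC chain loses roughly 16% end to end, while the two-stage DC chain loses a little over 5%. Real figures depend on the equipment and load level.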

Lighting as the first step

Among all building systems, lighting is often the most practical candidate for early DC adoption. Connected LED lighting allows operators to pilot DC distribution with limited risk before extending it to mission-critical IT loads.

The benefits are tangible:

• Capital expenditure savings — DC lighting cables reduce copper use by 40%. Three-conductor DC cables (L+, L-, PE) can transmit the same power as five-conductor 400V three-phase AC cables.

• Operational cost reductions — With only two current-carrying conductors, DC lighting avoids approximately 33% of cable losses compared with three-phase AC at the same current.

• Improved resilience — DC lighting can operate directly from on-site solar generation or battery storage, strengthening microgrid performance during outages.
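
The 40% and 33% figures above can be reproduced with a short calculation. This is a sketch under stated assumptions: all conductors have the same cross-section and resistance, the three-phase AC load is balanced so the neutral carries no current, and the comparison is made at the same current per conductor, as in the text. The current and resistance values are hypothetical.

```python
def conductor_reduction(ac_conductors=5, dc_conductors=3):
    """Fractional reduction in conductor count, assuming equal cross-sections."""
    return 1 - dc_conductors / ac_conductors

def cable_loss_w(current_a, resistance_ohm, current_carrying_conductors):
    """Total I^2 * R loss in watts when each conductor carries the same current."""
    return current_carrying_conductors * current_a ** 2 * resistance_ohm

# Hypothetical values: 10 A per conductor, 0.5 ohm per conductor run.
current_a, resistance_ohm = 10.0, 0.5

ac_loss_w = cable_loss_w(current_a, resistance_ohm, 3)  # three phases, neutral idle
dc_loss_w = cable_loss_w(current_a, resistance_ohm, 2)  # L+ and L-
loss_saving = 1 - dc_loss_w / ac_loss_w

print(f"Conductor reduction: {conductor_reduction():.0%}")
print(f"Cable loss saving at equal current: {loss_saving:.0%}")
```

The saving is independent of the chosen current and resistance, because both cancel out of the ratio; only the number of current-carrying conductors matters under these assumptions.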

DC-compatible luminaires and components are already commercially available. For example, Signify offers a 100W Xitanium LED driver designed for 620–750V DC operation, integrated into the Pacific LED Gen5 and Maxos Fusion luminaire families. These solutions achieve up to 165lm/W efficacy and can be paired with systems such as Signify Interact and Philips Dynalite. Driver-level efficiency can exceed 95%, with future potential to reach 200lm/W through ultra-high-efficiency LED modules.

Sustainability and ESG outcomes

DC-powered lighting supports measurable sustainability objectives:

• Lower carbon emissions through reduced conversion losses and material usage

• Support for certifications such as LEED Zero and BREEAM

• Energy optimisation with connected lighting systems, cutting lighting energy use by up to 75%

For hyperscalers like Amazon Web Services and Microsoft Azure, as well as colocation providers, these outcomes translate directly into stronger ESG reporting and progress towards carbon neutrality.

DC lighting can also be implemented incrementally. Some facilities deploy rack-level DC lighting while retaining an AC backbone. Others adopt facility-wide DC grids that integrate lighting, renewables, storage, and IT infrastructure.

In larger deployments, centralised emergency lighting connected to the DC backbone ensures continuous illumination during outages, reinforcing safety in mission-critical spaces.

A strategic role for lighting

As operators prepare for the next phase of digital expansion, DC-powered lighting offers a practical, high-impact entry point into efficient, renewable-ready DC infrastructure.

Modern connected lighting systems extend far beyond illumination. With embedded sensors measuring occupancy, daylight, temperature, humidity, and air quality, luminaires form a dense, facility-wide sensing network without the need for additional hardware. Using open protocols such as DALI, BACnet, and MQTT, DC lighting networks integrate with building management systems and DCIM platforms, enabling predictive maintenance, enhanced operational intelligence, and optimised cooling and space utilisation.

By simplifying cabling, reducing losses, and enabling intelligent energy management, DC lighting transforms illumination from a passive load into an active contributor to resilient, sustainable data centre operations.

Joe Peck - 16 March 2026

Data Centre Build News & Insights

Data Centre Infrastructure News & Trends

Exclusive

Innovations in Data Center Power and Cooling Solutions

Renewables and Energy: Infrastructure Builds Driving Sustainable Power

Power supply options for data centres

In this exclusive article for DCNN, Tania Arora and James Wyatt, Partners at Baker McKenzie (London), examine the evolving landscape of data centre power supply, highlighting why a tailored approach - blending grid connections, on-site generation, microgrids, and emerging technologies such as SMRs and battery energy storage - is increasingly essential for resilience, sustainability, and commercial optimisation:

No universal solution

Data centres presently require considerable energy resources, with projections indicating a marked increase in their consumption in the coming years. Securing a steady, sufficient, reliable, and scalable power supply is crucial for the financing, operational success, and long-term resilience of any data centre.

A universal strategy does not exist for procuring power for data centres; each project requires a tailored approach. The market offers a wide range of power supply options and these are frequently combined to address the specific requirements of each project. The exact power procurement strategy for each project is determined by several factors, most notably the location of the data centre, local regulatory frameworks, its current and future operational needs, and the strategy of the developer (particularly considering other assets or electricity supply arrangements they own). This article considers power procurement options available in the market and how these could be combined to achieve a successful power supply strategy.

The key power supply options available at present include grid power, on-site or adjacent-site power generation, and microgrids (renewable or conventional), supported by backup generators, battery energy storage systems (BESS), and fuel cells. On-site or adjacent-site nuclear power is increasingly viewed as a panacea for data centre energy needs, although there are still considerable political, technological, and risk-allocation problems to solve.

Data centres usually connect to public electricity grids, but most grids were not designed for their high load. Upgrades and expansions are often needed, which can be time-consuming and expensive. Sometimes, users must pay for these improvements, and further upgrades may be required if the data centre expands. Furthermore, securing a grid connection is rarely guaranteed; capacity reservations may be needed and are often subject to legal conditions.

In some cases, installing on-site generation and microgrids can help address grid challenges. This could involve constructing solar and wind power plants (supported by BESS), gas-fired power stations, and/or combined heat and power (CHP) units adjacent to the data centre and supplying electricity directly without relying on the public grid.

Furthermore, fuel cell and linear generator systems - as well as small modular reactors (SMRs) - are emerging as low-carbon, scalable power solutions for data centres. While the ongoing costs for self-generated energy are generally much lower, building such a dedicated energy infrastructure typically entails significantly higher upfront costs compared to connecting to the public grid. Furthermore, on-site projects are often constrained by space and planning restrictions, particularly in urban or suburban markets where demand is highest.

Sustainable options

Sustainability is a key consideration for a number of data centre market participants. Even if on-site wind or solar energy is economically viable for a project, these renewables alone cannot provide a stable base load due to their intermittency. To ensure base-load coverage, additional infrastructure such as energy storage systems, fuel cells, and conventional backup generators is required.

SMRs and advanced nuclear technologies are emerging as promising solutions for the rising power needs of data centres. They offer reliable, consistent base-load power, load-following capability, scalable output, low carbon emissions, and a small physical footprint. They can operate independently of the grid or alongside renewables and are designed to be more cost-effective and quicker to deploy than traditional large-scale nuclear plants due to modular construction and established supply chains.

SMRs are becoming a tangible reality for data centres. For example, the UK Government recently provided a considerable amount of support for SMRs for data centres through planning reforms, regulatory acceleration, funding, and explicit policy direction encouraging SMR–data‑centre colocation. However, SMRs face challenges: they are largely unproven and most jurisdictions still lack regulatory frameworks tailored to their unique characteristics. Key considerations for deploying SMRs include understanding local nuclear regulations, licensing and approval processes, decommissioning requirements, nuclear waste management, fuel supply security, and site suitability. Addressing these legal and regulatory issues is essential before SMRs can be widely adopted for data centres.

BESS has become a key part of data centre power strategies, serving not only as resilience infrastructure but also helping to unlock commercial opportunities. It provides load shifting and peak shaving, thus reducing exposure to volatile wholesale prices and network charges by charging during low-cost or high-renewable periods and discharging power at peak demand. BESS also delivers instant backup power during outages and enables participation in grid services for additional revenue. Key issues include permitting and safety (especially for large-scale systems near nuclear or high-voltage facilities), complex grid connection agreements, and risk allocation where BESS is delivered via third-party energy-as-a-service contracts.
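
To make load shifting and peak shaving concrete, the sketch below runs a very simple threshold-based dispatch: the battery charges when site load is below a threshold and discharges when load is above it. This is a minimal illustration under loud assumptions: one-hour time steps, no conversion losses, no price signal, and hypothetical load, capacity, and power figures. Real dispatch strategies are contract- and tariff-specific.

```python
def peak_shave(load_kw, threshold_kw, capacity_kwh, max_power_kw, soc_kwh=0.0):
    """Return hourly grid draw (kW) after a simple threshold-based battery dispatch.

    Each entry in load_kw is the average site load over a one-hour step,
    so kW and kWh are numerically interchangeable per step.
    """
    grid_kw = []
    for load in load_kw:
        if load > threshold_kw:
            # Discharge to cover the excess, limited by power rating and stored energy.
            discharge = min(load - threshold_kw, max_power_kw, soc_kwh)
            soc_kwh -= discharge
            grid_kw.append(load - discharge)
        else:
            # Charge using headroom below the threshold, limited by power rating
            # and remaining free capacity.
            charge = min(threshold_kw - load, max_power_kw, capacity_kwh - soc_kwh)
            soc_kwh += charge
            grid_kw.append(load + charge)
    return grid_kw

# Hypothetical hourly site load in kW.
load = [600, 550, 500, 700, 900, 1000, 950, 650]
grid = peak_shave(load, threshold_kw=800, capacity_kwh=400, max_power_kw=250)

print("Peak without BESS:", max(load), "kW")
print("Peak with BESS:   ", max(grid), "kW")
```

In this example the battery runs out of energy before the peak period ends, so the grid peak falls but not all the way to the threshold. That is the kind of sizing trade-off the commercial arrangements around BESS have to account for.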

Final considerations

The near to mid-term future of data centre power lies in combined strategies. Every option in the combination presents its own distinct legal and commercial considerations. Consequently, as strategies become more complex, market participants should anticipate navigating a greater number of legal issues within the context of rapidly evolving regulatory frameworks.

Joe Peck - 18 February 2026

Data Centre Architecture Insights & Best Practices

Data Centre Build News & Insights

Exclusive

Exploring Modern Data Centre Design

Sustainable Infrastructure: Building Resilient, Low-Carbon Projects

The blueprint for tomorrow’s sustainable data centres

In this exclusive article for DCNN, Francesco Fontana, Enterprise Marketing and Alliances Director at Aruba, explores how operators can embed sustainability, flexibility, and high-density engineering into data centre design to meet the accelerating demands of AI:

Sustainable design is now central to AI-scale data centres

The explosive growth of AI is straining data centre capacity, prompting operators to both upgrade existing sites and plan large-scale new-builds. Europe’s AI market, projected to grow at a 36.4% CAGR through 2033, is driving this wave of investment as operators scramble to match demand.

Operators face mounting pressure to address the environmental costs of rapid growth, as expansion alone cannot meet the challenge. The path forward lies in designing facilities that are sustainable by default, while balancing resilience, efficiency, and adaptability to ensure data centres can support the accelerating demands of AI.

The cost of progress

Customer expectations for data centres have shifted dramatically in recent years. The rapid uptake of AI and cloud technologies is fuelling demand for colocation environments that are scalable, flexible, and capable of supporting constantly evolving workloads and managing surging volumes of data.

But this evolution comes at a cost. AI and other compute-intensive applications demand vast amounts of processing power, which in turn places new strains on both energy and water resources. Global data centre electricity usage is projected to reach 1,050 terawatt-hours (TWh) by 2026, which would place data centres among the world’s top five electricity consumers if ranked alongside countries.

This rising consumption has put data centres firmly under the spotlight. Regulators, customers, and the wider public are scrutinising how facilities are designed and operated, making it clear that sustainability can no longer be treated as optional. To meet these new expectations, operators must balance performance with environmental responsibility, rethinking infrastructure from the ground up.

Steps to a next-generation sustainable data centre

1. Embed sustainability from day one

Facilities designed 'green by default' are better placed to meet both operational and environmental goals, and this is why sustainability can’t be an afterthought. This requires renewable energy integration from the outset through on-site solar, hydroelectric systems, or long-term clean power purchase agreements.

Operators across Europe are also committing to industry frameworks like the Climate Neutral Data Centre Pact and the European Green Digital Coalition, ensuring progress is independently verified. Embedding sustainability into the design and operation of data centres not only reduces carbon intensity but also creates long-term efficiency gains that help manage AI’s heavy energy demands.

2. Build for flexibility and scale

Modern businesses need infrastructures that can grow with them. For operators, this means creating resilient IT environments with space and power capacity to support future demand. Offering adaptable options - such as private cages and cross-connects - gives customers the freedom to scale resources up or down, as well as tailor facilities to their unique needs.

This flexibility underpins cloud expansion, digital transformation initiatives, and the integration of new applications - all while helping customers remain agile in a competitive market.

3. Engineer for the AI workload

AI and high-performance computing (HPC) workloads demand far more power and cooling capacity than traditional IT environments, and conventional designs are struggling to keep up.

Facilities must be engineered specifically for high-density deployments. Advanced cooling technologies, such as liquid cooling, allow operators to safely and sustainably support power densities far above 20 kW per rack, essential for next-generation GPUs and other AI-driven infrastructure.

Rethinking power distribution, airflow management, and rack layout ensures high-density computing can be delivered efficiently without compromising stability or sustainability.

4. Location matters

Where a data centre is built plays a major role in its sustainability profile, as regional providers often offer greater flexibility and more personalised services to meet customer needs.

Italy, for example, has become a key destination for new facilities. Its cloud computing market is estimated at €10.8 billion (£9.4 billion) in 2025 and is forecast to more than double to €27.4 billion (£23.9 billion) by 2030, growing at a CAGR of 20.6%. Significant investments from hyperscalers in recent years are accelerating growth, making the region a hotspot for operators looking to expand in Europe.
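
The growth figures above can be cross-checked with the standard compound annual growth rate (CAGR) formula. The sketch below uses only the two market-size figures quoted in the paragraph; the function name is a hypothetical helper.

```python
def cagr(start_value, end_value, years):
    """Compound annual growth rate between two values over a number of years."""
    return (end_value / start_value) ** (1 / years) - 1

# Market size in billions of euros, as quoted for 2025 and 2030.
rate = cagr(10.8, 27.4, 2030 - 2025)
print(f"Implied CAGR: {rate:.1%}")
```

The implied rate comes out at about 20.5%, close to the quoted 20.6%. The small gap is plausibly down to rounding in the published endpoint figures.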

5. Stay compliant with regulations and certifications

Strong regulatory and environmental compliance is fundamental. Frameworks such as the General Data Protection Regulation (GDPR) safeguard data, while certifications like LEED (Leadership in Energy and Environmental Design) demonstrate energy efficiency and environmental accountability.

Adhering to these standards ensures legal compliance, but it also improves operational transparency and strengthens credibility with customers.

Sustainability and performance as partners

The data centres of tomorrow must scale sustainably to meet the demands of AI, cloud, and digital transformation. This requires embedding efficiency and adaptability into every stage of design and operation.

Investment in renewable energy, such as hydro and solar, will be crucial to reducing emissions. Equally, innovations like liquid cooling will help manage the thermal loads of compute-heavy AI environments. Emerging technologies - including agentic AI systems that autonomously optimise energy use and breakthroughs in quantum computing - promise to take efficiency even further.

In short, sustainability and performance are no longer competing objectives; together, they form the foundation of a resilient digital future where AI can thrive without compromising the planet.

Joe Peck - 2 January 2026

Data Centre Infrastructure News & Trends

Exclusive

Innovations in Data Center Power and Cooling Solutions

Liquid Cooling Technologies Driving Data Centre Efficiency

CDUs: The brains of direct liquid cooling

As air cooling reaches its limits with AI and HPC workloads exceeding 100 kW per rack, hybrid liquid cooling is becoming essential. To this end, coolant distribution units (CDUs) could be the key enabler for next-generation, high-density data centre facilities.

In this article for DCNN, Gordon Johnson, Senior CFD Manager at Subzero Engineering, discusses further the importance of CDUs in direct liquid cooling:

Cooling and the future of data centres

Traditional air cooling has hit its limits, with rack power densities surpassing 100 kW due to the relentless growth of AI and high-performance computing (HPC) workloads. Already, CPUs and GPUs draw 700–1000 W or more per socket, and projections suggest this will rise to over 1500 W.

Fans and heat sinks are just unable to handle these thermal loads at scale. Hybrid cooling strategies are becoming the only scalable, sustainable path forward.

Single-phase direct-to-chip (DTC) liquid cooling has emerged as the most practical and serviceable solution, delivering coolant directly to cold plates attached to processors and accelerators. However, direct liquid cooling (DLC) cannot be scaled safely or efficiently with plumbing alone. The key enabler is the coolant distribution unit (CDU), a system that integrates pumps, heat exchangers, sensors, and control logic into a coordinated package.

CDUs are often mistaken for passive infrastructure. In reality, they act as the brains of DLC, orchestrating isolation, stability, adaptability, and efficiency to make DTC viable at data centre scale. They serve as the intelligent control layer for the entire thermal management system.

Intelligent orchestration

CDUs do a lot more than just transport fluid around the cooling system; they think, adapt, and protect the liquid cooling portion of the hybrid cooling system. They maintain redundancy to ensure continuous operation, control flow and pressure using automated valves and variable speed pumps, filter particulates to protect cold plates, and keep coolant temperature above the dew point to prevent condensation. In doing so, they contribute to the precise, intelligent, and flexible coordination of the complete thermal management system.
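
The dew-point constraint can be sketched numerically. The example below estimates the room dew point with the Magnus approximation and checks whether a coolant supply temperature stays above it. The function names, the 2 °C safety margin, and the room conditions are assumptions made for illustration; they do not describe any particular CDU's control logic.

```python
import math

def dew_point_c(air_temp_c, relative_humidity_pct):
    """Approximate dew point in deg C using the Magnus formula."""
    a, b = 17.62, 243.12  # commonly used Magnus coefficients over water
    gamma = math.log(relative_humidity_pct / 100.0) + a * air_temp_c / (b + air_temp_c)
    return b * gamma / (a - gamma)

def supply_is_condensation_safe(coolant_supply_c, air_temp_c,
                                relative_humidity_pct, margin_c=2.0):
    """True if the coolant supply is at least margin_c above the room dew point."""
    return coolant_supply_c >= dew_point_c(air_temp_c, relative_humidity_pct) + margin_c

# Hypothetical room conditions: 25 deg C at 50% relative humidity.
room_dew_point = dew_point_c(25.0, 50.0)
print(f"Room dew point: {room_dew_point:.1f} C")
print("18 C supply safe:", supply_is_condensation_safe(18.0, 25.0, 50.0))
print("14 C supply safe:", supply_is_condensation_safe(14.0, 25.0, 50.0))
```

Under these conditions the dew point is just under 14 °C, so an 18 °C supply clears the assumed margin while a 14 °C supply does not.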

Because of their greater cooling capacity, CDUs are ideal for large HPC data centres. However, because they must be connected to the facility's chilled water supply or another heat rejection source to continuously provide liquid to the cold plates for cooling, they can be complicated.

CDUs typically fall into two categories:

• Liquid to Liquid (L2L): High-capacity L2L CDUs are well suited to large HPC facilities. Through heat exchangers, they move chip heat into an isolated chilled water loop, such as the facility water system (FWS).

• Liquid to Air (L2A): For smaller deployments, L2A CDUs are simpler but have a lower cooling capacity. Rather than relying on a chilled water supply or FWS, they use liquid-to-air heat exchangers to transfer heat from the coolant returning from the cold plates to the surrounding data centre air, where conventional HVAC systems handle it.

Isolation: Safeguarding IT from facility water

CDUs act as the bridge between the FWS and the dedicated technology cooling system (TCS), which provides filtered liquid coolant directly to the chips via cold plates. They isolate sensitive server cold plates from external variability, ensuring a safe and stable environment while constantly adjusting to shifting workloads.

One of L2L CDUs' primary functions is to create a dual-loop architecture:

• Primary loop (facility side): Connects to building chilled water, district cooling, or dry coolers

• Secondary loop (IT side): Delivers conditioned coolant directly to IT racks

CDUs isolate the primary loop (which may carry contaminants, particulates, scaling agents, or chemical treatments like biocides and corrosion inhibitors - chemistry that is incompatible with IT gear) from the secondary loop. As well as preventing corrosion and fouling, this isolation gives operators the safety margin they need for board-level confidence in liquid cooling.

The integrity of the server cold plates is safeguarded by the CDU, which uses a heat exchanger to separate the two environments and maintain a clean, controlled fluid in the IT loop. Because CDUs are fitted with variable speed pumps, automated valves, and sensors, they can dynamically adjust the flow rate and pressure of the TCS to ensure optimal cooling even when HPC workloads change.

Stability: Balancing thermal predictability with unpredictable loads

HPC and AI workloads are not only high power; they are also volatile. GPU-intensive training jobs or changeable CPU workloads can cause high-frequency power swings, which - without regulation - would translate into thermal instability. The CDU mitigates this risk by controlling temperature, pressure, and flow across all racks and nodes, absorbing dynamic changes and delivering predictable thermal conditions.

It does this regardless of how erratic the workload is. Sensor arrays keep the cooling loop within specification, variable speed pumps modify flow to fit demand, and heat exchangers are calibrated to maintain an established approach temperature.
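
The link between heat load and the flow a pump must deliver follows from the heat balance Q = ṁ · cp · ΔT. The sketch below estimates the coolant flow needed for a given rack load. It assumes plain water as the coolant and a hypothetical 10 K temperature rise across the rack; real deployments often use glycol mixtures with different properties and other design temperature rises.

```python
WATER_CP_J_PER_KG_K = 4186.0     # specific heat of water (approximate)
WATER_DENSITY_KG_PER_M3 = 997.0  # density of water near 25 deg C (approximate)

def required_flow_lpm(heat_load_kw, delta_t_k):
    """Coolant flow in litres per minute needed to absorb heat_load_kw
    with a coolant temperature rise of delta_t_k, assuming water."""
    mass_flow_kg_s = heat_load_kw * 1000.0 / (WATER_CP_J_PER_KG_K * delta_t_k)
    volume_flow_m3_s = mass_flow_kg_s / WATER_DENSITY_KG_PER_M3
    return volume_flow_m3_s * 1000.0 * 60.0  # m^3/s -> L/min

# Hypothetical rack loads with an assumed 10 K coolant temperature rise.
for load_kw in (50, 100, 150):
    print(f"{load_kw} kW rack: {required_flow_lpm(load_kw, 10.0):.0f} L/min")
```

Flow scales linearly with load at a fixed temperature rise, which is why a variable speed pump can track a fluctuating workload by adjusting flow rather than supply temperature.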

Adaptability: Bridging facility constraints with IT requirements

The thermal architecture of data centres varies widely, with some using warm-water loops that operate at temperatures between 20 and 40°C. The CDU accommodates these differences by adjusting secondary loop conditions to align IT requirements with the facility. It uses mixing or bypass control to temper supply water, can alternate between tower-assisted cooling, free cooling, or dry cooler rejection depending on the environmental conditions, and can adjust flow distribution amongst racks to align with real-time demand.

This adaptability makes DTC deployable in a variety of infrastructures without requiring extensive facility renovations. It also makes it possible for liquid cooling to be phased in gradually - ideal for operators who need to make incremental upgrades.

Efficiency: Enabling sustainable scale

Beyond risk and reliability, CDUs unlock possibilities that make liquid cooling a sustainable option.

By managing flow and temperature, CDUs eliminate the inefficiencies of over-pumping and over-cooling. They also maximise scope for free cooling and heat recovery integration, such as connecting to district heating networks and reclaiming waste heat as a revenue stream or sustainability benefit. This allows operators to lower PUE (Power Usage Effectiveness) to values below 1.1 while simultaneously reducing WUE (Water Usage Effectiveness) by minimising evaporative cooling, all while meeting the extreme thermal demands of AI and HPC workloads.
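
For readers less familiar with the two metrics, PUE is total facility energy divided by IT equipment energy, and WUE is site water use divided by IT equipment energy, usually expressed in litres per kWh. The sketch below computes both. The annual energy and water figures are hypothetical, chosen only to show what a PUE below 1.1 looks like in numbers.

```python
def pue(total_facility_kwh, it_equipment_kwh):
    """Power Usage Effectiveness: total facility energy / IT equipment energy."""
    return total_facility_kwh / it_equipment_kwh

def wue(site_water_litres, it_equipment_kwh):
    """Water Usage Effectiveness in litres per kWh of IT equipment energy."""
    return site_water_litres / it_equipment_kwh

# Hypothetical annual figures for a single facility.
it_kwh = 10_000_000
facility_kwh = 10_800_000   # IT load plus cooling, power distribution, lighting
water_litres = 2_000_000

print(f"PUE: {pue(facility_kwh, it_kwh):.2f}")
print(f"WUE: {wue(water_litres, it_kwh):.2f} L/kWh")
```

A PUE of 1.08 here means that for every kWh delivered to IT equipment, only 0.08 kWh goes to everything else in the facility.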

CDUs as the thermal control plane

Viewed holistically, CDUs are far more than pumps and pipes; they are the control plane for thermal management, orchestrating safe isolation, dynamic stability, infrastructure adaptability, and operational efficiency.

They translate unpredictable IT loads into manageable facility-side conditions, ensuring that single-phase DTC can be deployed at scale and enabling HPC and AI data centres to move to multi-hundred-kilowatt racks without thermal failure.

Without CDUs, direct-to-chip cooling would be risky, uncoordinated, and inefficient. With CDUs, it becomes an intelligent and resilient architecture capable of supporting 100 kW (and higher) racks as well as the escalating thermal demands of AI and HPC clusters.

As workloads continue to climb and rack power densities surge, the industry’s ability to scale hinges on this intelligence. CDUs are not a supporting component; they are the enabler of single-phase DTC at scale and a cornerstone of the future data centre.

For more from Subzero Engineering, click here.

Joe Peck - 4 November 2025


Rewiring the data centre

In this exclusive article for DCNN, Will Stewart, Global Senior Industry Segment Manager Lead, Smart Infrastructure and Mobility at HARTING, explores how modularity, power density, and sustainability are converging to redefine how facilities are built, cooled, and scaled:

Building smarter infrastructure for the AI age

Artificial intelligence (AI) has moved from hype to headline, impacting everything from health diagnostics to financial analysis. While the public marvels at AI breakthroughs, the engines powering these advances - the world’s data centres - face growing, behind-the-scenes challenges. As organisations expand their AI capabilities, the energy needed to support modern computing infrastructure is rising at an unprecedented rate.

Current research projects that global data centre power demand will increase by 50% as soon as 2027 and 165% by 2030, with much of this surge attributed to AI workloads’ explosive growth. Already, data centres account for approximately 2% of worldwide electricity consumption and forecasts suggest this share will continue its upward march. The resulting strain extends beyond server rooms; it is currently reshaping energy supply chains, policy priorities, and environmental strategy across industries.

Rising to the infrastructure challenge

Serving next-generation, AI-driven applications requires a dramatic rethink of traditional data centre design. Historically, a data centre’s infrastructure balanced a mix of physical and virtual resources - servers, storage, networking, power distribution units, cooling systems, security protocols, and supporting elements like racks and fire suppression - all engineered for reliability and uptime.

AI’s energy-hungry, compute-intensive tasks have shattered these historical balances. Data centres today must deliver far more power to denser racks, operate reliably under heavier loads, and deploy new capacity at speeds unimaginable even a decade ago. These requirements are putting immense pressure on every inch of physical infrastructure, from the electrical grid connection to the server cabinet.

Navigating power and cooling demands

One of the most acute challenges arises from escalating power and cooling needs. Where historical rack architectures required 16 or 32A, current designs push 70, 100, or even 200A, often in the same amount of physical rack space. These steep increases not only generate more heat, but also require thicker, less flexible power cabling, raising new problems for deployment and ongoing maintenance.
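To put those currents in perspective, the sketch below converts them to apparent power and shows how resistive heating in a fixed conductor scales with the square of the current. The article does not state a supply voltage, so the 400 V three-phase feed is an assumption, as are the function names.

```python
import math


def three_phase_kva(current_a: float, line_voltage_v: float = 400.0) -> float:
    """Apparent power of a balanced three-phase feed: sqrt(3) * V_line * I."""
    return math.sqrt(3) * line_voltage_v * current_a / 1000.0


def relative_conductor_loss(current_a: float, reference_a: float) -> float:
    """I^2 * R heating in the same conductor, relative to a reference current."""
    return (current_a / reference_a) ** 2


# Currents quoted in the article; the 400 V default is an assumption.
for amps in (16, 32, 70, 100, 200):
    kva = three_phase_kva(amps)
    loss = relative_conductor_loss(amps, reference_a=32)
    print(f"{amps:>3} A: {kva:6.1f} kVA, heating {loss:5.1f}x the 32 A case")
```

The quadratic term explains the cabling problem: carrying 200A through a conductor sized for 32A would produce roughly 39 times the heat, so cross-sections have to grow and cables become stiffer and harder to route.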

Efficiently removing heat from ever-denser configurations is a major engineering feat. Next-generation cooling technologies - ranging from liquid cooling to full-system immersion - are becoming essential rather than optional. At the same time, every connection point and cable run becomes a potential source of inefficiency or risk. Operators can no longer afford energy loss, heat generation, or even the downtime that results from outdated power distribution or poorly optimised layouts.

The space and scalability constraint

AI workloads are increasingly mission-critical. Even short interruptions in data centre uptime can lead to significant financial loss or damaging outages for users and services. With power loads climbing fast and every square foot optimised, the need for trustworthy, quickly serviceable infrastructure grows more urgent. Reliable system operation is now a defining competitive factor for data centre operators.

To complicate matters further, capacity needs are accelerating, but available space remains finite. In many regions, the cost and scarcity of real estate forces data centres to pack as much compute and power as possible into smaller footprints. As higher-power architectures proliferate, the infrastructure supporting them - from power to networking - must become more compact and adaptable, maintaining robust operation without sacrificing maintainability or safety.

Because new workloads can spike unpredictably, data centre leaders now require infrastructure that can be rapidly scaled up, upgraded, or reconfigured, sometimes within days rather than months. The traditional model of labour-intensive rewiring is proving unsustainable in today’s emerging reality.

Sustainability in the spotlight

Environmental scrutiny from regulators, investors, and end-users places data centres at the heart of the global decarbonisation agenda. Facilities must now integrate renewable energy, maximise electrical efficiency, and minimise overall carbon footprints while delivering more power each year. But achieving these goals demands holistic change from energy procurement and grid strategy down to every connector, cable, and cooling loop inside the facility.

The challenges of the AI era are being met with new ideas at every level of the data centre: smarter building management systems now orchestrate lighting, thermal control, and energy use with unprecedented efficiency; cooling technologies are evolving quickly, as operators push beyond the limits of traditional air-based systems; advanced power distribution and grid connectivity solutions are enabling better load balancing, more reliable energy supply, and easier renewable integration.

Within this broad transformation, the move towards modular, plug-and-play connections - sometimes called connectorisation - is having a dramatic impact. Hardwired installations are slow to deploy, hard to scale and maintain, and dependent on specialised labour that is often unavailable. Connectorised infrastructure, by contrast, supports pre-assembled, pre-tested units that can be installed in days rather than weeks by the workforce already available on-site. This not only gets new capacity online faster, but also reduces the opportunity for error, simplifies expansions, and supports higher power throughput within constrained spaces.

Connectors designed for current and future demands minimise heat and energy loss, enhance reliability, and simplify upgrades. Maintenance is easier and faster, with less need for specialised expertise and less operational downtime. These modular technologies are also helping data centres better optimise their architecture, manage complex workloads, and future-proof their operations.

Cooperation and adaptation in a complex landscape

Modernising data centre infrastructure is not simply a technical challenge, but one that requires broad collaboration between technology vendors, utilities, cloud providers, regulators, and policymakers. Federal incentives, innovative funding, and public-private partnerships are all working in support of grid modernisation efforts, while the need for flexibility in design and operation allows data centres to adapt to regional differences in energy supply, regulation, and demand.

While AI has redefined what is possible, it has also redefined what is required behind the scenes. Data centre infrastructure must evolve rapidly - becoming not only larger, but smarter, faster, and greener. Every connection system and square foot now counts in the race to keep up with exponential demand.

For more from HARTING, click here.

Joe Peck - 22 October 2025


Rethinking infrastructure for the AI era

In this exclusive article for DCNN, Jon Abbott, Technologies Director, Global Strategic Clients at Vertiv, explains how the challenge for operators is no longer simply maintaining uptime; it’s adapting infrastructure fast enough to meet the unpredictable, high-intensity demands of AI workloads:

Built for backup, ready for what’s next

Artificial intelligence (AI) is changing how facilities are built, powered, cooled and secured. The industry is now facing hard questions about whether existing infrastructure, which has been designed for traditional enterprise or cloud loads, can be successfully upgraded to support the pace and intensity of AI-scale deployments.

Data centres are being pushed to adapt quickly and the pressure is mounting from all sides: from soaring power densities to unplanned retrofits, and from tighter build timelines to demands for grid interactivity and physical resilience. What’s clear is that we’ve entered a phase where infrastructure is no longer just about uptime; instead, it’s about responsiveness, integration, and speed.

The new shape of demand

Today’s AI systems don’t scale in neat, predictable increments; they arrive with sharp step-changes in power draw, heat generation, and equipment footprint. Racks that once averaged under 10kW are being replaced by those consuming 30kW, 40kW, or even 80kW - often in concentrated blocks that push traditional cooling systems to their limits.

This represents a physical problem. Heavier and wider AI-optimised racks require new planning for load distribution, cooling systems design, and containment. Many facilities are discovering that the margins they once relied on - in structural tolerance, space planning, or energy headroom - have already evaporated.

Cooling strategies, in particular, are under renewed scrutiny. While air cooling continues to serve much of the IT estate, the rise of liquid-cooled AI workloads is accelerating. Rear-door heat exchangers and direct-to-chip cooling systems are no longer reserved for experimental deployments; they are being actively specified for near-term use. Most of these systems do not replace air entirely, but work alongside it. The result is a hybrid cooling environment that demands more precise planning, closer system integration, and a shift in maintenance thinking.
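A back-of-the-envelope heat balance helps show why liquid is being specified for the densest racks. The sketch below uses the sensible-heat relation (heat = density × volumetric flow × specific heat × temperature rise) to estimate the flow needed to carry away a rack's heat in air and in water. The fluid properties are rounded textbook values and the temperature rises are assumptions, not figures from the article.

```python
def volumetric_flow_m3_s(heat_kw: float, density_kg_m3: float,
                         cp_j_per_kg_k: float, delta_t_k: float) -> float:
    """Flow needed to absorb heat_kw with a coolant temperature rise of delta_t_k."""
    return heat_kw * 1000.0 / (density_kg_m3 * cp_j_per_kg_k * delta_t_k)


# Rounded property values (assumptions): (density in kg/m^3, specific heat in J/(kg*K)).
AIR = (1.2, 1005.0)
WATER = (1000.0, 4186.0)

# Rack powers in the range the article mentions; temperature rises are illustrative.
for rack_kw in (10, 40, 80):
    air = volumetric_flow_m3_s(rack_kw, *AIR, delta_t_k=12.0)
    water = volumetric_flow_m3_s(rack_kw, *WATER, delta_t_k=10.0)
    print(f"{rack_kw:>2} kW: air {air:5.2f} m^3/s, water {water * 1000:4.2f} L/s")
```

Under these assumptions an 80kW rack needs several cubic metres of air per second but only around two litres of water per second, which is why direct-to-chip loops handle the hottest components while air deals with the remainder.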

Deployment cycles are falling behind

One of the most critical tensions AI introduces is the mismatch between innovation cycles and infrastructure timelines. AI models evolve in months, but data centres are typically built over years. This gap creates mounting pressure on procurement, engineering, and operations teams to find faster, lower-risk deployment models.

As a result, there is increasing demand for prefabricated and modular systems that can be installed quickly, integrated smoothly, and scaled with less disruption. These approaches are not being adopted to reduce cost; they are being adopted to save time and to de-risk complex commissioning across mechanical and electrical systems.

Integrated uninterruptible power supply (UPS) and power distribution units, factory-tested cooling modules, and intelligent control systems are all helping operators compress build timelines while maintaining performance and compliance. Where operators once sought redundancy above all, they are now prioritising responsiveness as well as the ability to flex infrastructure around changing workload patterns.

Security matters more when the stakes rise

AI infrastructure is expensive, energy-intensive, and often tied to commercially sensitive operations. That puts physical security firmly back on the agenda - not only for hyperscale operators, but also for enterprise and colocation facilities managing high-value compute assets.

Modern data centres are now adopting a more layered approach to physical security. It begins with perimeter control, but extends through smart rack-level locking systems, biometric or multi-factor authentication, and role-based access segmentation. For some facilities - especially those serving AI training operations - real-time surveillance and environmental alerting are being integrated directly into operational platforms. The aim is to reduce blind spots between security and infrastructure and to help identify risks before they interrupt service.

The invisible fragility of hybrid environments

One of the emerging risks in AI-scale facilities is the unintended fragility created by multiple overlapping systems. Cooling loops, power chains, telemetry platforms, and asset tracking tools all work in parallel, but without careful integration, they can fail to provide a coherent operational picture.

Hybrid cooling systems may introduce new points of failure that are not always visible to standard monitoring tools. Secondary fluid networks, for instance, must be managed with the same criticality as power infrastructure. If overlooked, they can become weak points in otherwise well-architected environments. Likewise, inconsistent commissioning between systems can lead to drift, incompatibility, and inefficiency.

These challenges are prompting many operators to invest in more integrated control platforms that span thermal, electrical, and digital infrastructure. The goal is to see issues as they arise and act on them quickly - to re-balance loads, adapt cooling, or respond to anomalies in real time.

Power systems are evolving too

As compute densities rise, so too does energy consumption. Operators are looking at how backup systems can do more than sit idle: UPS fleets are being turned into grid-support assets. Demand response and peak shaving programmes are becoming part of energy strategy. Many data centres are now exploring microgrid models that incorporate renewables, fuel cells, or energy storage to offset demand and reduce reliance on volatile grid supply.
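Peak shaving can be pictured with a very small simulation: whenever site load exceeds a chosen grid limit, stored energy covers the difference until the store runs out. This is a simplified sketch with hypothetical numbers; it ignores recharging, inverter limits, efficiency losses, and the reserve a UPS must keep for its backup duty.

```python
def peak_shave(load_kw, grid_limit_kw, battery_kwh, step_h=1.0):
    """Cap grid draw at grid_limit_kw by discharging stored energy.

    Returns the grid draw for each time step and the energy left in the store.
    """
    grid_draw = []
    remaining_kwh = battery_kwh
    for load in load_kw:
        excess_kw = max(0.0, load - grid_limit_kw)
        # Discharge is limited by the energy still available in this step.
        discharge_kw = min(excess_kw, remaining_kwh / step_h)
        remaining_kwh -= discharge_kw * step_h
        grid_draw.append(load - discharge_kw)
    return grid_draw, remaining_kwh


# Hypothetical hourly load profile in kW, a 1,000 kW grid limit, and 250 kWh of storage.
draw, left = peak_shave([800, 950, 1200, 1100, 900], grid_limit_kw=1000, battery_kwh=250)
print(draw)  # [800.0, 950.0, 1000.0, 1050.0, 900.0]
print(left)  # 0.0
```

In this example the store is exhausted partway through the peak, so the fourth hour still exceeds the limit. Sizing storage against the expected peak, not just the limit, is the design question such a model is meant to expose.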

What all of this reflects is a shift in mindset. Infrastructure is no longer a fixed investment; it is a dynamic capability - one that must scale, flex, and adapt in real time. Operators who understand this are the best placed to succeed in a fast-moving environment.

From resilience to responsiveness

The old model of data centre resilience was built around failover and redundancy. Today, resilience also means responsiveness: the ability to handle unexpected load spikes, adjust cooling to new workloads, maintain uptime under tighter energy constraints, and secure physical systems across more fluid operating environments.

This shift is already reshaping how data centres are specified, how vendors are selected, and how operators evaluate return on infrastructure investment. What once might have been designed in isolated disciplines - cooling, power, controls, access - is now being engineered as part of a joined-up, system-level operational architecture. Intelligent data centres are not defined by their scale, but by their ability to stay ahead of what’s coming next.

For more from Vertiv, click here.

Joe Peck - 21 October 2025


Pressing challenges impacting the future of US data centres

In this exclusive article for DCNN, Matt Coffel, Chief Commercial and Innovation Officer at Mission Critical Group (MCG), explores how growth in the US data centre market is being affected by increasing challenges, demanding new levels of collaboration and innovation across the sector:

An increasingly omnipresent industry

Data centres and related facilities are everywhere today. Synergy Research Group states hyperscalers account for 44% of those facilities worldwide, while non-hyperscale colocation and on-premise account for 22% and 34% respectively. They also project that by 2030, hyperscalers will account for 61% of all data centres and related facilities.

While there are no definitive estimates on how many of these facilities will be constructed in the US in the coming years, planning and development in the country are happening faster than ever before. Take, for example, the recent developments in Pennsylvania regarding investment in data centres and other technology infrastructure to support AI, including significant investments from Amazon and CoreWeave.

With AI adoption surging and data generation accelerating in sectors like healthcare, financial services, and the federal government, the number of data centres is only set to grow. But as demand rises, so too do the obstacles. Data centre operators and their partners face mounting challenges that threaten timelines, drive up costs, and complicate efforts to scale efficiently - and there are no easy fixes.

Persistent and critical challenges

When it comes to the construction of a data centre, there are many factors to consider. However, four factors have emerged as critical challenges for data centre operators and their partners: permitting, power, skilled talent, and compute.

1. Regulation: Securing permits to build and operate

Even as demand for compute and power accelerates, operators are forced to navigate lengthy and often inconsistent approval processes. In some jurisdictions, permitting can take two to three years before projects can even break ground. These delays put US developers at a disadvantage compared to countries with more streamlined regulatory systems.

The challenge is compounded by the sheer scale of today’s facilities. Projects promising multiple gigawatts of capacity require not only land and power, but also regulatory sign-off on issues such as environmental impact, emissions, and noise. These reviews often involve multiple parties - utilities, consultants, environmental specialists, and local governments - which makes coordination slow and uncertain. Moreover, the difficulty varies widely by location. In cities like Austin in Texas, approvals can be tough to secure, while just miles outside the city limits, the process may move much faster.

2. Time to power

According to the International Energy Agency’s (IEA) Energy and AI report, “Power consumption by data centres is on course to account for almost half of the growth in electricity demand between now and 2030 in the US.”

This rising demand is evident in Northern Virginia, where a large cluster of data centres has been built over the past twenty years. With this cluster of data centres in a single area, along with the demands from AI and data processing loads, power substations have reached maximum capacity. This has forced utility providers and data centre operators to either bring in new lines from distant locations - where there is excess power, but transmission infrastructure is lacking - or to build new data centres in rural areas so they can access untapped power.

Yet building new transmission lines from other locations or setting up data centres in rural areas can take years, and neither option addresses the current power demand.

3. Access to skilled talent to support current and future projects

Data centre operators and their partners are working to build new facilities across the US, often in remote parts such as Western Texas, where they can access untapped power sources. Building in these areas introduces several challenges related to skilled labour.

Building and maintaining a data centre requires highly skilled electricians, mechanics, and controls specialists who can handle complex electrical and mechanical systems and often on-site power generation. However, the US faces a nationwide shortage of these workers. The US Bureau of Labor Statistics forecasts that employment for electrical workers will grow by 11% from 2023 to 2033 - a much faster rate than the average for all jobs. Still, many electricians are nearing retirement and set to leave the field in the coming years. This is likely to create a gap that operators will find difficult to fill as they work to build and keep their facilities running.

Additionally, data centre operators and their partners face the reality that many skilled workers are unwilling to live far from population centres.

Recent estimates from Goldman Sachs Research underscore the scale of this challenge. It projects that the US will require 207,000 more transmission and interconnection workers and 300,000 extra jobs in power technologies, manufacturing, construction, and operations to support the additional power consumption needs projected for the US by 2030.

This dual challenge of labour scarcity and logistical complexity is making traditional, on-site construction methods increasingly untenable. As a result, the industry is pivoting towards prefabricated, modular power solutions that are engineered and assembled in a controlled factory environment. This approach mitigates the impact of localised labour shortages by capitalising on a centralised, highly skilled workforce and deploying nearly complete, pre-tested power modules to the remote data centre location for rapid and simplified final installation.

4. The accelerating pace of change in compute technology

The speed at which compute technology is evolving has reached an unprecedented level, putting enormous pressure on data centre operators and their partners.

Moore’s Law is no longer the standard; today’s compute configurations are far more advanced than ever before, with denser platforms being released every 12 to 18 months. This rapid cycle forces operators to rethink how they design and future-proof facilities - leveraging concepts such as modularisation - as infrastructure built just a few years ago can quickly fall behind.

The need for collaboration

Each of these challenges is significant on its own, but together they mark one of the most complex periods in the history of infrastructure development. To move forward, data centre operators, utilities, manufacturers, technology providers, and government agencies must work closely to identify solutions and provide support for each obstacle.

On the skilled labour front, companies outside the manufacturing space are also contributing. Earlier this year, Google pledged support to train 100,000 electrical workers and 30,000 new apprentices in the US. This funding was awarded to the electrical training ALLIANCE (etA), the largest apprenticeship and training program of its kind, founded by the International Brotherhood of Electrical Workers and NECA.

State leaders are playing a role as well. In Pennsylvania this summer, for example, the governor and other legislators have demonstrated strong support for data centre growth.

MCG, a manufacturer and integrator of power and electrical systems, is one example of how industry players are stepping up. MCG designs, manufactures, delivers, and services systems tailored for data centre operators and other mission critical environments.

In collaboration with operators and other equipment and technology providers, MCG produces modular power systems that are built off-site to ease workforce constraints. These systems are then delivered directly to data centres or their power facilities, where the MCG team commissions and maintains them.

With efforts from government officials, companies like MCG and Google, and other stakeholders, the US data centre industry can continue powering the digital future - no matter how much demand for power and compute increases.

For more from Mission Critical Group, click here.

Joe Peck - 17 October 2025


Why resilient cooling systems are critical to reliability

In this exclusive article for DCNN, Dean Oliver, Area Sales Manager Commercial (South and London areas) at Spirotech, explores why uninterrupted operation of data centres - 24 hours a day, 365 days a year - is no longer optional, but essential. To achieve this, he believes robust backup systems, advanced infrastructure, and precision cooling are fundamental:

The importance of data

In today's digitally driven economy, data is the backbone of intelligent business decisions. From individuals and startups to multinational corporations and financial institutions, the protection of personal and commercial information is more vital than ever.

The internet sparked a technological revolution that has continued to accelerate - ushering in innovations like cryptocurrencies and, more recently, the powerful rise of artificial intelligence (AI). While these developments are groundbreaking, they also highlight the need for caution and infrastructure readiness.

For most users, the importance of data centres only becomes clear when systems fail. A 30-minute outage can bring parts of the economy to a halt. If banks can’t process transactions, the consequences are immediate and widespread.

Data breaches can have a significant impact on businesses, both operationally and financially. This year alone, several high-profile companies have been targeted. Marks & Spencer, for example, reportedly suffered losses of around £300 million over a six-week period following a cyberattack.

These and other companies affected by such problems underline just how dependent our society is on digital infrastructure. Cyberattacks, like denial-of-service (DoS) assaults, are a real and growing threat.

But even without malicious intent, data centres must operate flawlessly, with zero downtime. Central to this is thermal management, including cooling systems that maintain optimal conditions to prevent system failure.

Why cooling is key

Data centres generate significant heat due to dense arrays of servers and network hardware. If temperatures are not precisely controlled, systems risk shutdown, data corruption, or permanent loss - an unacceptable risk for any organisation.

Cooling solutions are mission-critical. Given the security and performance demands on data centres, there’s no room for error. Cutting corners to save on cost can have catastrophic consequences.

That’s why careful planning at the design stage is essential. This should factor in redundancy for all key components: chillers, pumps, pressurisation units, and more. Communication links between these systems must also be integrated to ensure coordinated operation.

The equation is simple: the more computing power you deploy, the greater the cooling demand. Cloud infrastructure consumes enormous amounts of energy and space, requiring tens of megawatts of power and covering thousands of square metres.

If the cooling system fails - whether from chiller malfunction or control breakdown - data loss on a massive scale becomes a very real possibility.

That’s why backup systems must be immediately responsive, guaranteeing continued operation under any condition.

Keeping systems operating

Today, innovative control systems, like those offered by Spirotech, provide detailed insights into system performance and capture operational data from pumps, valves, pressurisation units, and vacuum degassers. This enables early detection of potential issues and provides trend analysis to support proactive maintenance.

For example, vacuum degassers can show how much air has been removed over time, while pressurisation units monitor pressure levels, leak events, and top-up activity. These systems work in tandem, ensuring balance and continuity. If a fault occurs, alerts are instantly dispatched to relevant personnel.

A poorly designed or maintained pressurisation system can result in negative pressure, leading to air ingress via vents and seals - or, conversely, excessive pressure that causes water discharge and frequent refills.
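The kind of checks described above can be sketched as two small rules: classify a pressure reading against low and high limits, and flag top-ups that cluster too closely in time. Every name and threshold here is hypothetical and chosen only for illustration; it is not a description of any vendor's control logic.

```python
from dataclasses import dataclass


@dataclass
class PressureLimits:
    low_bar: float   # below this, air may be drawn in through vents and seals
    high_bar: float  # above this, water may be discharged and refills become frequent


def classify_pressure(reading_bar: float, limits: PressureLimits) -> str:
    """Return 'low', 'high', or 'ok' for a single pressure reading."""
    if reading_bar < limits.low_bar:
        return "low"
    if reading_bar > limits.high_bar:
        return "high"
    return "ok"


def frequent_top_ups(top_up_hours, window_h: float, max_events: int) -> bool:
    """True if more than max_events top-ups fall within any window_h-hour span."""
    times = sorted(top_up_hours)
    start = 0
    for end, t in enumerate(times):
        # Slide the window start forward until it spans at most window_h hours.
        while t - times[start] > window_h:
            start += 1
        if end - start + 1 > max_events:
            return True
    return False


limits = PressureLimits(low_bar=1.0, high_bar=3.0)   # illustrative limits only
print(classify_pressure(0.8, limits))                 # low
print(frequent_top_ups([1, 5, 9, 30], window_h=24, max_events=2))  # True
```

Repeated top-ups inside a short window often point to a leak or a relief valve lifting, which is why trending the events matters as much as the instantaneous reading.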

Air and dirt separators are also crucial to system health, preventing build-up and ensuring smooth operation across all pipework and components.

Conclusion

Effective cooling is essential for data centre systems due to the high demands on security and performance; there's no tolerance for failure. Inadequate or poorly designed cooling can lead to disastrous outcomes, including potential large-scale data loss.

To prevent this, detailed planning during the design phase is crucial. This includes building in redundancy for all major components like chillers, pumps, and pressure units, and ensuring these systems can communicate and function together reliably.

As computing capacity increases, so does the need for robust cooling. Modern cloud infrastructure uses vast amounts of power and physical space, placing even greater stress on cooling requirements.

Therefore, backup systems must be fast-acting and fully capable of maintaining continuous operation to avoid downtime and protect data integrity, regardless of any component failures.

For more from Spirotech, click here.

Joe Peck - 16 October 2025

Data Centre Infrastructure News & Trends

Enterprise Network Infrastructure: Design, Performance & Security

Exclusive

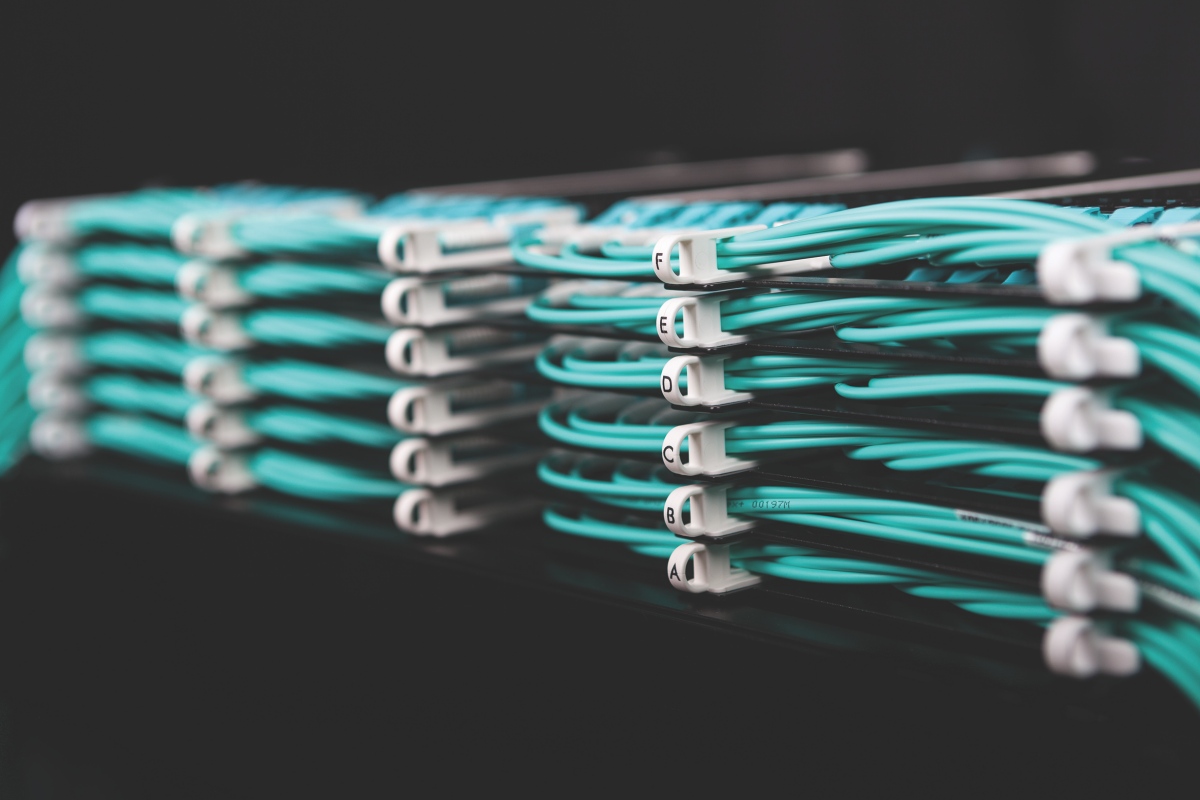

Building AI-ready networks: Smart cabling for the edge era

In this exclusive article for DCNN, Rachid Ait Ben Ali, Product & Solutions Manager, Smart Building & Data Center at Aginode, explores how next-generation fibre and automated management systems are redefining infrastructure for AI and edge computing:

Cabling for the future

As artificial intelligence and edge computing rapidly reshape data centre architectures, network infrastructure has to evolve to keep up. Critical applications such as autonomous systems, real-time analytics, and GPU-to-GPU communication for AI model training are highly sensitive to latency, signal degradation, and disruptions caused by excessive hops or amplification points. To meet fast-moving, rigorous requirements, cutting-edge cabling solutions - including ultra-dense fibre panels and the latest generation of Automated Infrastructure Management (AIM) systems - are essential.

A closer look at the requirements

AI-driven infrastructure is unforgiving of downtime and demands built-in redundancy. Workloads are often unpredictable and heavily overloaded, which means they need optical cabling that can absorb traffic spikes without performance degradation. Architectures supporting AI are non-linear and bandwidth-intensive, requiring designs with optimised physical pathways. Fibre networks in these settings must be capable of supporting transmission rates of 400G, 800G, and beyond.

At the same time, edge computing introduces a new level of complexity. Its highly distributed nature requires fibre connectivity that is not only robust and high-performing, but also low maintenance and compact enough to function within physical and power-constrained environments. As AI workloads - from training to inference - generate immense volumes of east-west traffic across dense GPU clusters, managing connectivity and thermal performance becomes critical. These clusters produce extreme heat, making thermally optimised cabling and carefully considered airflow vital.

Addressing challenges from a technical perspective

Everything starts with the right cabling. Pre-terminated fibre links support rapid and reliable deployment at hyperscale and edge sites. High CPR-rated solutions help ensure compliance with stringent fire safety standards. As organisations plan for upgrades to 800G or even 1.6 Tbps networks, deploying a future-ready cabling plant is essential. Smart labelling systems simplify identification, reduce downtime, and help teams operate more efficiently. Ultra-polished UPC and APC connectors minimise reflection and insertion loss - crucial for extremely latency-sensitive AI applications.

High-density fibre-optic cabling, such as MPO/MTP for parallel optics, enables scalable bandwidth in compact footprints. OM5 multimode fibre is well-suited for dense AI clusters and edge deployments. Supporting multiple wavelengths over short distances and offering tighter loss budgets, OM5 delivers high bandwidth without dramatically increasing cable volume. Modern cable designs enhance performance through quality shielding, precise construction, shorter channel lengths, and cleaner signal paths that reduce hops and signal loss.

The network architecture itself also plays a key role. Ethernet backbones operating at 400G and 800G, combined with direct-connect models like leaf-spine or fully meshed fabrics, reduce latency and support AI’s massive east-west traffic patterns. Minimising patching and interconnection points cuts down on signal attenuation and interference, further improving efficiency. Innovations such as ultra-dense fibre panels and MPO connectors help scale operations without overloading valuable rack space and enable rapid deployment while avoiding costly rewiring.
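The effect of each extra patching point can be seen in a simple channel loss estimate: fibre attenuation over the link length plus the loss of every mated connector pair. The attenuation, connector loss, and budget values below are placeholders chosen for illustration; real figures should come from the relevant component datasheets and the application standard.

```python
def channel_loss_db(length_m: float, fibre_db_per_km: float, connector_losses_db) -> float:
    """Estimated insertion loss: fibre attenuation plus the sum of connector losses."""
    return fibre_db_per_km * length_m / 1000.0 + sum(connector_losses_db)


# Placeholder values (assumptions): 3.0 dB/km multimode attenuation,
# 0.35 dB per mated MPO connection, and a 1.9 dB channel budget.
BUDGET_DB = 1.9
for n_connections in (2, 4, 6):
    loss = channel_loss_db(100.0, 3.0, [0.35] * n_connections)
    margin = BUDGET_DB - loss
    status = "within budget" if margin >= 0 else "over budget"
    print(f"{n_connections} connections: {loss:.2f} dB ({status})")
```

Over a 100 m link the fibre itself contributes little, so the connector count dominates, which is the arithmetic behind keeping patching and interconnection points to a minimum.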

Automated infrastructure management (AIM) systems are becoming indispensable, offering real-time visibility into port status, topology, and usage, and integrating with Data Centre Infrastructure Management (DCIM) and orchestration tools. By leveraging AI-powered tracking and analytics, AIM systems enable real-time monitoring and automate management tasks. This reduces mean time to repair (MTTR), minimises human error, and supports large-scale, AI-driven operations. In environments where model training may take months, cabling with embedded diagnostics helps maintain uninterrupted operation and transparency. Different types of fibre can be tracked, diagnosed, and reconfigured with minimal manual intervention. That’s essential for mission-critical systems.
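For readers unfamiliar with the metric, MTTR is simply the average time between a fault being detected and service being restored. The minimal sketch below computes it from a list of incident timestamps; the function name and the sample incidents are made up for illustration and do not come from any particular AIM or DCIM product.

```python
from datetime import datetime


def mean_time_to_repair_hours(incidents):
    """Average repair time in hours over (detected, restored) datetime pairs."""
    if not incidents:
        raise ValueError("at least one incident is required")
    total_seconds = 0.0
    for detected, restored in incidents:
        if restored < detected:
            raise ValueError("restored time precedes detected time")
        total_seconds += (restored - detected).total_seconds()
    return total_seconds / len(incidents) / 3600.0


if __name__ == "__main__":
    # Hypothetical incident log: repairs lasting 2 h, 1 h, and 3 h.
    sample = [
        (datetime(2025, 1, 6, 9, 0), datetime(2025, 1, 6, 11, 0)),
        (datetime(2025, 2, 3, 14, 0), datetime(2025, 2, 3, 15, 0)),
        (datetime(2025, 3, 10, 8, 0), datetime(2025, 3, 10, 11, 0)),
    ]
    print(f"MTTR: {mean_time_to_repair_hours(sample):.1f} hours")
```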

Further considerations

Smooth integration of high-performance fibre into AI and edge-ready environments demands careful attention to detail. As GPU-dense racks fill with fibre, insertion and connection loss must be minimised, which increases the importance of ultra-low-loss multimode solutions. Thermal and spatial constraints in AI deployments necessitate slim, compact cabling designs that do not compromise on performance. Adhering to industry standards, such as ISO/IEC 11801 for OM5 fibre and IEC 61754-7 for MPO connectors, ensures compatibility across hardware ecosystems and simplifies deployment at geographically distributed edge sites.
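The effect of each additional connection point on a link can be estimated with a simple loss-budget calculation: fibre attenuation over the channel length plus a loss allowance for every mated connector pair. The sketch below is a minimal example under stated assumptions; the attenuation figure, per-connection loss, and 1.5 dB budget are illustrative placeholders, not values from any standard or vendor datasheet.

```python
def channel_insertion_loss_db(length_m, attenuation_db_per_km,
                              mated_pairs, loss_per_pair_db):
    """Estimated channel loss: fibre attenuation plus connector losses (dB)."""
    if length_m < 0 or mated_pairs < 0:
        raise ValueError("length and connector count must be non-negative")
    fibre_loss = (length_m / 1000.0) * attenuation_db_per_km
    connector_loss = mated_pairs * loss_per_pair_db
    return fibre_loss + connector_loss


def budget_margin_db(budget_db, loss_db):
    """Remaining headroom in dB; a negative value means the budget is exceeded."""
    return budget_db - loss_db


if __name__ == "__main__":
    # All figures below are assumed placeholders for illustration only.
    BUDGET_DB = 1.5            # hypothetical allowed channel insertion loss
    ATTENUATION_DB_PER_KM = 3.0
    LOSS_PER_PAIR_DB = 0.35

    for pairs in (2, 4):
        loss = channel_insertion_loss_db(100, ATTENUATION_DB_PER_KM,
                                         pairs, LOSS_PER_PAIR_DB)
        margin = budget_margin_db(BUDGET_DB, loss)
        print(f"{pairs} mated pairs: loss {loss:.2f} dB, margin {margin:+.2f} dB")
```

With these assumed figures, doubling the number of connection points on a 100 m channel turns a comfortable margin into a shortfall, which is why each patching point matters.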

Looking ahead, future-proofing and sustainability are equally important. Investing in OM5 fibre today helps avoid the cost and disruption of replacement as bandwidth demands continue to grow. Automated cabling systems not only reduce operational expenditure but also enable agile provisioning, even in edge locations where power is constrained. Low-loss fibre reduces the need for signal amplification, conserving energy, while structured, durable panels minimise long-term maintenance waste.

To accommodate the demanding requirements of AI and edge computing, data centre design must transition from legacy copper and basic point-to-point fibre models to high-density, automated multimode fibre ecosystems. Smart cabling, ultra-dense panels, and intelligent management solutions form the backbone of this transformation. These technologies empower both hyperscale and edge operations with high bandwidth, low latency, and operational agility.

Meeting the needs of AI-ready infrastructure doesn’t just require speed; it demands ultra-high bandwidth, minimal latency, granular insights, simplified manageability, and flexible (re)configuration. With the right infrastructure, it’s possible to build networks that are powerful enough for AI at scale and flexible enough for edge deployment.

Joe Peck - 7 October 2025
